I have been looking at `BypassNewMeta`, and what it does is very simple while at the same time completely mind-blowing!

```python
class BypassNewMeta(type):
    "Metaclass: casts `x` to this class, initializing with `_new_meta` if available"
    def __call__(cls, x, *args, **kwargs):
        if hasattr(cls, '_new_meta'): x = cls._new_meta(x, *args, **kwargs)
        if cls!=x.__class__: x.__class__ = cls
        return x
```

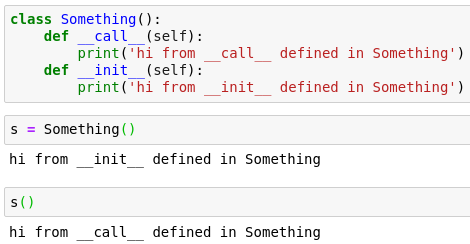
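To see what this metaclass does in practice, here is a small sketch. `Base` and `Fancy` are hypothetical classes I made up for illustration (they are not from the library); the metaclass itself is copied from above so the snippet runs on its own.

```python
class BypassNewMeta(type):
    "Metaclass: casts `x` to this class, initializing with `_new_meta` if available"
    def __call__(cls, x, *args, **kwargs):
        if hasattr(cls, '_new_meta'): x = cls._new_meta(x, *args, **kwargs)
        if cls != x.__class__: x.__class__ = cls
        return x

class Base:  # hypothetical plain class, uses the default metaclass `type`
    def __init__(self, value): self.value = value

class Fancy(Base, metaclass=BypassNewMeta):  # hypothetical subclass
    def shout(self): return f"{self.value}!"

b = Base(3)     # normal instantiation
f = Fancy(b)    # no new object is built: `b` is re-tagged as a `Fancy`

same_object = f is b        # the very same object comes back
new_type = type(b) is Fancy # its class has been swapped in place
shouted = f.shout()         # so the `Fancy` methods are now available
```

Note that `Fancy.__init__` is never run here; the existing object just gets a different `__class__`.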

The straightforward part is that when we define `__call__` on a class, we can call an instance of that class just like we would a function.

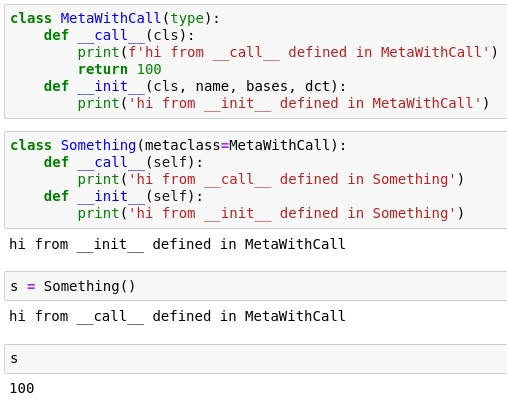
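In text form, the plain case looks roughly like this. `Greeter` is a hypothetical class used only for illustration.

```python
class Greeter:  # hypothetical example class
    def __init__(self, greeting):
        self.greeting = greeting

    def __call__(self, name):
        # Runs when an *instance* is called like a function
        return f"{self.greeting}, {name}!"

greet = Greeter("Hello")   # normal instantiation
message = greet("world")   # dispatches to Greeter.__call__(greet, "world")
```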

So far so good. But a class is itself an instance of its metaclass, so it exhibits the same behavior: calling the class invokes the `__call__` defined on the metaclass.

One would think that `Something()` creates a new object of type `Something`, and this is indeed the default behavior. But you can override `__call__` for the class (by defining it on the metaclass that the class is an instance of), and it can then do something completely different instead of instantiating an object!
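Here is a minimal sketch of that idea. `NoInstanceMeta` is a hypothetical metaclass I wrote for illustration, and `Something` is just the placeholder name used above; the point is only that `Something()` never reaches `__new__` or `__init__`.

```python
class NoInstanceMeta(type):  # hypothetical metaclass
    def __call__(cls, *args, **kwargs):
        # Replaces the default type.__call__, which would normally run
        # cls.__new__ and cls.__init__ to build an instance
        return f"called {cls.__name__} with {args}"

class Something(metaclass=NoInstanceMeta):
    def __init__(self):
        # Never executed, because the metaclass bypasses instantiation
        raise RuntimeError("__init__ should not run")

result = Something()  # returns a plain string, not a Something instance
```

`BypassNewMeta` uses the same hook, except that instead of returning an unrelated value it returns the object it was given, with its `__class__` swapped.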