Interesting, I fixed that now. Thanks for the tip!

Why does fastai now import everything for each application with `from fastai.vision.all import *`? What is the purpose of the `all` extension, which does not appear to be a file in the fastai library, as Python packaging would seem to require?

I want to learn more about the `bb_pad` function and why it is necessary. Is there any reference?

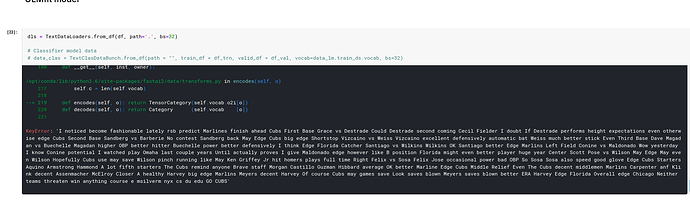

I am working with `TextBlock`, and there appears to be a logic bug (as best I can understand it).

I am looking at `TextBlock.from_folder(…)` (from fastbook 10_nlp.ipynb), which calls `Tokenizer.from_folder(path, **kwargs)` to build a `Tokenizer`.

`Tokenizer.from_folder()` seems to me to have an issue:

```python
@classmethod
@delegates(tokenize_folder, keep=True)
def from_folder(cls, path, tok_func=SpacyTokenizer, rules=None, **kwargs):
    path = Path(path)
    output_dir = Path(ifnone(kwargs.get('output_dir'), path.parent/f'{path.name}_tok'))
    if not output_dir.exists(): tokenize_folder(path, rules=rules, **kwargs)
    res = cls(get_tokenizer(tok_func, **kwargs), counter=(output_dir/fn_counter_pkl).load(),
              lengths=(output_dir/fn_lengths_pkl).load(), rules=rules, mode='folder')
    res.path,res.output_dir = path,output_dir
    return res
```

In `if not output_dir.exists(): tokenize_folder(path, rules=rules, **kwargs)`, `tokenize_folder()` is not passed `tok_func`, so it will be called with its default `tok_func=SpacyTokenizer`. The tokenizer that was actually requested is only created on the next line, in `get_tokenizer(tok_func, **kwargs)`. This means `output_dir` will always contain files preprocessed with `SpacyTokenizer`, regardless of the `tok_func` that was passed. Should the tokenizer be created first and then passed to both `tokenize_folder()` and `cls()`?

```python
tokenizer = get_tokenizer(tok_func, **kwargs)
if not output_dir.exists(): tokenize_folder(path, tok_func=tok_func, rules=rules, **kwargs)  # provide a way to pass either tokenizer or tok_func??
res = cls(tokenizer, counter=(output_dir/fn_counter_pkl).load(),
          lengths=(output_dir/fn_lengths_pkl).load(), rules=rules, mode='folder')
```

I am not an expert, so please forgive me if I do not have this correct. It just seemed to be a logic error which would not necessarily throw an error, but would prevent using these functions for different tokenizer functions beyond SpacyTokenizer.
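To make the concern concrete, here is a minimal sketch of the same forwarding pattern in plain Python. The functions are hypothetical stand-ins that only mimic the call structure; they are not fastai code. A named parameter captured by the outer function never travels through `**kwargs`, so the inner default silently wins unless it is forwarded explicitly.

```python
# Hypothetical stand-ins that mimic the call pattern only; this is not fastai code.
def tokenize_folder(path, tok_func="SpacyTokenizer", **kwargs):
    # Report which tokenizer the preprocessing step would use.
    return tok_func

def from_folder_buggy(path, tok_func="SpacyTokenizer", **kwargs):
    # tok_func is bound to the named parameter, so it is absent from kwargs.
    return tokenize_folder(path, **kwargs)

def from_folder_fixed(path, tok_func="SpacyTokenizer", **kwargs):
    # Forward the named parameter explicitly.
    return tokenize_folder(path, tok_func=tok_func, **kwargs)

buggy_choice = from_folder_buggy("data", tok_func="MyTokenizer")
fixed_choice = from_folder_fixed("data", tok_func="MyTokenizer")
print(buggy_choice, fixed_choice)
```

Neither variant raises an error, which is why this kind of slip is easy to miss.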

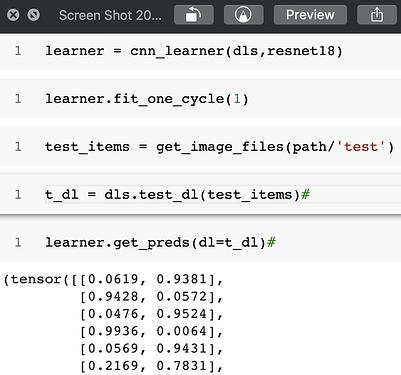

I trained a model on MNIST and looked at the predictions.

Now, when I set `with_loss=True`, I get the following error:

```
---------------------------------------------------------------------------
IndexError Traceback (most recent call last)
/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in _do_epoch_validate(self, ds_idx, dl)
174 self.dl = dl; self('begin_validate')
--> 175 with torch.no_grad(): self.all_batches()
176 except CancelValidException: self('after_cancel_validate')

29 frames
/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in all_batches(self)
142 self.n_iter = len(self.dl)
--> 143 for o in enumerate(self.dl): self.one_batch(*o)
144

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in one_batch(self, i, b)
156 except CancelBatchException: self('after_cancel_batch')
--> 157 finally: self('after_batch')
158

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in __call__(self, event_name)
123
--> 124 def __call__(self, event_name): L(event_name).map(self._call_one)
125 def _call_one(self, event_name):

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in map(self, f, *args, **kwargs)
371 else f.__getitem__)
--> 372 return self._new(map(g, self))
373

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _new(self, items, *args, **kwargs)
322 def _xtra(self): return None
--> 323 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)
324 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(cls, x, *args, **kwargs)
40
---> 41 res = super().__call__(*((x,) + args), **kwargs)
42 res._newchk = 0

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __init__(self, items, use_list, match, *rest)
313 if (use_list is not None) or not _is_array(items):
--> 314 items = list(items) if use_list else _listify(items)
315 if match is not None:

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _listify(o)
249 if isinstance(o, str) or _is_array(o): return [o]
--> 250 if is_iter(o): return list(o)
251 return [o]

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(self, *args, **kwargs)
215 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]
--> 216 return self.fn(*fargs, **kwargs)
217

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in _call_one(self, event_name)
126 assert hasattr(event, event_name)
--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]
128

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in <listcomp>(.0)
126 assert hasattr(event, event_name)
--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]
128

/usr/local/lib/python3.6/dist-packages/fastai2/callback/core.py in __call__(self, event_name)
23 (self.run_valid and not getattr(self, 'training', False)))
---> 24 if self.run and _run: getattr(self, event_name, noop)()
25 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit

/usr/local/lib/python3.6/dist-packages/fastai2/callback/core.py in after_batch(self)
88 if self.with_loss:
---> 89 bs = find_bs(self.yb)
90 loss = self.loss if self.loss.numel() == bs else self.loss.view(bs,-1).mean(1)

/usr/local/lib/python3.6/dist-packages/fastai2/torch_core.py in find_bs(b)
479 "Recursively search the batch size of `b`."
--> 480 return item_find(b).shape[0]
481

/usr/local/lib/python3.6/dist-packages/fastai2/torch_core.py in item_find(x, idx)
465 "Recursively takes the `idx`-th element of `x`"
--> 466 if is_listy(x): return item_find(x[idx])
467 if isinstance(x,dict):

IndexError: tuple index out of range

During handling of the above exception, another exception occurred:

IndexError Traceback (most recent call last)
<ipython-input-99-1754a45985a2> in <module>()
----> 1 learner.get_preds(dl=t_dl,with_loss=True)

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in get_preds(self, ds_idx, dl, with_input, with_decoded, with_loss, act, inner, **kwargs)
217 for mgr in ctx_mgrs: stack.enter_context(mgr)
218 self(event.begin_epoch if inner else _before_epoch)
--> 219 self._do_epoch_validate(dl=dl)
220 self(event.after_epoch if inner else _after_epoch)
221 if act is None: act = getattr(self.loss_func, 'activation', noop)

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in _do_epoch_validate(self, ds_idx, dl)
176 except CancelValidException: self('after_cancel_validate')
177 finally:
--> 178 dl,*_ = change_attrs(dl, names, old, has); self('after_validate')
179
180 def fit(self, n_epoch, lr=None, wd=None, cbs=None, reset_opt=False):

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in __call__(self, event_name)
122 def ordered_cbs(self, cb_func): return [cb for cb in sort_by_run(self.cbs) if hasattr(cb, cb_func)]
123
--> 124 def __call__(self, event_name): L(event_name).map(self._call_one)
125 def _call_one(self, event_name):
126 assert hasattr(event, event_name)

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in map(self, f, *args, **kwargs)
370 else f.format if isinstance(f,str)
371 else f.__getitem__)
--> 372 return self._new(map(g, self))
373
374 def filter(self, f, negate=False, **kwargs):

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _new(self, items, *args, **kwargs)
321 @property
322 def _xtra(self): return None
--> 323 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)
324 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)
325 def copy(self): return self._new(self.items.copy())

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(cls, x, *args, **kwargs)
39 return x
40
---> 41 res = super().__call__(*((x,) + args), **kwargs)
42 res._newchk = 0
43 return res

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __init__(self, items, use_list, match, *rest)
312 if items is None: items = []
313 if (use_list is not None) or not _is_array(items):
--> 314 items = list(items) if use_list else _listify(items)
315 if match is not None:
316 if is_coll(match): match = len(match)

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _listify(o)
248 if isinstance(o, list): return o
249 if isinstance(o, str) or _is_array(o): return [o]
--> 250 if is_iter(o): return list(o)
251 return [o]
252

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(self, *args, **kwargs)
214 if isinstance(v,_Arg): kwargs[k] = args.pop(v.i)
215 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]
--> 216 return self.fn(*fargs, **kwargs)
217
218 # Cell

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in _call_one(self, event_name)
125 def _call_one(self, event_name):
126 assert hasattr(event, event_name)
--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]
128
129 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in <listcomp>(.0)
125 def _call_one(self, event_name):
126 assert hasattr(event, event_name)
--> 127 [cb(event_name) for cb in sort_by_run(self.cbs)]
128
129 def _bn_bias_state(self, with_bias): return bn_bias_params(self.model, with_bias).map(self.opt.state)

/usr/local/lib/python3.6/dist-packages/fastai2/callback/core.py in __call__(self, event_name)
22 _run = (event_name not in _inner_loop or (self.run_train and getattr(self, 'training', True)) or
23 (self.run_valid and not getattr(self, 'training', False)))
---> 24 if self.run and _run: getattr(self, event_name, noop)()
25 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit
26

/usr/local/lib/python3.6/dist-packages/fastai2/callback/core.py in after_validate(self)
96 if not self.save_preds: self.preds = detuplify(to_concat(self.preds, dim=self.concat_dim))
97 if not self.save_targs: self.targets = detuplify(to_concat(self.targets, dim=self.concat_dim))
---> 98 if self.with_loss: self.losses = to_concat(self.losses)
99
100 def all_tensors(self):

/usr/local/lib/python3.6/dist-packages/fastai2/torch_core.py in to_concat(xs, dim)
211 def to_concat(xs, dim=0):
212 "Concat the element in `xs` (recursively if they are tuples/lists of tensors)"
--> 213 if is_listy(xs[0]): return type(xs[0])([to_concat([x[i] for x in xs], dim=dim) for i in range_of(xs[0])])
214 if isinstance(xs[0],dict): return {k: to_concat([x[k] for x in xs], dim=dim) for k in xs[0].keys()}
215 #We may receives xs that are not concatenatable (inputs of a text classifier for instance),

IndexError: list index out of range
```

I see there is a note in the source: `#We may receives xs that are not concatenatable (inputs of a text classifier for instance),`

And we have:

```
IndexError: tuple index out of range

During handling of the above exception, another exception occurred:
```

Now, since the ctx_mgr has changed (`loss_not_reduced()` has been appended), removing `with_loss=True` is not sufficient; I need to create my learner object again.

Not passing the `tok_func` is a mistake; I will fix it. We can't pass the tokenizer directly because we need to instantiate one in each subprocess that does the tokenization in parallel.

This should be moved to its own topic so we can discuss it more (I also need to investigate this closely and see if there is not a direct bug that is easily fixable but don’t have time to do this right now).

In general, this chat is great for announcements, but bug reports quickly get forgotten inside it, so their own topic coupled with a GitHub issue would probably be more suitable.

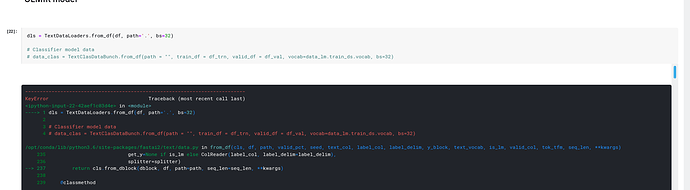

I have the same issue today. I installed with `pip3 install fastai2` and it worked very well on my laptop a few days ago. However, today when I tried to run `from fastai2.vision.all import *`, an error occurred.

Here is the error:

```
---------------------------------------------------------------------------
TypeError Traceback (most recent call last)
in
1 #hide
----> 2 from fastai2.vision.all import *
3 from utils import *
4
5 matplotlib.rc('image', cmap='Greys')

~/Projects/anaconda3/lib/python3.7/site-packages/fastai2/vision/all.py in
----> 1 from ..basics import *
2 from ..callback.all import *
3 from .augment import *
4 from .core import *
5 from .data import *

~/Projects/anaconda3/lib/python3.7/site-packages/fastai2/basics.py in
----> 1 from .data.all import *
2 from .optimizer import *
3 from .callback.core import *
4 from .learner import *
5 from .metrics import *

~/Projects/anaconda3/lib/python3.7/site-packages/fastai2/data/all.py in
1 from ..torch_basics import *
----> 2 from .core import *
3 from .load import *
4 from .external import *
5 from .transforms import *

~/Projects/anaconda3/lib/python3.7/site-packages/fastai2/data/core.py in
114 # Cell
115 @docs
--> 116 class DataLoaders(GetAttr):
117 "Basic wrapper around several DataLoaders."
118 _default='train'

~/Projects/anaconda3/lib/python3.7/site-packages/fastai2/data/core.py in DataLoaders()
127
128 def _set(i, self, v): self.loaders[i] = v
--> 129 train ,valid = add_props(lambda i,x: x[i], _set)
130 train_ds,valid_ds = add_props(lambda i,x: x[i].dataset)
131

~/Projects/anaconda3/lib/python3.7/site-packages/fastcore/utils.py in add_props(f, n)
530 def add_props(f, n=2):
531 "Create properties passing each of range(n) to f"
--> 532 return (property(partial(f,i)) for i in range(n))
533
534 # Cell

TypeError: 'function' object cannot be interpreted as an integer
```

~/Projects/anaconda3/lib/python3.7/site-packages/**fastcore**/utils.py in add_props(f, n)

530 def add_props(f, n=2):

The error seems to originate from fastcore. Try updating the fastcore package. If you have installed an editable version, you can pull the most recent version by running `git pull`. On my computer, I also need to run `pip install -e .` again. Both commands are run in the fastcore root folder.

As a good practice, always update both fastai2 and fastcore at the same time.
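The mismatch can be reproduced in isolation. The sketch below copies the `add_props(f, n=2)` definition shown in the traceback and calls it the way the `DataLoaders` line in the same traceback does, with a setter function as the second positional argument. The names `add_props_old`, `getter` and `setter` are made up for this example, and the newer fastcore signature is not shown in this thread, so it is not reproduced here.

```python
from functools import partial

def add_props_old(f, n=2):
    "Definition as shown in the traceback: the second argument is a count."
    return (property(partial(f, i)) for i in range(n))

def getter(i, x): return x[i]
def setter(i, x, v): x[i] = v

try:
    # Caller style from the traceback: the second positional argument is a setter.
    # range(setter) is evaluated as soon as the generator expression is created.
    add_props_old(getter, setter)
    error_message = None
except TypeError as e:
    error_message = str(e)

print(error_message)
```

With the older definition the setter lands in `n`, and `range(n)` fails at import time, which is consistent with the error above.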

NB: For better readability, when you embed an error stack trace in your message (as in your previous message), try wrapping it in ``` (3 backticks) at the beginning and the end of the trace, like this:

```

~/Projects/anaconda3/lib/python3.7/site-packages/fastcore/utils.py in add_props(f, n)
530 def add_props(f, n=2):
531 "Create properties passing each of range(n) to f"
--> 532 return (property(partial(f,i)) for i in range(n))

```

and it will be displayed like this:

```
~/Projects/anaconda3/lib/python3.7/site-packages/fastcore/utils.py in add_props(f, n)
530 def add_props(f, n=2):
531 "Create properties passing each of range(n) to f"
--> 532 return (property(partial(f,i)) for i in range(n))
```

Is the signature of a class supposed to be the same as that of its `__init__`?

For example, `Datasets?` shows all the args, while `Datasets.__init__?` shows kwargs (and fewer args).

This does not happen with all classes.

That’s because delegates only touches the signature of the class, not the signature of its init, I’d say.
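A minimal sketch of how that can happen, using only the standard library: `delegates_sketch`, `base_loader` and `Demo` are hypothetical and this is not fastcore's implementation. It only shows that setting `__signature__` on the class changes what `inspect.signature(cls)` reports while `cls.__init__` keeps its own signature.

```python
import inspect

def delegates_sketch(to):
    "Hypothetical class decorator: copy keyword defaults of `to` into the class signature."
    def decorator(cls):
        init_sig = inspect.signature(cls.__init__)
        # Keep the explicit parameters of __init__, minus self and **kwargs.
        params = [p for name, p in init_sig.parameters.items()
                  if name != 'self' and p.kind is not inspect.Parameter.VAR_KEYWORD]
        names = {p.name for p in params}
        # Add the defaulted parameters of `to` as keyword-only entries.
        for name, p in inspect.signature(to).parameters.items():
            if p.default is not inspect.Parameter.empty and name not in names:
                params.append(p.replace(kind=inspect.Parameter.KEYWORD_ONLY))
        # Only the class object gets a new signature; __init__ is left untouched.
        cls.__signature__ = inspect.Signature(params)
        return cls
    return decorator

def base_loader(items, n_inp=None, verbose=False): pass  # hypothetical delegation target

@delegates_sketch(base_loader)
class Demo:
    def __init__(self, items, **kwargs):
        self.items = items

class_params = list(inspect.signature(Demo).parameters)
init_params = list(inspect.signature(Demo.__init__).parameters)
print(class_params, init_params)
```

Tools that inspect the class see the expanded list, while tools that inspect `__init__` directly still see `**kwargs`.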

Hi @kdorichev,

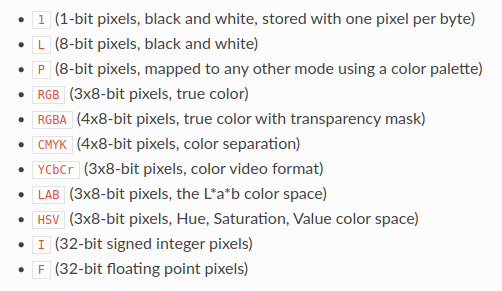

I’m trying to create a dataloader with X-ray lung images in reverse grayscale using PILcreate as follows:

```python
batch_tfms = [IntToFloatTensor(), *aug_transforms(size=512), Normalize.from_stats(*imagenet_stats)]
dblock = DataBlock(blocks = (ImageBlock(cls=PILImageBW), CategoryBlock),
                   get_items = get_image_files,
                   get_y = parent_label,
                   splitter = RandomSplitter(),
                   item_tfms = Resize(1024),
                   batch_tfms= batch_tfms)
dls = dblock.dataloaders(path, bs=8)
```

I modified `PILBase`, adding `gray_r` as the cmap, but nothing seems to change:

```python
class PILBase(Image.Image, metaclass=BypassNewMeta):
    _bypass_type=Image.Image
    _show_args = {'cmap':'gray_r'}
    _open_args = {'mode': 'gray_r'}
    @classmethod
    def create(cls, fn:(Path,str,Tensor,ndarray,bytes), **kwargs)->None:
        "Open an `Image` from path `fn`"
        if isinstance(fn,TensorImage): fn = fn.permute(1,2,0).type(torch.uint8)
        if isinstance(fn,Tensor): fn = fn.numpy()
        if isinstance(fn,ndarray): return cls(Image.fromarray(fn))
        if isinstance(fn,bytes): fn = io.BytesIO(fn)
        return cls(load_image(fn, **merge(cls._open_args, kwargs)))

    def show(self, ctx=None, **kwargs):
        "Show image using `merge(self._show_args, kwargs)`"
        return show_image(self, ctx=ctx, **merge(self._show_args, kwargs))

    def __repr__(self): return f'{self.__class__.__name__} mode={self.mode} size={"x".join([str(d) for d in self.size])}'
```

Also tried dls.show_batch(cmap='Greys_r') but images are still not modified.

Any idea where the problem is?

First, I guess there's no need to include `IntToFloatTensor`, as it is already included in the `ImageBlock`.

Secondly, you're trying to modify `_show_args` of the base class, but look at the definition of `PILImageBW`:

```python
class PILImageBW(PILImage): _show_args,_open_args = {'cmap':'Greys'},{'mode': 'L'}
```

The class simply overrides those attributes, and hence your changes are lost. So instead of modifying the base class, you should write your own semantic class, say `PILImageBWR`:

```python
class PILImageBWR(PILImage): _show_args,_open_args = {'cmap':'gray_r'},{'mode': 'L'}
```

and pass this as the `cls` argument to `ImageBlock`. One more thing: `_open_args` will be passed in to `PIL.Image.open()`, and I didn't see any mode named `gray_r`. Source
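The override behavior is plain Python class-attribute lookup, and can be seen without fastai. The classes below are bare stand-ins that reuse the names from this thread for readability only; they carry none of the real image logic, and the `'viridis'` default is an arbitrary placeholder.

```python
# Bare stand-ins: same names as in the thread, none of the real behavior.
class PILBase:
    _show_args = {'cmap': 'viridis'}

class PILImage(PILBase):
    pass

class PILImageBW(PILImage):
    _show_args = {'cmap': 'Greys'}    # overrides whatever the base class defines

# Editing the base class does not reach PILImageBW, whose own attribute wins.
PILBase._show_args = {'cmap': 'gray_r'}

class PILImageBWR(PILImage):
    _show_args = {'cmap': 'gray_r'}   # a dedicated subclass carries the new default

print(PILImageBW._show_args, PILImageBWR._show_args)
```

Attribute lookup stops at the first class in the hierarchy that defines `_show_args`, so only classes that do not override it see the change made on the base.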

How to dispatch a custom show_batch when I have multiple inputs?

For example, I have the following DataBlock on the PETs dataset:

```python
dblock = DataBlock((ImageBlock, ImageBlock, CategoryBlock), get_items=get_image_files,
                   get_y=RegexLabeller(r'^.*[\/](.*)_\d+.jpg$'),
                   item_tfms=[Resize(128)])
```

The problem is that `show_batch` already expects something of the form `x, y, samples`, and in the given example the two inputs end up inside a tuple. I tried something like:

```python
from typing import Tuple

@typedispatch
def show_batch(x:Tuple[TensorImage, TensorImage], y, samples, **kwargs):
    ...
```

But that throws an error:

```
~/libs/fastcore/fastcore/dispatch.py in <listcomp>(.0)
50 "Find first matching type that is a super-class of `k`"
51 if k not in self.cache:
---> 52 types = [f for f in self.d if k==f or (isinstance(k,type) and issubclass(k,f))]
53 self.cache[k] = [self.d[o] for o in types]
54 return self.cache[k]

~/anaconda3/envs/dl/lib/python3.7/typing.py in __subclasscheck__(self, cls)
719 if cls._special:
720 return issubclass(cls.__origin__, self.__origin__)
--> 721 raise TypeError("Subscripted generics cannot be used with"
722 " class and instance checks")
723

TypeError: Subscripted generics cannot be used with class and instance checks
```

I know I can make a custom type for the two inputs with its own custom show method (just like we did for the Siamese example), but I think that defeats the purpose of our DataBlock being able to handle multiple inputs/outputs (everything can always be refactored as a single input-output problem anyway).

I think it would be a good idea (both for learning and for ease of use) to replicate the Siamese example using only standard blocks; it's simply an (Image, Image) -> Category problem.

There is no other solution than creating a type for the tuple of images, because Python does not work with type annotations like `Tuple[TensorImage, TensorImage]`. For example, if you have a tuple of `TensorImage`,

```python
isinstance(x, Tuple[TensorImage, TensorImage])
```

does not work. (Plus, `Tuple` is also used in fastai for something else.)

We could create a special annotation type that would work specifically for this case and that we would manually check. Let me see if it’s hard to add or not.
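The limitation is easy to check in plain Python. In the sketch below, `FakeImage` is a hypothetical stand-in for `TensorImage` and `ImagePair` is a made-up example of a dedicated tuple type; neither is part of fastai.

```python
from typing import Tuple

class FakeImage:
    "Hypothetical stand-in for TensorImage."

pair = (FakeImage(), FakeImage())

try:
    # Subscripted generics are rejected by isinstance/issubclass.
    isinstance(pair, Tuple[FakeImage, FakeImage])
    check_failed = False
except TypeError:
    check_failed = True

class ImagePair(tuple):
    "Hypothetical dedicated tuple type that isinstance (and type dispatch) can see."

typed_pair = ImagePair(pair)
print(check_failed, isinstance(typed_pair, ImagePair))
```

A plain tuple carries no information about its element types at runtime, which is why a named subclass is the usual workaround.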

Another solution could be to always expand the batch when sending to show_batch, then we could do something like:

```python
@typedispatch
def show_batch(x1:TensorImage, x2:TensorImage, y, samples, **kwargs):
    ...
```

I don’t know how well this goes with the current design though…

No, that wouldn't work, since the general method always sends the input and the target (as tuples if there is more than one). And the type dispatch system works with a fixed number of arguments, not a flexible one.

Default behavior changed: `Learner.get_preds` now comes with `reorder=True` by default, which will reorder the predictions into the input order (for dataloaders that shuffle, sort by length, etc.). It will even work for non-deterministic samplers (like a shuffled dataloader).
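Conceptually, reordering means putting each prediction back at the position of the input it came from. The sketch below shows that idea in plain Python; `restore_input_order` is a hypothetical helper, not fastai's implementation, and it assumes the loader can report the indices it visited.

```python
import random

def restore_input_order(preds, idxs):
    "Place each prediction at the position of the input it came from."
    ordered = [None] * len(preds)
    for pred, idx in zip(preds, idxs):
        ordered[idx] = pred
    return ordered

inputs = [10, 20, 30, 40, 50]
idxs = list(range(len(inputs)))
random.Random(0).shuffle(idxs)                   # order in which a shuffled loader visits items
shuffled_preds = [inputs[i] * 2 for i in idxs]   # toy "model": doubles its input
restored = restore_input_order(shuffled_preds, idxs)
print(restored)
```

Because the visited indices are recorded rather than recomputed, the same approach works even when the sampling order is not reproducible.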

I got `show_batch` working on multiple axes. This approach has several limitations, and I would like your feedback on how to make the code cleaner.

```python
@typedispatch
def show_batch(x:SiameseImage, y, samples, ctxs=None, max_n=6, nrows=None, ncols=4, figsize=None, **kwargs):
    if figsize is None: figsize = (ncols*6, max_n//ncols * 3)
    n = min(x[0].shape[0], max_n)
    if ctxs is None: ctxs = get_grid(n*2, nrows=None, ncols=ncols, figsize=figsize)
    for i in range(2):
        ctxs[i::2] = [TensorImage(b).show(ctx=c, **kwargs) for b,c,_ in zip(x[i],ctxs[i::2], range(n))]
    return ctxs
```

The `2` here is hardcoded because a Siamese pair has two images. We could generalize this block for more than two (I'm aiming for 5-6 in the case of multiple segmentation masks). A few doubts:

- Why do I need to cast `b` to `TensorImage` when `explode_types(x)` has the following output:

```
{__main__.SiameseImage: [fastai2.torch_core.TensorImage,
  fastai2.torch_core.TensorImage,
  torch.Tensor]}
```

- How can I label certain axes with an item coming out of the batch (say `same` in this case)? One possible solution that came to mind was:

```python
ctxs[i::2] = [TensorImage(b).show(ctx=c,title=l.item() if i % 2 == 0 else None, **kwargs) for b,l,c,_ in zip(x[i],x[2],ctxs[i::2], range(n))]
```

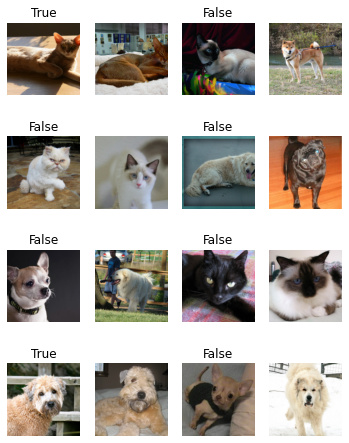

This was able to produce the output shown above.

Any thoughts on using `islice` from `itertools` to pass in a list of ctxs to `SiameseImage.show`?

EDIT: I guess I have the answer for 1. `x` and `y` hold batches of their respective blocks, i.e. `x:SiameseImage` will hold 2 sets of 64 images under `TensorImage` (bs=64), but when you index into it, the underlying tensors have no semantic types. However, `samples` holds 64 pairs of transformed input data, so `samples[0]` will have one pair of (`TensorImage`, `TensorImage` and bool), which is the expected behavior. So, instead of indexing into `x` as I did above, we should use `samples.itemgot(0).itemgot(i)` (`0` to access the `SiameseImage`, and then the next `itemgot` to access a particular `TensorImage` out of the tuple).