You need to pass is_lm=True so it knows it’s for a language model.

Hi @kshitijpatil09,

Thanks for the feedback. I tried to use

class PILImageBWR(PILImage): _show_args,_open_args = {'cmap':'gray_r'},{'mode': 'L'}

in ImageBlock(cls=PILImageBWR) inside a DataBlock, but dls.show_batch() still shows the images unchanged. Moreover, changing cmap to an arbitrary name (like cmap=foo) does not throw any error, whereas changing mode does. Could something else in DataBlock be overriding cmap?

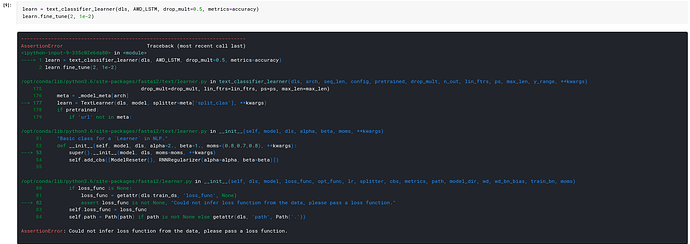

I have been trying to convert a language model from fastai v1 to fastai2. When I run text_classifier_learner, it asks for a loss function, whereas the tutorial example notebook does not need to pass any loss function for the IMDB dataset.

Hey, you also need to define the PILImageBWR Tensor class, like so:

class PILImageBWR(PILImage): _show_args,_open_args = {'cmap':'gray_r'},{'mode': 'L'}

class TensorImageBWR(TensorImage): _show_args = PILImageBWR._show_args

PILImageBWR._tensor_cls = TensorImageBWR

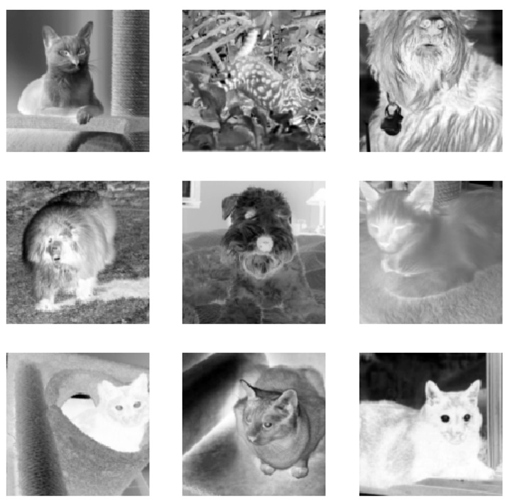

I then tested the above in the Pets dataset:

dblock = DataBlock((ImageBlock(PILImageBWR),), get_items=get_image_files,
                   item_tfms=[Resize(128)])

dls = dblock.dataloaders(source)

dls.show_batch()

Btw, @sgugger why are we using Greys colormap in PILImageBW instead of gray?

Greys leads to inverted black-and-white images, as shown above.

We use this default for MNIST.

That’s true. I’ve created a custom class just to make it ‘gray’. It feels like redundant code just to change the ‘cmap’ of a particular type, but it seems we don’t have any other option.

@Joan I missed this part

EDIT: One doubt I have with this approach: say I want everything PILMask offers except the cmap. Won’t defining our own class as above interfere with the transforms defined for TensorMask?

For instance, would IntToFloatTensor or Resize, which are defined for TensorMask, still work with my classes?

Yeah, and I think gray would be the desired choice for a wider range of tasks.

Is it a good idea to change the default to gray and make a custom class when dealing with MNIST?

Btw, you can easily change the colormap without having to create a new class by doing:

PILImageBW._show_args['cmap'] = 'gray'
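Why a one-line assignment is enough can be sketched without fastai. The classes below are hypothetical stand-ins (not fastai's real ones) that only mimic the attribute layout used in this thread: a class-level `_show_args` dict that the tensor class aliases rather than copies.

```python
# Hypothetical stand-ins; they only mimic the attribute layout discussed above.
class FakePILImage:
    _show_args = {'cmap': 'viridis'}

    def show_kwargs(self, **kwargs):
        # Per-call kwargs override the class-level defaults.
        return {**self._show_args, **kwargs}

class FakePILImageBW(FakePILImage):
    _show_args = {'cmap': 'Greys'}      # subclass gets its own dict

class FakeTensorImageBW:
    # Aliases the same dict object instead of copying it.
    _show_args = FakePILImageBW._show_args

# In-place mutation, same shape as the one-liner above.
FakePILImageBW._show_args['cmap'] = 'gray'

img = FakePILImageBW()
print(img.show_kwargs())                # {'cmap': 'gray'}
print(FakeTensorImageBW._show_args)     # {'cmap': 'gray'} (same dict object)
print(FakePILImage._show_args)          # {'cmap': 'viridis'} (parent untouched)
print(img.show_kwargs(cmap='magma'))    # {'cmap': 'magma'}
```

Note that in this sketch the change is global for the whole session and affects every object that shares the dict, which is convenient in a notebook but easy to forget about later.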

I made some progress getting the show_batch method to work with labels as well. Earlier I was passing the label as title to b.show(), but now labels can have their own show definition too.

@typedispatch
def show_batch(x:SiameseImage, y, samples, ctxs=None, max_n=6, nrows=None, ncols=4, figsize=None, **kwargs):
    n = min(x[0].shape[0], max_n)
    if ctxs is None: ctxs = get_grid(n*2, nrows=None, ncols=ncols, figsize=figsize)
    xiter = samples.itemgot(0)
    def _show(b,l,c,show_label=False, **kwargs):
        c = b.show(ctx=c, **kwargs)
        if show_label: c = l.show(ctx=c, **kwargs)
        return c
    for i in range(2):
        ctxs[i::2] = [_show(b,l,c,i%2==0) for b,l,c,_ in zip(xiter.itemgot(i),samples.itemgot(1),ctxs[i::2], range(n))]
    return ctxs

Now this works with MultiCategoryBlock or any similar custom label definition. For tuples of m images, the show_label condition (i%2==0) could be generalized to (i%m==0), with the loop and the slices using m instead of 2, so that the label is shown on every m-th axis.
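The interleaving done by the `ctxs[i::2]` slice assignment can be checked in isolation. This is a plain-Python sketch with made-up data: strings stand in for images, labels and axes, and `m = 3` is an arbitrary choice to show the generalization to tuples of m images.

```python
m = 3                      # images per tuple (hypothetical; the snippet above uses 2)
n = 2                      # number of samples shown
# Fake samples: (tuple of m image names, label)
samples = [(("a0", "a1", "a2"), "same"), (("b0", "b1", "b2"), "different")]
axes = [None] * (n * m)    # stand-in for the flat list of plot axes

for i in range(m):
    show_label = (i % m == 0)          # label only on the first axis of each tuple
    # Every m-th slot starting at i receives the i-th image of each sample.
    axes[i::m] = [
        f"{imgs[i]}+{label}" if show_label else imgs[i]
        for imgs, label in samples
    ]

print(axes)
# ['a0+same', 'a1', 'a2', 'b0+different', 'b1', 'b2']
```

Each sample ends up occupying m consecutive axes, with its label attached to the first of them.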

It won’t, the transforms are still applied to all subclasses of TensorMask thanks to typedispatch.

I’ve written a very quick introduction to typedispatch here
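As a rough analogy only, the standard library's `functools.singledispatch` shows the same subclass behaviour. It is not fastcore's typedispatch (which, as far as I know, can also dispatch on more than one argument), and the class names below are hypothetical stand-ins.

```python
from functools import singledispatch

class TensorMask: ...                  # hypothetical stand-in, not fastai's class
class MyTensorMask(TensorMask): ...    # custom subclass, e.g. with a different cmap
class Unrelated: ...                   # does not inherit from TensorMask

@singledispatch
def to_float(x):
    # Fallback used when no registered type matches.
    return "untouched"

@to_float.register
def _(x: TensorMask):
    # Rule registered for TensorMask; subclasses resolve to it as well.
    return "mask rule applied"

print(to_float(TensorMask()))      # mask rule applied
print(to_float(MyTensorMask()))    # mask rule applied
print(to_float(Unrelated()))       # untouched
```

The third call is the case to watch for: a class that only looks like a mask but does not inherit from the dispatched type falls through to the default.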

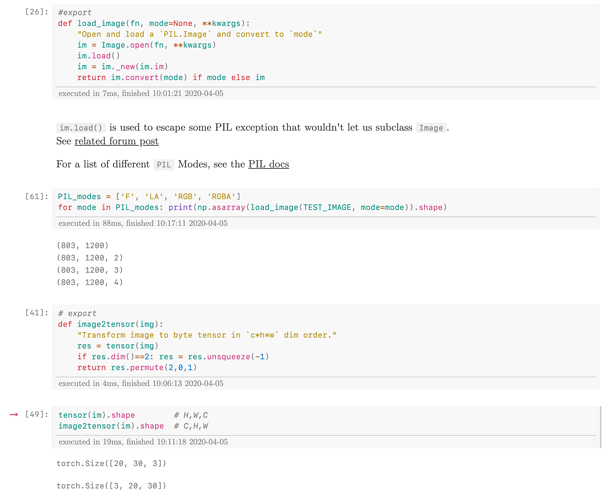

Following up a bit on this issue: I am trying to add histogram equalization for the images I am working with by adding a line to load_image, like this:

def load_image_eq(fn, mode=None, **kwargs):
    "Open and load a `PIL.Image` and convert to `mode`"
    im = Image.open(fn, **kwargs)
    im.load()
    im = im._new(im.im)
    im = ImageOps.equalize(im, mask=None)  # added to perform histogram equalization
    return im.convert(mode) if mode else im
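For readers unfamiliar with the operation, here is a minimal sketch of textbook CDF-based histogram equalization on a flat list of gray values. It is an illustration of the idea only; Pillow's `ImageOps.equalize` may differ in its exact rounding and lookup-table details.

```python
def equalize(pixels, levels=256):
    """Textbook CDF-based histogram equalization for a flat list of gray values."""
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1

    # Cumulative count of pixels at or below each gray level.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)

    cdf_min = next(c for c in cdf if c > 0)   # CDF at the darkest level present
    total = len(pixels)
    if total == cdf_min:                      # constant image: nothing to spread
        return list(pixels)

    # Lookup table that stretches the CDF over the full output range.
    lut = [round(max(c - cdf_min, 0) / (total - cdf_min) * (levels - 1)) for c in cdf]
    return [lut[p] for p in pixels]

low_contrast = [100, 100, 101, 102, 102, 103]
print(equalize(low_contrast))   # [0, 0, 64, 191, 191, 255]
```

The narrow 100 to 103 range is spread over 0 to 255, which is the contrast boost the added line is after.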

I am creating a new class for it:

class PILBase_eq(Image.Image, metaclass=BypassNewMeta):
    _bypass_type=Image.Image
    _show_args = {'cmap':'gray'}
    _open_args = {'mode': 'L'}
    @classmethod
    def create(cls, fn:(Path,str,Tensor,ndarray,bytes), **kwargs)->None:
        "Open an `Image` from path `fn`"
        if isinstance(fn,TensorImage): fn = fn.permute(1,2,0).type(torch.uint8)
        if isinstance(fn,Tensor): fn = fn.numpy()
        if isinstance(fn,ndarray): return cls(Image.fromarray(fn))
        if isinstance(fn,bytes): fn = io.BytesIO(fn)
        return cls(load_image_eq(fn, **merge(cls._open_args, kwargs)))

    def show(self, ctx=None, **kwargs):
        "Show image using `merge(self._show_args, kwargs)`"
        return show_image(self, ctx=ctx, **merge(self._show_args, kwargs))

    def __repr__(self): return f'{self.__class__.__name__} mode= {self.mode} size={"x".join([str(d) for d in self.size])}'

class PILImage_eq(PILBase_eq): pass

class TensorImage_eq(TensorImage): _show_args = PILImage_eq._show_args

PILImage_eq._tensor_cls = TensorImage_eq

However, when trying to generate the dataloader I got the following error:

dls = dblock.dataloaders(path, bs=8)

dls.show_batch()

Could not do one pass in your dataloader, there is something wrong in it

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-29-f74dbf2b72eb> in <module>

1 dls = dblock.dataloaders(path, bs=8)

----> 2 dls.show_batch()

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/core.py in show_batch(self, b, max_n, ctxs, show, **kwargs)

88

89 def show_batch(self, b=None, max_n=9, ctxs=None, show=True, **kwargs):

---> 90 if b is None: b = self.one_batch()

91 if not show: return self._pre_show_batch(b, max_n=max_n)

92 show_batch(*self._pre_show_batch(b, max_n=max_n), ctxs=ctxs, max_n=max_n, **kwargs)

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in one_batch(self)

129 def one_batch(self):

130 if self.n is not None and len(self)==0: raise ValueError(f'This DataLoader does not contain any batches')

--> 131 with self.fake_l.no_multiproc(): res = first(self)

132 if hasattr(self, 'it'): delattr(self, 'it')

133 return res

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastcore/utils.py in first(x)

174 def first(x):

175 "First element of `x`, or None if missing"

--> 176 try: return next(iter(x))

177 except StopIteration: return None

178

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in __iter__(self)

95 self.randomize()

96 self.before_iter()

---> 97 for b in _loaders[self.fake_l.num_workers==0](self.fake_l):

98 if self.device is not None: b = to_device(b, self.device)

99 yield self.after_batch(b)

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/torch/utils/data/dataloader.py in __next__(self)

343

344 def __next__(self):

--> 345 data = self._next_data()

346 self._num_yielded += 1

347 if self._dataset_kind == _DatasetKind.Iterable and \

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/torch/utils/data/dataloader.py in _next_data(self)

383 def _next_data(self):

384 index = self._next_index() # may raise StopIteration

--> 385 data = self._dataset_fetcher.fetch(index) # may raise StopIteration

386 if self._pin_memory:

387 data = _utils.pin_memory.pin_memory(data)

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/torch/utils/data/_utils/fetch.py in fetch(self, possibly_batched_index)

32 raise StopIteration

33 else:

---> 34 data = next(self.dataset_iter)

35 return self.collate_fn(data)

36

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in create_batches(self, samps)

104 self.it = iter(self.dataset) if self.dataset is not None else None

105 res = filter(lambda o:o is not None, map(self.do_item, samps))

--> 106 yield from map(self.do_batch, self.chunkify(res))

107

108 def new(self, dataset=None, cls=None, **kwargs):

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in do_batch(self, b)

125 def create_item(self, s): return next(self.it) if s is None else self.dataset[s]

126 def create_batch(self, b): return (fa_collate,fa_convert)[self.prebatched](b)

--> 127 def do_batch(self, b): return self.retain(self.create_batch(self.before_batch(b)), b)

128 def to(self, device): self.device = device

129 def one_batch(self):

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in create_batch(self, b)

124 def retain(self, res, b): return retain_types(res, b[0] if is_listy(b) else b)

125 def create_item(self, s): return next(self.it) if s is None else self.dataset[s]

--> 126 def create_batch(self, b): return (fa_collate,fa_convert)[self.prebatched](b)

127 def do_batch(self, b): return self.retain(self.create_batch(self.before_batch(b)), b)

128 def to(self, device): self.device = device

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in fa_collate(t)

44 b = t[0]

45 return (default_collate(t) if isinstance(b, _collate_types)

---> 46 else type(t[0])([fa_collate(s) for s in zip(*t)]) if isinstance(b, Sequence)

47 else default_collate(t))

48

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in <listcomp>(.0)

44 b = t[0]

45 return (default_collate(t) if isinstance(b, _collate_types)

---> 46 else type(t[0])([fa_collate(s) for s in zip(*t)]) if isinstance(b, Sequence)

47 else default_collate(t))

48

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/fastai2/data/load.py in fa_collate(t)

45 return (default_collate(t) if isinstance(b, _collate_types)

46 else type(t[0])([fa_collate(s) for s in zip(*t)]) if isinstance(b, Sequence)

---> 47 else default_collate(t))

48

49 # Cell

~/anaconda3/envs/fastai2/lib/python3.7/site-packages/torch/utils/data/_utils/collate.py in default_collate(batch)

79 return [default_collate(samples) for samples in transposed]

80

---> 81 raise TypeError(default_collate_err_msg_format.format(elem_type))

TypeError: default_collate: batch must contain tensors, numpy arrays, numbers, dicts or lists; found <class '__main__.PILImage_eq'>
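The last line of the traceback says the items were still PILImage_eq objects when they reached default_collate. A likely reason (an assumption, not confirmed in this thread) is that the image-to-tensor step never ran for the custom class. The toy collate below is a hypothetical stand-in, not PyTorch's implementation; it only reproduces the kind of type check that raises here.

```python
from numbers import Number

class FakeImage:
    """Hypothetical stand-in for an image that was never converted to a tensor."""

def simple_collate(batch):
    """Toy collate: accepts numbers or sequences of numbers, rejects anything else."""
    elem = batch[0]
    if isinstance(elem, Number):
        return list(batch)
    if isinstance(elem, (list, tuple)):
        # Transpose the batch and collate each column separately.
        return [simple_collate(col) for col in zip(*batch)]
    raise TypeError(f"batch must contain numbers or sequences; found {type(elem)}")

print(simple_collate([(1, 2), (3, 4)]))   # [[1, 3], [2, 4]]

try:
    simple_collate([(FakeImage(), 0), (FakeImage(), 1)])
except TypeError as e:
    print("TypeError:", e)                # raised on the image column
```

So the thing to check is whether each item is converted to a tensor before batching, rather than the collate function itself.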

I see there’s a # TODO: docs in nbs/07_vision.core.ipynb

I’m a bit unclear on what exactly the documentation should look like. Would something like this work?

There’s no need to modify the base class for this. Your operation has to be performed on a PIL.Image, and the item-level representation of images is already a PIL.Image. So you can simply apply it at the item level, like so:

pipe = Pipeline([PILImage.create, ImageOps.equalize]) #pipeline

ds = Datasets(items, tfms=pipe) #datasets

def HImageBlock():  # for DataBlock
    return TransformBlock(type_tfms=[PILImage.create,ImageOps.equalize], batch_tfms=IntToFloatTensor)

For the documentation, please refer to this topic. We don’t need help for notebooks where we haven’t done the documentation yet.

Because of the amazing work @boris has been doing for a while, you need to make sure you have the latest fastcore to run fastai2 (as is always good practice). So if you see the error "log_args is not defined" while trying to use fastai2, make sure your editable install of fastcore is up to date with master.

@log_args(but='dls,model')

What does log_args do?

thanks

It adds the args you used into self.init_args.

The idea is to easily debug when you have a problem by seeing all the args that have been used.

Also it can be used by logging callbacks (eg WandbCallback) to be automatically logged as config parameters.

It is going to be really sweet once finished, I’m pretty excited about it
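To make the description concrete, here is a minimal sketch of a decorator that records constructor arguments in self.init_args and skips the names listed in but. It is not fastcore's actual implementation, which may differ in how it is applied and in how the keys are named; `log_args_sketch` and `ToyLearner` are hypothetical names.

```python
import functools
import inspect

def log_args_sketch(but=""):
    """Decorator factory: store the arguments passed to __init__ in self.init_args."""
    skipped = {name.strip() for name in but.split(",") if name.strip()}

    def decorator(init):
        sig = inspect.signature(init)

        @functools.wraps(init)
        def wrapper(self, *args, **kwargs):
            bound = sig.bind(self, *args, **kwargs)
            bound.apply_defaults()             # also record defaults that were not passed
            self.init_args = {
                name: value
                for name, value in bound.arguments.items()
                if name != "self" and name not in skipped
            }
            return init(self, *args, **kwargs)
        return wrapper
    return decorator

class ToyLearner:                              # hypothetical class, not fastai's Learner
    @log_args_sketch(but="dls,model")
    def __init__(self, dls, model, lr=1e-3, wd=0.01):
        self.dls, self.model, self.lr, self.wd = dls, model, lr, wd

learn = ToyLearner("my-dls", "my-model", lr=3e-3)
print(learn.init_args)   # {'lr': 0.003, 'wd': 0.01}
```

Excluding large objects such as the data loaders and the model keeps the recorded dict small enough to print while debugging or to hand to a logging callback.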

I see this error too, and I was installing like this:

pip install git+https://github.com/fastai/fastai2.git

pip install git+https://github.com/fastai/fastcore.git

Solved by installing in this order instead:

pip install git+https://github.com/fastai/fastcore.git@master

pip install git+https://github.com/fastai/fastai2.git@master

@sgugger maybe it is a good idea to update the FAQ, because it lists the order that caused the error for me: Fastai-v2 FAQ and links (read this before posting please!)