Hi shruti_01 hope you are having a fun day! I am currently following this course A walk with fastai2 - Study Group and Online Lectures Megathread run by muellerzr.

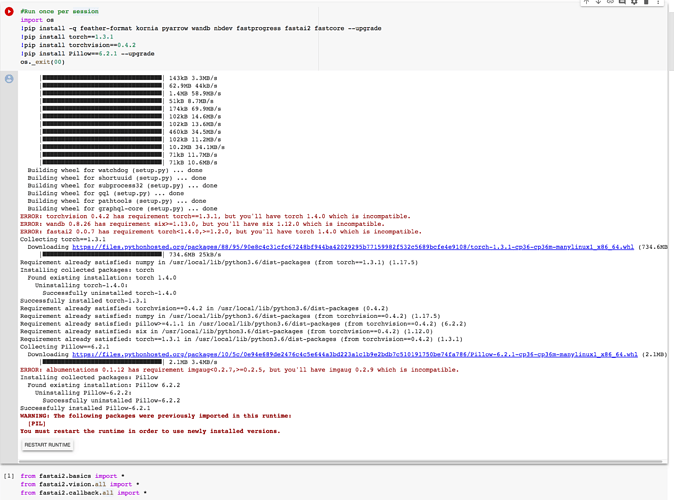

If you look at the start of the notebooks we do some uninstalling of libraries so that we can import the fastai2 modules successfully. It looks like you could be having a similar issue.

Maybe this image can help you resolve your issue.

Cheers mrfabulous1