Hello!

I’m currently developing on a Jetson Xavier, using FastAI for a classification task.

I’ve already trained my model with FastAI on a desktop machine, exported it in pickle format, and copied it to the Jetson.

I’m currently using a resnet34 model.

I’m having two issues.

The first one is inference time. Running the model on the CPU took around 0.25 seconds per iteration (predicting a single image). After checking whether it was using the GPU and forcing it to, I saw an improvement to 0.15-0.18 seconds per prediction. I went further and called the `to_fp16` method on the learner, which brought it down to 0.09-0.11 seconds. I also tested the model in plain PyTorch, and even exported the PyTorch model to TensorRT, which ran around 10 times faster. However, those predictions are not correct; I’m guessing FastAI does some preprocessing on the image first, but I haven’t been able to check the code yet.

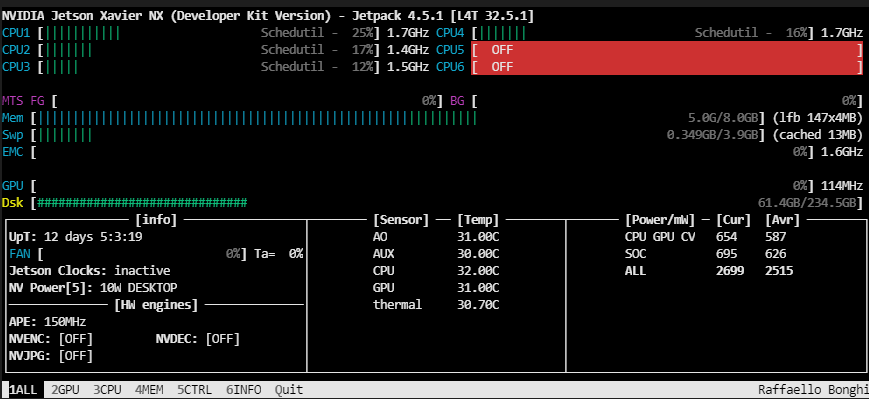
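The mismatch between the plain PyTorch/TensorRT predictions and FastAI's may come from preprocessing. Assuming the learner was created with `cnn_learner` on a pretrained resnet34, FastAI by default normalizes inputs with the ImageNet statistics, and `predict` typically also applies the training-time resize and a softmax to the output. The sketch below reproduces only the scaling and normalization in numpy. It assumes the image is already resized the same way as during training (the size and resize method come from the `item_tfms`, which are not shown here) and is in RGB order (OpenCV's `imread` returns BGR). `preprocess` and `softmax` are hypothetical helper names.

```python
import numpy as np

# ImageNet statistics (the values in fastai's `imagenet_stats`).
IMAGENET_MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
IMAGENET_STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)


def preprocess(img_hwc_uint8: np.ndarray) -> np.ndarray:
    """Turn an RGB uint8 image of shape (H, W, 3), ASSUMED to be already
    resized like in training, into a float32 batch of shape (1, 3, H, W)."""
    if img_hwc_uint8.ndim != 3 or img_hwc_uint8.shape[2] != 3:
        raise ValueError("expected an RGB image of shape (H, W, 3)")
    x = img_hwc_uint8.astype(np.float32) / 255.0  # scale pixels to [0, 1]
    x = (x - IMAGENET_MEAN) / IMAGENET_STD        # per-channel normalization
    x = x.transpose(2, 0, 1)                      # HWC -> CHW
    return np.ascontiguousarray(x[np.newaxis])    # add batch dimension


def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis; the raw model
    returns logits, while FastAI's predict reports probabilities."""
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)
```

The resulting array could then be turned into a tensor with `torch.from_numpy(batch).cuda()` (adding `.half()` if the model was converted to fp16). Softmax does not change the arg-max, so if the top class still differs after normalization, the resize step is the next thing to compare.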

The second issue is RAM usage. I read on some forums that the Jetson Xavier shares memory between the CPU and GPU, but it’s still using around 4 GB once the model is loaded, plus an extra 1 GB after the first inference (which takes around 30 seconds for some reason; maybe CUDA initialization?).

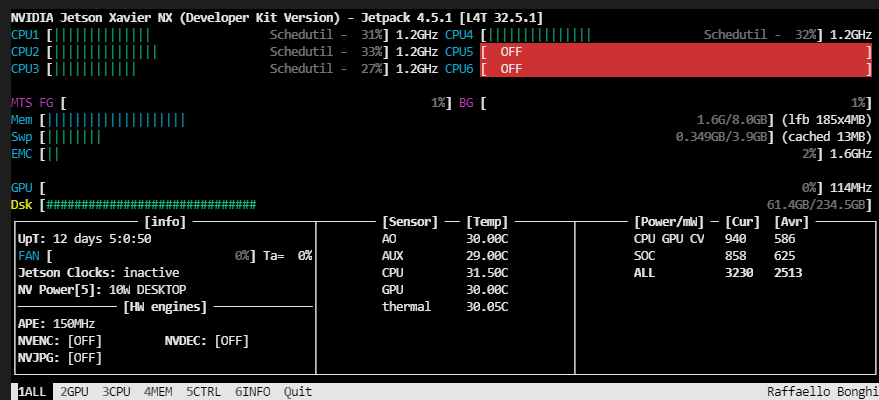

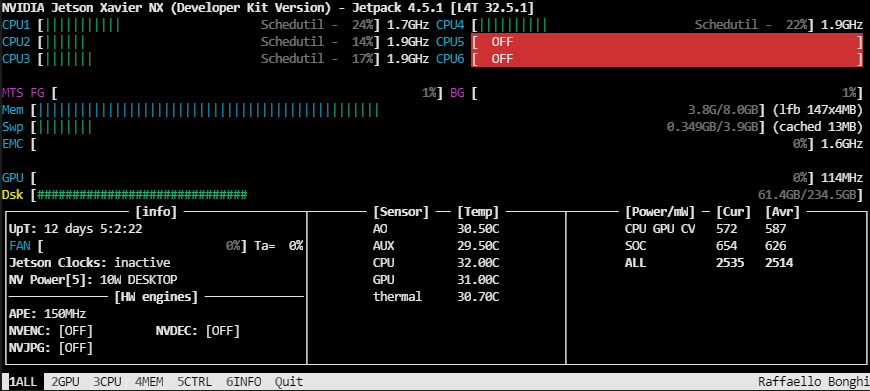

Here are some pictures of RAM usage before and after loading the model:

Before

Model Loaded

After the first inference
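The three snapshots above (before, model loaded, after the first inference) could also be recorded from inside the script with a small stdlib helper. This is a sketch assuming Linux, which is what the Jetson runs; `current_rss_mb`, `peak_rss_mb` and `snapshot` are hypothetical helper names. On the Jetson, GPU allocations come out of the same physical RAM but may not all show up in the process RSS, so a system-wide view such as `tegrastats` is still useful for comparison.

```python
import resource
import sys
from typing import Optional


def current_rss_mb() -> Optional[float]:
    """Current resident set size of this process in MiB, read from
    /proc/self/status (Linux only). Returns None if unavailable."""
    try:
        with open("/proc/self/status") as f:
            for line in f:
                if line.startswith("VmRSS:"):
                    return int(line.split()[1]) / 1024.0  # value is in kB
    except OSError:
        pass
    return None


def peak_rss_mb() -> float:
    """Peak RSS so far in MiB. ru_maxrss is in kB on Linux, bytes on macOS."""
    peak = resource.getrusage(resource.RUSAGE_SELF).ru_maxrss
    if sys.platform == "darwin":
        return peak / (1024.0 * 1024.0)
    return peak / 1024.0


def snapshot(stage: str, log: dict) -> None:
    """Record current and peak RSS under a stage label."""
    log[stage] = {"rss_mb": current_rss_mb(), "peak_mb": peak_rss_mb()}
```

Calling `snapshot` right before `load_learner`, right after it, and after the first `predict` would give the same three data points as the screenshots, with the peak value also catching short-lived spikes.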

Such high RAM usage is a problem for me because this model is only about 10% of a larger pipeline. Also, inside the pipeline, the model’s inference time rises from the times mentioned above to 0.5-1 second per image.
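To compare the standalone and in-pipeline numbers fairly, it may help to time the first call separately from steady-state calls, since one-time setup (for example CUDA context creation, one possible explanation for the ~30 second first inference) otherwise skews the average. A minimal stdlib sketch, where `infer` is a hypothetical zero-argument callable that runs one prediction:

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency(
    infer: Callable[[], object],
    warmup: int = 3,
    iters: int = 20,
) -> Dict[str, float]:
    """Time the first (cold) call of `infer` on its own, run `warmup`
    untimed calls, then time `iters` steady-state calls. Values in seconds."""
    if iters < 1:
        raise ValueError("iters must be at least 1")

    start = time.perf_counter()
    infer()  # first call: includes any one-time setup cost
    cold = time.perf_counter() - start

    for _ in range(warmup):
        infer()  # untimed calls so caches and allocators settle

    samples = []
    for _ in range(iters):
        t0 = time.perf_counter()
        infer()
        samples.append(time.perf_counter() - t0)

    return {
        "cold_s": cold,
        "median_s": statistics.median(samples),
        "max_s": max(samples),
    }
```

Because CUDA kernels run asynchronously, `infer` should wait for its result (for example by calling `torch.cuda.synchronize()` or copying the output to the CPU) so the timings reflect the actual GPU work. Running one dummy prediction at startup would also keep the cold-call cost away from the pipeline's first real image.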

Thanks a lot in advance; any help is appreciated.