Introduction and Problem Description

In lectures 8 and 9, we discussed the problem of transferring the style from one image to another. Style transfer in the context of language is a natural follow-up question.

Let us define the goals before we think more about the problem. What does it even mean to transfer the style from one body of text to another?

I think there are primarily three ways in which one could transform the style of a given piece of text. Let’s fix the notation before listing them.

Let T_in be the given input text, and C be the corpus from which the style has to be transferred to create T_C, the output text with the style derived from C.

1. Lexical transfer

- Substitute some words in T_in with synonyms from C. The synonyms in this case don’t have to map one-to-one onto the original meaning; they can be words that simply fit the context. It is tempting to conclude that one can learn a mapping of synonyms from C to T_in. However, that boils down to a simpler version of the translation problem.

2. Grammar transfer

- Rearrange the words in T_in to comply with the grammatical structure of C. For example, if C were a set of sentences generated by Yoda, we would expect the output to follow an object-subject-verb order. Making sense, am I?

3. Semantics transfer

- Completely change the text, but preserve the meaning. I think this reduces to the problem of translation.
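To make the first two kinds concrete, here is a toy sketch. Everything in it is an assumption made for illustration: the synonym table is hand-written rather than learned from C, and the reordering function expects the clause to be split into subject, verb and object already. Semantics transfer is left out, since it amounts to translation.

```python
# Toy illustration of lexical and grammar transfer.
# The synonym table and the clause split are hypothetical, not learned from C.

def lexical_transfer(tokens, synonyms):
    """Replace each token that has an entry in `synonyms`; keep the rest."""
    return [synonyms.get(tok.lower(), tok) for tok in tokens]

def grammar_transfer_osv(subject, verb, obj):
    """Reorder a subject-verb-object clause into object-subject-verb order."""
    return [*obj, *subject, *verb]

# Hypothetical mapping towards an older English vocabulary.
synonyms = {"you": "thou", "your": "thy", "are": "art"}

print(" ".join(lexical_transfer("you are my friend".split(), synonyms)))
# thou art my friend

print(" ".join(grammar_transfer_osv(["you"], ["must", "learn"], ["patience"])))
# patience you must learn
```

The hard part in both cases is what the sketch assumes away: finding the mapping, or the parse, automatically.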

A First Attempt at Lexical Transfer

I have taken a stab at textual style transfer of the first kind described above. Recall that T_in is the input text, and C is the corpus whose style is to be transferred to T_in.

The high-level idea is as follows:

- Train a generative model G on the given corpus C. G is thus capable of generating sequences of text that (hopefully) appear to be sampled from C.

- Preprocess T_in and delete some of the words. Let the processed input be T_in’.

- Feed T_in’ to G from left to right. G will now start “filling the blanks”, with the words still present in T_in’ guiding the generated words. To borrow terminology from lectures 8 and 9, the generator G is responsible for generating the style, and the non-deleted words provide the content.
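The fill-in step can be sketched in a few lines. Note the swap: instead of an LSTM, this sketch uses a bigram count model as a stand-in for G, purely so that it runs without any dependencies. The names `train_bigram`, `fill_blanks` and the `fallback` word are hypothetical, and the toy corpus stands in for C.

```python
from collections import Counter, defaultdict

BLANK = "_"  # marker for a deleted word in T_in'

def train_bigram(corpus_tokens):
    """Count, for every word in C, which words follow it (stand-in for G)."""
    successors = defaultdict(Counter)
    for prev, nxt in zip(corpus_tokens, corpus_tokens[1:]):
        successors[prev][nxt] += 1
    return successors

def fill_blanks(masked_tokens, successors, fallback="the"):
    """Walk T_in' left to right and fill each blank from the previous output word."""
    output = []
    for tok in masked_tokens:
        if tok != BLANK:
            output.append(tok)  # kept words carry the content
            continue
        prev = output[-1] if output else None
        candidates = successors.get(prev)
        if candidates:
            # Most frequent follower of the previous word in C.
            output.append(candidates.most_common(1)[0][0])
        else:
            # Previous word never seen in C (or blank at the start).
            output.append(fallback)
    return output

corpus = "the night is dark and the night is long and the road is long".split()
G = train_bigram(corpus)
masked = "the _ is _ tonight".split()
print(" ".join(fill_blanks(masked, G)))
# the night is long tonight
```

Like any left-to-right generator, this one only looks at the words before a blank; the kept words after the blank do not influence what gets filled in.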

Experiments

For the generator G, I used a single LSTM, adapted from one of the Keras examples (with some changes to the model parameters). I trained G on two different corpora.

Input text (T_in)

“IF WINTER comes,” the poet Shelley asked, “can Spring be far behind?” For the best part of a decade the answer as far as the world economy has been concerned has been an increasingly weary “Yes it can”. Now, though, after testing the faith of the most patient souls with glimmers that came to nothing, things seem to be warming up. It looks likely that this year, for the first time since 2010, rich-world and developing economies will put on synchronised growth spurts.

(Taken from this article)

T_in’

The words that have been removed are replaced with a _.

“IF WINTER comes,” the poet Shelley asked, “can Spring be _ behind?” For the best part of a decade the _ as far as the world economy has been concerned has been an _ weary “Yes it can”. Now, though, after testing the faith of the most _ souls with _ that came to nothing, things seem to be warming up. It looks _ that this year, for the _ time since 2010, rich-world and _ economies will put on synchronised growth spurts.
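A masked text like this can be produced mechanically. The sketch below is one possible way and rests on assumptions: words are picked uniformly at random, the `fraction` and `seed` parameters are hypothetical, and tokens are split on whitespace, so a removed word takes its attached punctuation with it (unlike the hand-made example above).

```python
import random

BLANK = "_"  # same marker as in T_in'

def mask_words(text, fraction=0.2, seed=0):
    """Replace a random `fraction` of whitespace-separated tokens with BLANK."""
    tokens = text.split()
    rng = random.Random(seed)  # fixed seed so the masking is reproducible
    n_remove = int(len(tokens) * fraction)
    removed = set(rng.sample(range(len(tokens)), n_remove))
    return [BLANK if i in removed else tok for i, tok in enumerate(tokens)]

sentence = "things seem to be warming up after a long winter"
print(" ".join(mask_words(sentence, fraction=0.3, seed=1)))
```

Which words end up masked depends on the seed; the number of blanks is always `int(len(tokens) * fraction)`.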

C = Nietzsche

(G trained using works of Nietzsche)

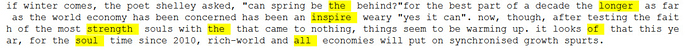

##### T_Nietzsche

C = Shakespeare

(G trained using works of Shakespeare)

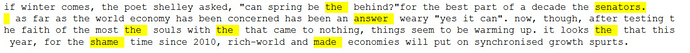

##### T_Shakespeare

code.

Todo

I was expecting better results. Some of the top items on my to-do list include:

- Tweaking the model.

- Being smarter about the words to be removed from T_in. Perhaps only those words should be removed that have a context that’s likely to be present in C?

- Can the GAN framework help here?

- Exploring transfer of the second kind (using parts of speech, perhaps).
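One very simple reading of the second item, offered only as a sketch: treat a word as removable when the word just before it occurs in C, so that the generator starts from a context it has seen. The function name and the criterion are assumptions, and the vocabulary here is a toy stand-in for the vocabulary of C.

```python
def removable_positions(tokens, corpus_vocab):
    """Indices of words whose left neighbour occurs in C (hypothetical criterion)."""
    return [
        i for i in range(1, len(tokens))
        if tokens[i - 1].lower() in corpus_vocab
    ]

corpus_vocab = {"the", "is", "of"}  # toy stand-in for the vocabulary of C
tokens = "the economy is warming up".split()
print(removable_positions(tokens, corpus_vocab))
# [1, 3]
```

A longer context window, or the generator's own probability of the context, would be natural refinements of the same idea.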

Please share any feedback on the approach or pointers to any related work.

If you are attending the course in person, are interested in an NLP project, and would be interested in extending this work, please feel free to ping me; we can work on it together.

Thanks for reading.
