Hi,

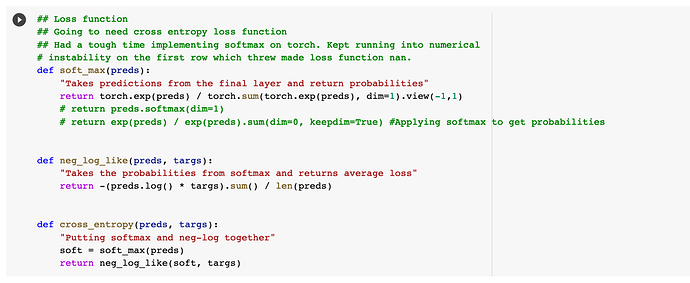

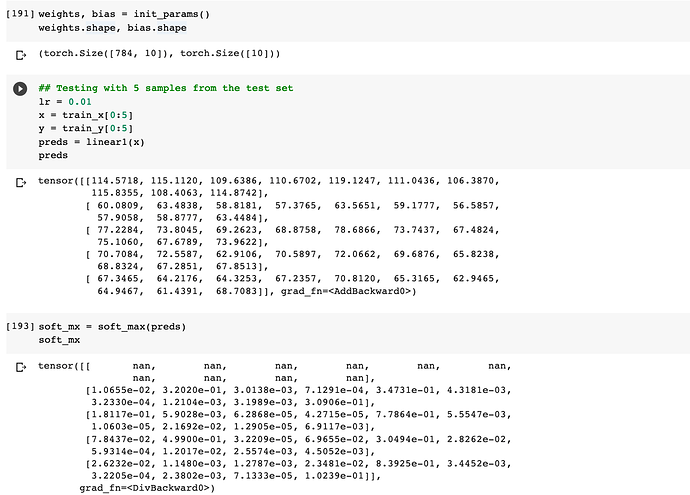

I am implementing softmax directly from its formula, and I consistently get nan in the first row. This makes the loss nan as well. I tried adding a small number to the numerator, which did not help. Using nn.softmax resolves the problem, and the loss value is then a scalar as expected. The formula implementation works fine when used separately with random numbers. I wonder if it has to do with numerical instability. I have attached a couple of images to show the code.

Thank you for the help!

I imagine it's because e^115 is very, very big. Assuming the tensor is float32, that overflows to inf, and inf / inf gives nan.

Very true.

Hey ishan,

You just found out why it’s common to subtract the maximum before doing the exp: to prevent numerical explosion. Just add a first line in softmax saying something like `preds -= preds.max()` and you should be good to go.
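Here is a minimal sketch of the difference, written in NumPy rather than PyTorch so it runs on its own. The code in the screenshots is not reproduced here, so the function names `naive_softmax` and `stable_softmax` and the example logits are assumptions for illustration only; float32 is assumed because it is the usual default for tensors.

```python
import numpy as np

def naive_softmax(preds):
    # Exponentiate the raw logits: in float32, exp overflows to inf
    # once a logit is larger than roughly 88.
    exps = np.exp(preds)
    return exps / exps.sum(axis=-1, keepdims=True)

def stable_softmax(preds):
    # Subtract each row's maximum first, so the largest exponent is exp(0) = 1.
    # Softmax is unchanged by subtracting a constant from every logit in a row.
    shifted = preds - preds.max(axis=-1, keepdims=True)
    exps = np.exp(shifted)
    return exps / exps.sum(axis=-1, keepdims=True)

# Hypothetical logits; the first row contains a value of 115 like the one above.
preds = np.array([[115.0, 2.0, -3.0],
                  [1.0, 2.0, 3.0]], dtype=np.float32)

# Silence the overflow / invalid-value warnings the naive version triggers.
with np.errstate(over="ignore", invalid="ignore"):
    naive = naive_softmax(preds)

stable = stable_softmax(preds)

print(naive)   # first row contains nan (inf / inf)
print(stable)  # every row is finite and sums to 1
```

This version subtracts the per-row maximum and does not modify `preds` in place. Subtracting the global `preds.max()` as suggested above also prevents the overflow; the per-row shift just keeps rows with much smaller logits from underflowing.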

That makes sense. Thank you very much!

@orendar
