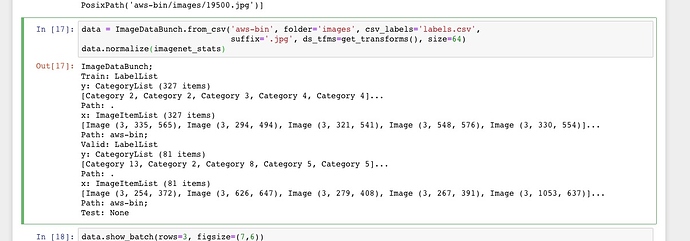

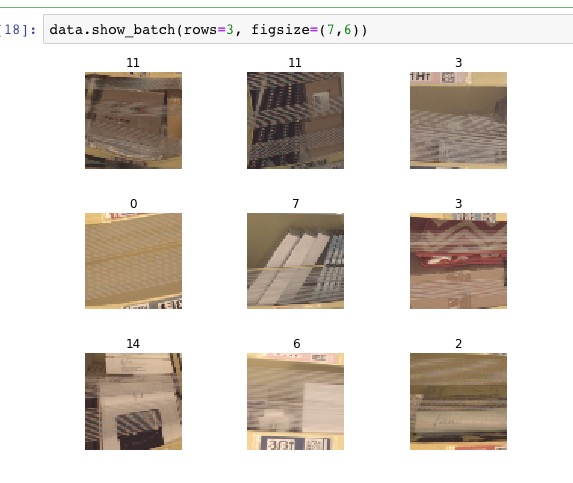

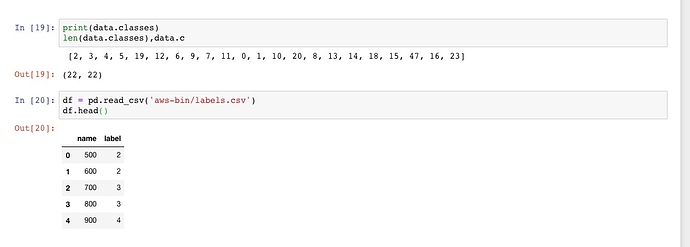

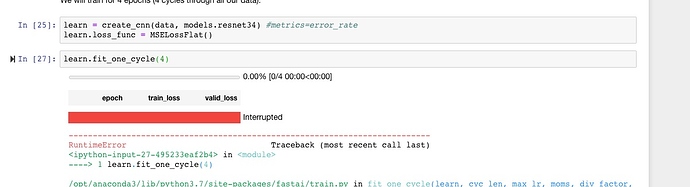

I was previously using MSELossFlat() as the loss for a learner that predicts a scalar (basically, regression from an image). I updated my fastai v1 library today and had to replace ImageDataset with ImageClassificationDataset, but now I get a size-mismatch error when I try to find my learning rate. Is there a different Image*Dataset I should be using with the latest version of the library?

    LR Finder complete, type {learner_name}.recorder.plot() to see the graph.
    ---------------------------------------------------------------------------
    RuntimeError                              Traceback (most recent call last)
    <ipython-input-24-e10d9f8adb8f> in <module>()
    ----> 1 learn2.lr_find(start_lr=1e-5, end_lr=100)
          2 learn2.recorder.plot()

    /app/fastai/fastai/train.py in lr_find(learn, start_lr, end_lr, num_it, stop_div, **kwargs)
         28     cb = LRFinder(learn, start_lr, end_lr, num_it, stop_div)
         29     a = int(np.ceil(num_it/len(learn.data.train_dl)))
    ---> 30     learn.fit(a, start_lr, callbacks=[cb], **kwargs)
         31
         32 def to_fp16(learn:Learner, loss_scale:float=512., flat_master:bool=False)->Learner:

    /app/fastai/fastai/basic_train.py in fit(self, epochs, lr, wd, callbacks)
        160         callbacks = [cb(self) for cb in self.callback_fns] + listify(callbacks)
        161         fit(epochs, self.model, self.loss_func, opt=self.opt, data=self.data, metrics=self.metrics,
    --> 162             callbacks=self.callbacks+callbacks)
        163
        164     def create_opt(self, lr:Floats, wd:Floats=0.)->None:

    /app/fastai/fastai/basic_train.py in fit(epochs, model, loss_func, opt, data, callbacks, metrics)
         92     except Exception as e:
         93         exception = e
    ---> 94         raise e
         95     finally: cb_handler.on_train_end(exception)
         96

    /app/fastai/fastai/basic_train.py in fit(epochs, model, loss_func, opt, data, callbacks, metrics)
         82             for xb,yb in progress_bar(data.train_dl, parent=pbar):
         83                 xb, yb = cb_handler.on_batch_begin(xb, yb)
    ---> 84                 loss = loss_batch(model, xb, yb, loss_func, opt, cb_handler)
         85                 if cb_handler.on_batch_end(loss): break
         86

    /app/fastai/fastai/basic_train.py in loss_batch(model, xb, yb, loss_func, opt, cb_handler)
         20
         21     if not loss_func: return to_detach(out), yb[0].detach()
    ---> 22     loss = loss_func(out, *yb)
         23
         24     if opt is not None:

    /usr/local/lib/python3.6/dist-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)
        475             result = self._slow_forward(*input, **kwargs)
        476         else:
    --> 477             result = self.forward(*input, **kwargs)
        478         for hook in self._forward_hooks.values():
        479             hook_result = hook(self, input, result)

    /app/fastai/fastai/layers.py in forward(self, input, target)
        101     "Same as `nn.MSELoss`, but flattens input and target."
        102     def forward(self, input:Tensor, target:Tensor) -> Rank0Tensor:
    --> 103         return super().forward(input.view(-1), target.view(-1))
        104
        105 def simple_cnn(actns:Collection[int], kernel_szs:Collection[int]=None,

    /usr/local/lib/python3.6/dist-packages/torch/nn/modules/loss.py in forward(self, input, target)
        422
        423     def forward(self, input, target):
    --> 424         return F.mse_loss(input, target, reduction=self.reduction)
        425
        426

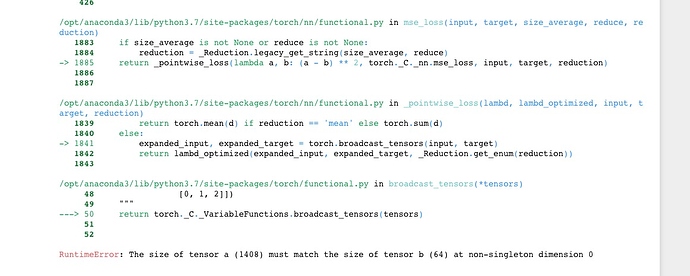

    /usr/local/lib/python3.6/dist-packages/torch/nn/functional.py in mse_loss(input, target, size_average, reduce, reduction)
       1830     if size_average is not None or reduce is not None:
       1831         reduction = _Reduction.legacy_get_string(size_average, reduce)
    -> 1832     return _pointwise_loss(lambda a, b: (a - b) ** 2, torch._C._nn.mse_loss, input, target, reduction)
       1833
       1834

    /usr/local/lib/python3.6/dist-packages/torch/nn/functional.py in _pointwise_loss(lambd, lambd_optimized, input, target, reduction)
       1786         return torch.mean(d) if reduction == 'mean' else torch.sum(d)
       1787     else:
    -> 1788         expanded_input, expanded_target = torch.broadcast_tensors(input, target)
       1789         return lambd_optimized(expanded_input, expanded_target, _Reduction.get_enum(reduction))
       1790

    /usr/local/lib/python3.6/dist-packages/torch/functional.py in broadcast_tensors(*tensors)
         48                 [0, 1, 2]])
         49     """
    ---> 50     return torch._C._VariableFunctions.broadcast_tensors(tensors)
         51
         52

    RuntimeError: The size of tensor a (58880) must match the size of tensor b (128) at non-singleton dimension 0
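
The two sizes in the error message can be decoded with a little arithmetic. The layers.py frame in the traceback shows MSELossFlat calling view(-1) on both tensors, so 128 is presumably the flattened target for one batch (bs=128) and 58880 the flattened model output. A minimal sketch under that assumption (the helper name `outputs_per_sample` is made up for illustration):

```python
def outputs_per_sample(flat_pred_len: int, flat_target_len: int) -> int:
    """Return how many predicted values there are per target value.

    Assumes both tensors were flattened with view(-1), so each length is
    batch_size * values_per_sample.
    """
    if flat_pred_len % flat_target_len != 0:
        raise ValueError("prediction length is not a multiple of target length")
    return flat_pred_len // flat_target_len


# Sizes copied from the RuntimeError in the traceback above.
print(outputs_per_sample(58880, 128))  # 460
```

If this reading is right, the model is emitting 460 values per image rather than 1. That would point at the width of the final layer, possibly derived from the labels by the dataset class, rather than at the loss function itself. This is a guess; the traceback alone does not confirm it.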

The learner:

    from fastai import *
    from fastai.vision import *
    import torchvision.models as tvmodels

    class ImageScalarDataset(ImageClassificationDataset):
        def __init__(self, df:DataFrame, path_column:str='file_path', dependent_variable:str=None):
            # The superclass does nice things for us like tensorizing the numpy
            # input
            super().__init__(df[path_column], np.array(df[dependent_variable], dtype=np.float32))

            # Old FastAI uses loss_fn, new FastAI uses loss_func
            self.loss_func = layers.MSELossFlat()
            self.loss_fn = self.loss_func

            # We have only one "class" (i.e., the single output scalar)
            self.classes = [0]

        def __len__(self)->int:
            return len(self.y)

        def __getitem__(self, i):
            # return x, y | where x is an image, and y is the scalar
            return open_image(self.x[i]), self.y[i]

    data64 = ImageDataBunch.create(dat_train, dat_valid, dat_test,
                                   ds_tfms=get_transforms(),#do_flip=False),
                                   bs=128,
                                   size=64)

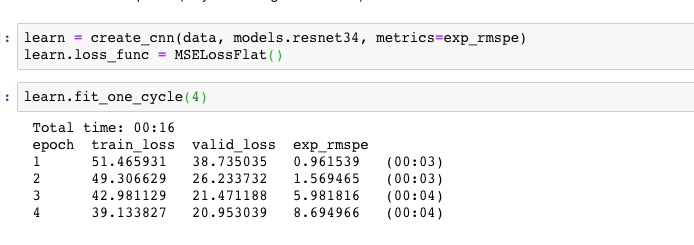

    learn2 = create_cnn(data64,
                        tvmodels.densenet121,
                        pretrained=True,
                        metrics=[exp_rmspe],
                        ps=0.5,
                        callback_fns=ShowGraph)
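
One way to narrow this down is to compare the shape of the model output with the shape of the targets before calling lr_find. The sketch below is not fastai code: it uses plain PyTorch with stand-in `nn.Linear` heads (the feature width of 16 and the 460-output head are made-up values for illustration) and a hypothetical `flat_mse` helper that flattens both tensors the way the traceback shows MSELossFlat doing.

```python
import torch
from torch import nn
import torch.nn.functional as F


def flat_mse(pred: torch.Tensor, target: torch.Tensor) -> torch.Tensor:
    """Flatten both tensors with view(-1) before MSE, as in the traceback."""
    return F.mse_loss(pred.view(-1), target.view(-1))


batch_size = 128                        # bs=128 from the post
features = torch.randn(batch_size, 16)  # stand-in for pooled CNN features
target = torch.randn(batch_size)        # one scalar label per image

wide_head = nn.Linear(16, 460)   # hypothetical head with 460 outputs per image
scalar_head = nn.Linear(16, 1)   # head with a single regression output


def try_loss(head: nn.Module):
    """Return the prediction shape and either the loss value or the error text."""
    pred = head(features)
    try:
        return tuple(pred.shape), flat_mse(pred, target).item()
    except RuntimeError as err:
        return tuple(pred.shape), str(err)


for name, head in [("wide", wide_head), ("scalar", scalar_head)]:
    shape, result = try_loss(head)
    print(name, shape, result)
```

The wide head fails because 128 * 460 flattened predictions cannot be matched against 128 targets, while the single-output head produces a loss. With the real learner, the same comparison could presumably be made on one batch from learn2.data.train_dl passed through learn2.model.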