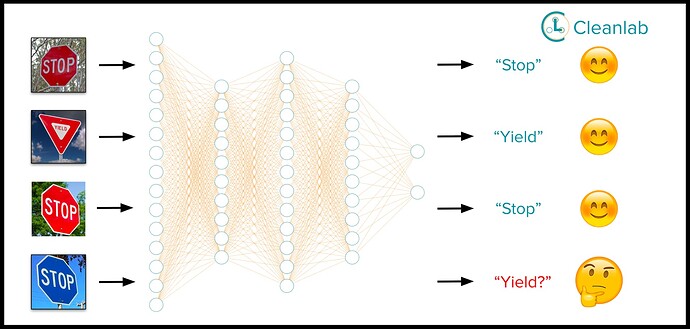

Reliability is the Achilles’ heel of modern ML: predictions are often wrong for out-of-distribution (OOD) inputs that do not resemble the training data. While researchers have proposed many complex OOD detection algorithms for specific data types, our latest research shows that straightforward methods work just as effectively and apply directly to any type of data for which either a feature embedding or a trained classifier is available.
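To give a feel for what "straightforward" can mean here, below is a minimal sketch of two generic baselines: scoring an input by its average distance to its nearest training embeddings, and scoring it by the entropy of a classifier's predicted probabilities. This is an illustration under assumptions, not necessarily the exact scoring used in the paper or in the cleanlab library; the function names, the choice of `k=5`, and the toy data are all made up for this example.

```python
import math
import random


def knn_ood_scores(train_embeddings, query_embeddings, k=5):
    """Average Euclidean distance from each query to its k nearest
    training embeddings. Larger score = looks more out-of-distribution.
    (Hypothetical helper for illustration, brute-force for clarity.)"""
    scores = []
    for q in query_embeddings:
        dists = sorted(math.dist(q, t) for t in train_embeddings)
        scores.append(sum(dists[:k]) / k)
    return scores


def entropy_ood_scores(pred_probs):
    """Shannon entropy of each predicted class-probability vector.
    Larger score = classifier is less certain about the input.
    (Hypothetical helper for illustration.)"""
    return [-sum(p * math.log(p) for p in probs if p > 0) for probs in pred_probs]


random.seed(0)

# Toy stand-ins for embeddings: training data clustered around the origin,
# in-distribution queries drawn the same way, OOD queries shifted far away.
dim = 8
train = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(200)]
in_dist = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(5)]
ood = [[random.gauss(6, 1) for _ in range(dim)] for _ in range(5)]

in_scores = knn_ood_scores(train, in_dist)
ood_scores = knn_ood_scores(train, ood)
print("in-distribution kNN scores:", [round(s, 2) for s in in_scores])
print("OOD kNN scores:            ", [round(s, 2) for s in ood_scores])

# Toy stand-ins for classifier outputs over three classes.
confident = [0.97, 0.02, 0.01]
uncertain = [0.34, 0.33, 0.33]
ent = entropy_ood_scores([confident, uncertain])
print("entropy scores:", [round(e, 3) for e in ent])
```

In practice the embeddings would come from a pretrained model, the probabilities from your trained classifier, and the nearest-neighbor search from an optimized library rather than the brute-force loop used here.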

We’ve open-sourced our methods with easy tutorials and published lots of content describing them and benchmarking their performance for detecting OOD images. Hope this helps make your ML more trustworthy!

Blog: Out-of-Distribution Detection via Embeddings or Predictions

5-minute tutorial code: Detect Outliers with Cleanlab and PyTorch Image Models (timm)

Paper: [2207.03061] Back to the Basics: Revisiting Out-of-Distribution Detection Baselines

GitHub: cleanlab/cleanlab (the standard data-centric AI package for data quality and machine learning with messy, real-world data and labels)