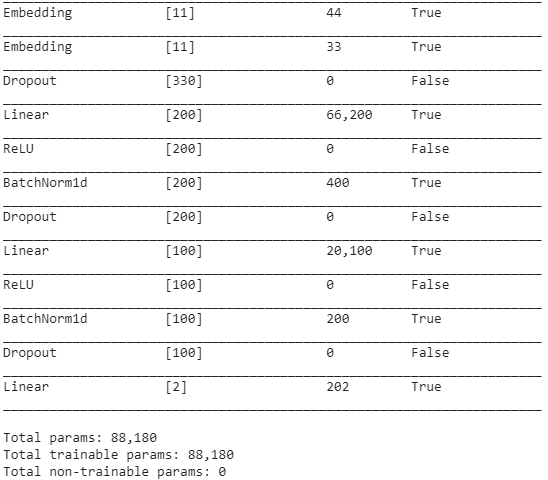

Hello all. Below is a snap of my model (TabularModel) summary:

I am not sure why the Dropout layers, and the ReLU activations as well, show up as False. The code I am using to create the `tabular_learner` is:

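```python
from functools import partial
from fastai.tabular import *  # fastai v1: tabular_learner, accuracy
from fastai.callbacks import EarlyStoppingCallback

# databunch and embedding_dict are defined earlier in my notebook
learn = tabular_learner(databunch, layers=[200, 100], emb_szs=embedding_dict,
                        metrics=accuracy, ps=0.1, emb_drop=0.1,
                        callback_fns=[partial(EarlyStoppingCallback, monitor='accuracy',
                                              min_delta=0.01, patience=3)]).to_fp16()
learn.fit_one_cycle(100, slice(1e-02))
```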

Am I missing something here?