Thanks for the response. I mounted Google Drive as you advised so that wget would save the files there, but the files are still being downloaded into the Google Colab storage, which doesn’t have enough space.

I then copied these files to Google Drive myself, which took a long time because of my limited bandwidth. But now the fastai code raises the following error:

---------------------------------------------------------------------------

OSError Traceback (most recent call last)

<ipython-input-7-a512acdad9ad> in <module>()

----> 1 data = (src.transform(tfms, size=128)

2 .databunch().normalize(imagenet_stats))

/usr/local/lib/python3.6/dist-packages/fastai/data_block.py in transform(self, tfms, **kwargs)

488 if not tfms: tfms=(None,None)

489 assert is_listy(tfms) and len(tfms) == 2, "Please pass a list of two lists of transforms (train and valid)."

--> 490 self.train.transform(tfms[0], **kwargs)

491 self.valid.transform(tfms[1], **kwargs)

492 if self.test: self.test.transform(tfms[1], **kwargs)

/usr/local/lib/python3.6/dist-packages/fastai/data_block.py in transform(self, tfms, tfm_y, **kwargs)

704 def transform(self, tfms:TfmList, tfm_y:bool=None, **kwargs):

705 "Set the `tfms` and `tfm_y` value to be applied to the inputs and targets."

--> 706 _check_kwargs(self.x, tfms, **kwargs)

707 if tfm_y is None: tfm_y = self.tfm_y

708 if tfm_y: _check_kwargs(self.y, tfms, **kwargs)

/usr/local/lib/python3.6/dist-packages/fastai/data_block.py in _check_kwargs(ds, tfms, **kwargs)

575 if (tfms is None or len(tfms) == 0) and len(kwargs) == 0: return

576 if len(ds.items) >= 1:

--> 577 x = ds[0]

578 try: x.apply_tfms(tfms, **kwargs)

579 except Exception as e:

/usr/local/lib/python3.6/dist-packages/fastai/data_block.py in __getitem__(self, idxs)

107 def __getitem__(self,idxs:int)->Any:

108 idxs = try_int(idxs)

--> 109 if isinstance(idxs, Integral): return self.get(idxs)

110 else: return self.new(self.items[idxs], inner_df=index_row(self.inner_df, idxs))

111

/usr/local/lib/python3.6/dist-packages/fastai/vision/data.py in get(self, i)

269 def get(self, i):

270 fn = super().get(i)

--> 271 res = self.open(fn)

272 self.sizes[i] = res.size

273 return res

/usr/local/lib/python3.6/dist-packages/fastai/vision/data.py in open(self, fn)

265 def open(self, fn):

266 "Open image in `fn`, subclass and overwrite for custom behavior."

--> 267 return open_image(fn, convert_mode=self.convert_mode, after_open=self.after_open)

268

269 def get(self, i):

/usr/local/lib/python3.6/dist-packages/fastai/vision/image.py in open_image(fn, div, convert_mode, cls, after_open)

391 with warnings.catch_warnings():

392 warnings.simplefilter("ignore", UserWarning) # EXIF warning from TiffPlugin

--> 393 x = PIL.Image.open(fn).convert(convert_mode)

394 if after_open: x = after_open(x)

395 x = pil2tensor(x,np.float32)

/usr/local/lib/python3.6/dist-packages/PIL/Image.py in open(fp, mode)

2408

2409 if filename:

-> 2410 fp = builtins.open(filename, "rb")

2411 exclusive_fp = True

2412

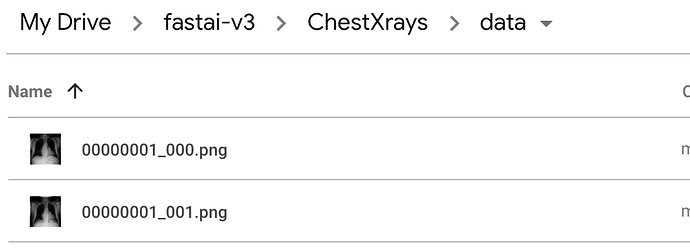

OSError: [Errno 5] Input/output error: '/content/gdrive/My Drive/fastai-v3/ChestXrays/data/00000001_000.png'

When I check Google Drive, the image files exist there, but the fastai library can’t read them.
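One way to narrow this down would be to check whether plain Python can read the file at all, independent of fastai and PIL. This is only a minimal sketch, assuming the Drive is already mounted at `/content/gdrive`; `probe_file` is a hypothetical helper name, not part of fastai or Colab.

```python
import os

def probe_file(path, nbytes=16):
    """Return (exists, readable, detail) for `path` using only built-in file I/O."""
    # os.path.exists also returns False if the underlying stat call fails
    if not os.path.exists(path):
        return (False, False, "os.path.exists returned False")
    try:
        # Read a few bytes to confirm the file can actually be opened and read
        with open(path, "rb") as f:
            f.read(nbytes)
    except OSError as e:
        return (True, False, f"{type(e).__name__}: {e}")
    return (True, True, "ok")
```

Calling `probe_file('/content/gdrive/My Drive/fastai-v3/ChestXrays/data/00000001_000.png')` would show whether the same `OSError` appears outside fastai, which would point to the Drive mount rather than the library.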

The following code runs fine.

drive.mount('/content/gdrive', force_remount=True)

root_dir = "/content/gdrive/My Drive/fastai-v3/ChestXrays/"

tfms = get_transforms(max_lighting=0.1, max_zoom=1.05, max_warp=0.)

np.random.seed(42)

src = (ImageList.from_csv(root_dir, 'images_metadata.csv', folder='data')

.split_by_rand_pct(0.2)

.label_from_df(label_delim='|'))

The error is raised by the following command:

data = (src.transform(tfms, size=128)

.databunch().normalize(imagenet_stats))

I am super stuck. Any insights into what I am doing wrong?

Thanks.