Hi all! Just getting started with fast.ai and have enjoyed it tremendously so far.

One of the first challenges when trying to train my own model was loading a training dataset onto my Google Compute instance. I was trying to download a large (5GB+) dataset from Kaggle, but Kaggle does not allow direct URLs to files (e.g. one I could pass to download_data(url)).

One option is to use scp to transfer local files to remote (and vice versa). Example command for newbies like me:

gcloud compute scp [local_path] jupyter@[instancename]:~/[destination_in_home_folder]

The problem is that my upload speed is slow. It also feels like a waste of bandwidth and disk space to download to my computer just so I can upload to my instance.

Luckily, Kaggle has an official API (which replaced the unofficial kaggle-cli tool) that you can use to download data directly from your instance shell. There are other posts touching on the API, but I didn’t see any about downloading datasets to a cloud instance. It seemed like a common enough situation to be worth posting a quick guide in case it saves people trouble.

Using these steps, I downloaded this 5GB dataset to my instance in <5 minutes, where it would have taken me >2hrs to download then re-upload (almost made up for the time it took me to figure this out). Hope it’s helpful!

Use Kaggle API to Download Data Directly to Cloud Instance

-

Install Kaggle: SSH into your instance, and

pip install kaggle(instructions here, but it should just work) -

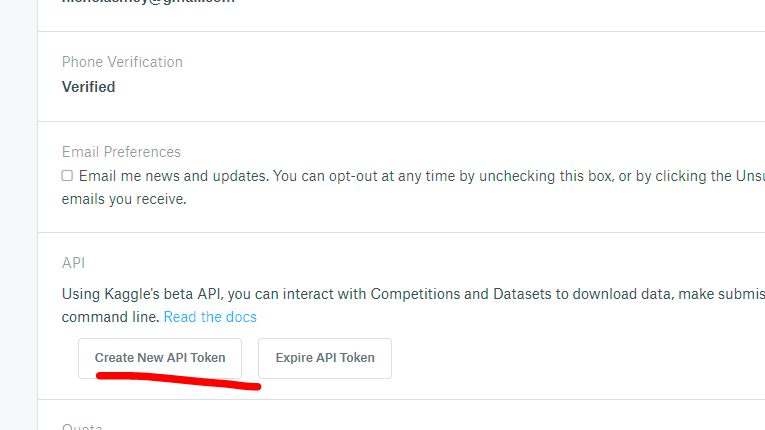

Get API Credentials All API requests need credentials to identify yourself. Just go to https://www.kaggle.com/[kaggle_username]/account, scroll down and click “Create API Token”. It will download kaggle.json with your username & authkey.

- Load Credentials on Instance: You need to put kaggle.json into /home/jupyter/.kaggle. You can use scp for this (see above). That’s it. Now you can use all the Kaggle API commands.

- Download your dataset: You can just use this command:

kaggle datasets download -d [dataset_identifier] -p [your_destination_path]

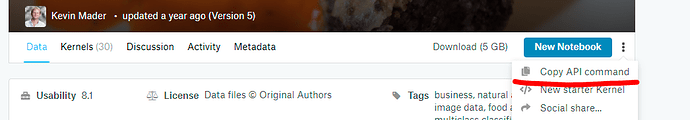

To get dataset_identifier, just browse to the dataset you want on the Kaggle website. There’s actually a button that helpfully gives you the API command directly.
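In case it helps, here is a rough sketch of the "Load Credentials" step as a small shell function you could run on the instance once kaggle.json has been copied over. The function name install_kaggle_token and its arguments are made up for illustration; the chmod is there because the Kaggle CLI prints a warning when the key file is readable by other users.

```shell
#!/usr/bin/env bash
# Sketch only: move an uploaded kaggle.json into the Kaggle config directory.
# install_kaggle_token is a hypothetical helper, not part of the Kaggle CLI.

install_kaggle_token() {
    local src="$1"                        # path to the uploaded kaggle.json
    local dest_dir="${2:-$HOME/.kaggle}"  # defaults to ~/.kaggle

    if [ ! -f "$src" ]; then
        echo "token file not found: $src" >&2
        return 1
    fi

    mkdir -p "$dest_dir"
    cp "$src" "$dest_dir/kaggle.json"
    # Owner-only permissions, so the CLI does not warn about a readable key.
    chmod 600 "$dest_dir/kaggle.json"
}
```

For example, if you scp'd the token into your home folder, `install_kaggle_token ~/kaggle.json` should leave it at ~/.kaggle/kaggle.json, which is /home/jupyter/.kaggle on the instance described above.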

Take a look through the docs, as the API has several useful features (example).
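One feature I found handy is the --unzip flag on the download command, which extracts the archive after downloading. Below is a minimal sketch that wraps the download step with it. The wrapper name fetch_kaggle_dataset is my own invention, and it assumes the credentials are already in place.

```shell
#!/usr/bin/env bash
# Sketch only: download a Kaggle dataset into a folder and unzip it.
# fetch_kaggle_dataset is a hypothetical wrapper around the real kaggle CLI.

fetch_kaggle_dataset() {
    local dataset="$1"   # the dataset_identifier shown on the dataset page
    local dest="$2"      # destination folder on the instance

    if [ -z "$dataset" ] || [ -z "$dest" ]; then
        echo "usage: fetch_kaggle_dataset <dataset_identifier> <destination_path>" >&2
        return 2
    fi
    if ! command -v kaggle >/dev/null 2>&1; then
        echo "kaggle CLI not found; run 'pip install kaggle' first" >&2
        return 1
    fi

    mkdir -p "$dest"
    # Same command as in the steps above, plus --unzip to extract the archive.
    kaggle datasets download -d "$dataset" -p "$dest" --unzip
}
```

Usage would look like `fetch_kaggle_dataset [dataset_identifier] ~/data`, with the identifier copied from the dataset page.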