Hey folks,

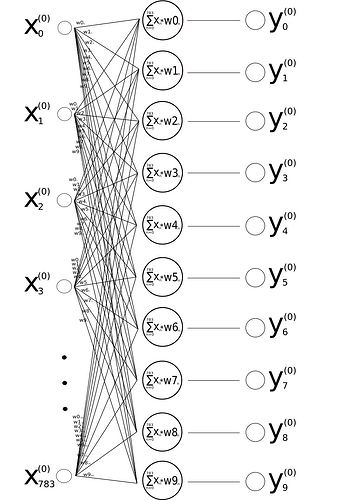

Assume we’d train a neural network like this …

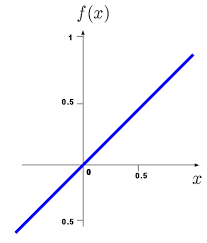

that gets trained doing a MNIST classification task. Assume the neurons are using linear actiavtions without any hidden layers just like in the above image.

Now my question: If we’d have a linear model like this and the first output vector for y would be

(0, 0, 0, 7, 0, 0, 0, 0, 0, 0 )

If we’d sum that vector up it would be 7. But if the target for training was 7, too but like this

(0, 0, 0, 0, 0, 0, 0, 7, 0, 0)

then the sums would be equal (7=7) and the loss would be zero although the first vector would have placed that value in the wrong position.

So to calculate the loss would we first sum up and then take the difference? Or first take the difference and then sum it up? If we’d take the difference first in this case it would be

(0,0,0,0,7,0,0,0,-7,0,0) Σ = 0

so suming it up afterwards would result in zero loss, too … That seems to be very conflicting … how does a MLP not confuse these calculations randomly?

Can someone explain to me how a linear neural network operates in that sense? From the perspective of loss calculation?

Can a linear neural network have negative values for the activations of its output neurons or its weights, too? In that case I assume it would be highly unlike to have a 7 in a position of a 4, because some negative weights will always drag down the first traget numbers calculation? But I’m not 100% sure about all of the above considerations … Can someone explain this?