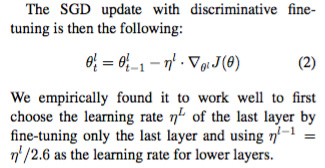

In the course we pass an array of learning rates to fine tune layers of different levels, but in Howard and Ruder (2018) it says

Is this newly employed method preferable to the former? Also, \eta^{l} is the learning rate we found by using lr_find() right?