Do you mean to ask if we still need a function that does just one notebook? Yes, definitely.

I made a new mem-utils branch with the starter code. (new code is in fastai/utils/mem.py)

Very good start. Just tested it on 2 different servers…

Now it also reports the memory available for the smaller dedicated graphics card (eg a 710) which is not a good candidate for running CUDA.

Could fastai.version include the date, eg 1.0.14.dev20181025 ?

@sgugger Any comments on this?

@sgugger, both XXXs are where the function is called on a Python file, not an ipynb, which I don’t think exists anymore, does it? If it does, can you update the example to reflect that? Thanks.

.dev0 just means it’s not a release, i.e. not reliable.

The date would be useless because 20181025 in one part of the world will not be 20181025 in another, so it’s not a good reference point. If you need to rely on an exact time stamp, you can always use git tools to see when the last commit was made in the fastai repo of your checkout.

Unless you meant 1.0.14.20181025 (no dev in your example), then your question is of a different nature.

Probably need to provide a way to exclude certain cards.

One way would be to tap into the existing CUDA_VISIBLE_DEVICES="1,3" env var that pytorch already respects. That way you can exclude the devices you don’t want to be reported, and it’ll work in both fastai and pytorch (my code needs to be changed to take it into account).
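For illustration, here is a minimal sketch of how a reporting function could honor that variable. The helper name `visible_gpu_ids` and its `total_gpus` argument are hypothetical and not part of fastai or pytorch; it only handles integer indices (CUDA also accepts device UUIDs, which are ignored here).

```python
import os

def visible_gpu_ids(total_gpus, env=None):
    """Hypothetical helper: physical GPU indices left visible by CUDA_VISIBLE_DEVICES.

    total_gpus is the number of cards physically installed.
    env defaults to os.environ; pass a dict to try other settings.
    """
    env = os.environ if env is None else env
    raw = env.get("CUDA_VISIBLE_DEVICES")
    if raw is None:
        # Variable not set: every card is visible.
        return list(range(total_gpus))
    ids = []
    for token in raw.split(","):
        token = token.strip()
        # CUDA only exposes the devices listed before the first invalid entry.
        if not token.isdigit() or int(token) >= total_gpus:
            break
        ids.append(int(token))
    return ids

# Example: 4 cards installed, only cards 1 and 3 should be reported.
print(visible_gpu_ids(4, {"CUDA_VISIBLE_DEVICES": "1,3"}))
```

The variable has to be set before CUDA is initialized in the process, so in practice it is exported in the shell or set at the very top of the script.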

Oh yup some of those are outdated. Good catch. I’ll take care of updating the gen_doc stuff!

(CC: @sgugger)

I have never used those functions or seen them before.

Cannot get Wideresnet to work even with pretrained=False:

learn = ConvLearner(data, models.wrn_22, metrics=error_rate, pretrained=False)

The order of problems:

- Corrected wrn_22 to wrn_22(pretrained=False) because the new fastai version requires this argument.

- Corrected num_features so that it returns 0 when it cannot find the num_features attribute. However, that leads to problems later in layers, line 33: “layers.append(nn.Linear(n_in, n_out))”

What am I missing here?

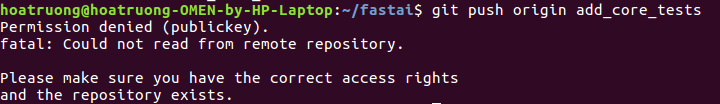

Can someone help me with a git push problem? I’m trying to write some tests for fastai core, but when I push I get the error Permission denied (publickey).

Thank you in advance

@sgugger Hi,

After this commit I’m getting this error after learn.fit(1, 1e-2):

    /media/ssd32gb/Arquivos/MachineLearning/fastai-repo/fastai/basic_train.py in on_train_begin(self, pbar, metrics_names, **kwargs)
        214 self.pbar = pbar
        215 self.names = ['epoch', 'train_loss', 'valid_loss'] + metrics_names
    --> 216 self.pbar.write(' '.join(self.names), table=True)
        217 self.losses,self.val_losses,self.lrs,self.moms,self.metrics,self.nb_batches = [],[],[],[],[],[]
        218

    TypeError: write() got an unexpected keyword argument 'table'

@dhoa if you’re wanting to create a PR, try using hub

You can’t push directly to our repo - if you want to save your work to github, you’ll need to make a fork.

Found a weird issue trying to use download_images in the course-v3/nbs/dl1/download_images notebook. It fails silently most of the time and occasionally throws a BrokenProcessPool error when trying to download images. I found a workaround: if you first run the core function it calls, download_url, directly, download_images starts working again.

My guess is something to do with threading and ProcessPoolExecutor not being able to ‘touch’ download_url, or something. I don’t know how to fix that right now, so I opened an issue with a gist to replicate the bug and the workaround.

I just git pulled the fastai & course-v3 repos and re-did it to make sure.

Resize-Issue?

If I use DataBunch.fromsomething(), the size=xx parameter is completely ignored unless I also specify transformations. So how would I get simple resizing of my images without other transforms?

This may be by design (http://docs.fast.ai/vision.image.html#Be-smart-and-efficient) but it is very unintuitive as an API. If I can pass a size kwarg to the DataBunch method, it should not depend on the user also specifying some transformations, I think. Suggestion: if “size” is present and ds_tfms is not, this should automatically generate a resize transform. What do you think?

PS: my workaround currently looks like this. Is there a better way of simply resizing?!

ds_tfms=([rotate(degrees=0, p=0.0)],[rotate(degrees=0, p=0.0)]), size=64

Is it OK if I add an assert to show_batch to check that batch_size >= rows**2? I have just run into this problem.

Like below:

    def show_batch(self:DataBunch, rows:int=None, figsize:Tuple[int,int]=(12,15), is_train:bool=True)->None:
        assert self.train_dl.dl.batch_size >= rows**2 if is_train else self.valid_dl.dl.batch_size >= rows**2
        show_image_batch(self.train_dl if is_train else self.valid_dl, self.classes,
            denorm=getattr(self,'denorm',None), figsize=figsize, rows=rows)

Careful, wrn_22 is not supposed to be used in a ConvLearner. It’s our implementation for training on CIFAR-10, not a pretrained model.

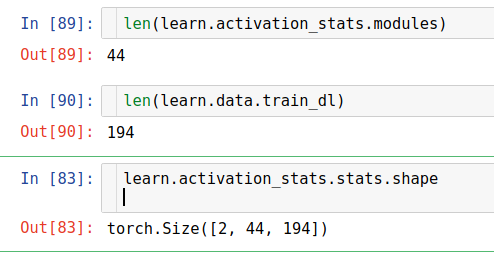

In docs.fast.ai / callbacks.hooks, I think the axis order given for learn.activation_stats.stats.shape is wrong.

It says:

The saved stats is a FloatTensor of shape (2,num_batches,num_modules). The first axis is (mean,stdev).

But it should be (2,num_modules,num_batches), right?
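To make the two orderings concrete, here is a small pure-Python sketch. It assumes, without confirmation from the fastai source in this thread, that one (mean, stdev) pair is recorded per hooked module on every batch and that the axes are then reversed. The names `stack_stats` and `shape_of` are hypothetical.

```python
def stack_stats(per_batch):
    """per_batch[b][m] is a (mean, stdev) pair for module m on batch b.

    Returns nested lists indexed as [stat][module][batch],
    i.e. the axes of the recorded data in reverse order.
    """
    num_batches = len(per_batch)
    num_modules = len(per_batch[0]) if per_batch else 0
    return [[[per_batch[b][m][s] for b in range(num_batches)]
             for m in range(num_modules)]
            for s in range(2)]

def shape_of(nested):
    """Shape of a regular nested list, read from the first element at each level."""
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0] if nested else None
    return tuple(dims)

# 5 batches, 3 hooked modules; values are made up so each entry is identifiable.
recorded = [[(b * 10 + m, 0.5) for m in range(3)] for b in range(5)]
stats = stack_stats(recorded)
print(shape_of(stats))  # (2, num_modules, num_batches)
```

Under that assumption, stats[0][m] is the sequence of means for module m across batches, which is the layout you would plot per layer.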