You’re right, the training process works almost flawlessly if we simply return a tuple. The only minor issue is that Recorder calls find_bs on the tuple when it accumulates validation losses, but we can patch find_bs directly for that, so let’s forget about it.

The problems start to appear in the “predict” methods. I think it’s more useful to show you the problem than to try to explain it, so here is the first one, which happens in learn.get_preds:

dl = self.dls.test_dl([item], num_workers=0)

inp,preds,_,dec_preds = self.get_preds(dl=dl, with_input=True, with_decoded=True)

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-20-4149556023ad> in <module>

1 dl = self.dls.test_dl([item], num_workers=0)

----> 2 inp,preds,_,dec_preds = self.get_preds(dl=dl, with_input=True, with_decoded=True)

~/git/fastai2/fastai2/learner.py in get_preds(self, ds_idx, dl, with_input, with_decoded, with_loss, act, inner, reorder, **kwargs)

235 res[pred_i] = act(res[pred_i])

236 if with_decoded: res.insert(pred_i+2, getattr(self.loss_func, 'decodes', noop)(res[pred_i]))

--> 237 if reorder and hasattr(dl, 'get_idxs'): res = nested_reorder(res, tensor(idxs).argsort())

238 return tuple(res)

239

~/git/fastai2/fastai2/torch_core.py in nested_reorder(t, idxs)

613 "Reorder all tensors in `t` using `idxs`"

614 if isinstance(t, (Tensor,L)): return t[idxs]

--> 615 elif is_listy(t): return type(t)(nested_reorder(t_, idxs) for t_ in t)

616 if t is None: return t

617 raise TypeError(f"Expected tensor, tuple, list or L but got {type(t)}")

~/git/fastai2/fastai2/torch_core.py in <genexpr>(.0)

613 "Reorder all tensors in `t` using `idxs`"

614 if isinstance(t, (Tensor,L)): return t[idxs]

--> 615 elif is_listy(t): return type(t)(nested_reorder(t_, idxs) for t_ in t)

616 if t is None: return t

617 raise TypeError(f"Expected tensor, tuple, list or L but got {type(t)}")

~/git/mantisshrimp/mantisshrimp/data/load.py in nested_reorder2(t, idxs)

83 if isinstance(t, Bucket):

84 return t[idxs]

---> 85 return _old_nested_reorder(t, idxs)

86 fastai2.torch_core.nested_reorder = nested_reorder2

~/git/fastai2/fastai2/torch_core.py in nested_reorder(t, idxs)

615 elif is_listy(t): return type(t)(nested_reorder(t_, idxs) for t_ in t)

616 if t is None: return t

--> 617 raise TypeError(f"Expected tensor, tuple, list or L but got {type(t)}")

618

619 # Cell

TypeError: Expected tensor, tuple, list or L but got <class 'dict'>

The main culprit of the problem here is is_listy.

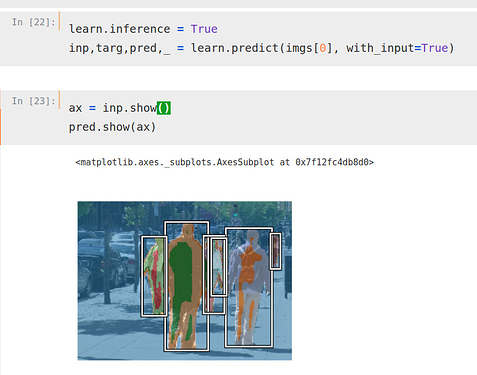
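To make the failure concrete, here is a minimal sketch of a dict-aware reorder. It is not fastai’s code: `Column` is a hypothetical stand-in for a tensor so the example runs without torch, `nested_reorder_with_dicts` is a made-up name, and `is_listy` is a simplified version (as far as I can tell, the real one also accepts `L`, slices and generators, but never dicts).

```python
class Column:
    "Hypothetical stand-in for a tensor: a batch that can be indexed by a list of positions."
    def __init__(self, values):
        self.values = list(values)

    def __getitem__(self, idxs):
        # Pick the items in the order given by `idxs`
        return Column(self.values[i] for i in idxs)


def is_listy(x):
    # Simplified assumption: only tuples and lists count as containers
    return isinstance(x, (tuple, list))


def nested_reorder_with_dicts(t, idxs):
    "Reorder every `Column` found in `t`, recursing into tuples, lists and dicts."
    if isinstance(t, Column):
        return t[idxs]
    if isinstance(t, dict):
        # The extra branch: without it a dict falls through to the TypeError below
        return {k: nested_reorder_with_dicts(v, idxs) for k, v in t.items()}
    if is_listy(t):
        return type(t)(nested_reorder_with_dicts(t_, idxs) for t_ in t)
    if t is None:
        return t
    raise TypeError(f"Expected Column, tuple, list or dict but got {type(t)}")


# A result tuple where the predictions are a dict, as in the traceback
res = (Column([10, 20, 30]), {"boxes": Column(["a", "b", "c"]), "labels": Column([1, 2, 3])})
out = nested_reorder_with_dicts(res, [2, 0, 1])
```

The dict branch is the only difference from the behaviour in the traceback. Whether adding such a branch upstream is acceptable is a separate question; the sketch only shows where the recursion gives up.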

Once that problem is solved, a second one shows up inside learn.predict, here:

dec = self.dls.decode_batch(inp + tuplify(dec_preds))[0]

tuplify ultimately relies on is_iter, so our dec_preds is treated as an iterable and does not end up wrapped in a tuple, which is what we need here.
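The effect can be reproduced with a simplified reimplementation of the helpers. This is a sketch under assumptions, not fastcore’s actual code: the real `is_iter` also checks `ndim`, the real `listify` and `tuplify` take extra arguments, and the dict-shaped `dec_preds` is made up for illustration.

```python
from collections.abc import Iterable


def is_iter(o):
    # Simplified assumption: anything iterable counts
    return isinstance(o, Iterable)


def listify(o):
    if o is None:
        return []
    if isinstance(o, list):
        return o
    if isinstance(o, str):
        return [o]
    if is_iter(o):
        # Iterables are unpacked, not wrapped
        return list(o)
    return [o]


def tuplify(o):
    return tuple(listify(o))


# Hypothetical decoded predictions for a single item
dec_preds = {"boxes": [[0, 0, 10, 10]], "labels": [3]}

unpacked = tuplify(dec_preds)  # iterating a dict yields its keys
wrapped = (dec_preds,)         # what decode_batch would need appended to inp
```

Here `unpacked` is `("boxes", "labels")`, so `inp + tuplify(dec_preds)` would append the keys instead of the prediction itself.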

This is what led me to this crazy Bucket solution, which required a lot of monkey-patching… I would love a simpler solution if you think it’s possible =)
