I updated requirements.txt (see the sample repo) for my web app, re-exported my model with fastai 1.0.43, and it works very well on Render.

I don't know how many fastai web apps are running on Render, but it would be great to have a list.

It was failing because fastai 1.0.43 no longer exists on PyPI (@sgugger, do you know why?)

I’ve changed requirements.txt in the sample repo to always point to the latest version of fastai.

We made a hotfix because this version had a serious bug. You should look for 1.0.43.post1.

I’ve updated the deployment guide to use this generator for Google Drive.
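The generator linked from the guide is not reproduced here. As a rough sketch, assuming the share link has one of the common shapes `https://drive.google.com/file/d/<FILE_ID>/view?...` or `https://drive.google.com/open?id=<FILE_ID>`, a direct-download URL can be built from the file id. The function name is hypothetical, and for large files Google may still serve an HTML confirmation page instead of the raw file.

```python
import re
from urllib.parse import parse_qs, urlparse


def drive_direct_link(share_url: str) -> str:
    """Turn a Google Drive share link into a direct-download URL (sketch)."""
    # Shape 1: https://drive.google.com/file/d/<FILE_ID>/view?usp=sharing
    match = re.search(r"/file/d/([A-Za-z0-9_-]+)", share_url)
    if match:
        file_id = match.group(1)
    else:
        # Shape 2: https://drive.google.com/open?id=<FILE_ID>
        ids = parse_qs(urlparse(share_url).query).get("id")
        if not ids:
            raise ValueError(f"could not find a file id in {share_url!r}")
        file_id = ids[0]
    return f"https://drive.google.com/uc?export=download&id={file_id}"
```

The resulting URL is what the server would download the exported .pkl from; if it still returns a web page, the model load fails later with an unpickling error.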

I have deployed my model on Render using the HTML template from the bears example, but at the moment I have to go to the webpage and upload an image manually.

Is there a way I can use POST and GET requests from a Python script to upload the image and get the reply back?

Thanks in advance for any advice on this matter

Yes, you can use the requests library to send a POST request to the /analyze endpoint defined in the code and parse the JSON result.
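A minimal sketch of such a script, assuming the sample server's behavior described later in this thread: it reads the upload from a form field named "file" and replies with JSON of the form {"result": ...}. The helper names and the service URL are hypothetical.

```python
import mimetypes
from pathlib import Path

import requests


def build_analyze_request(base_url: str, image_bytes: bytes,
                          filename: str = "image.jpg") -> requests.PreparedRequest:
    """Prepare a multipart POST to <base_url>/analyze with the image in the 'file' field."""
    content_type = mimetypes.guess_type(filename)[0] or "application/octet-stream"
    # The server looks the upload up under the form key "file";
    # any other key ends in KeyError: 'file' (an HTTP 500) on the server.
    files = {"file": (filename, image_bytes, content_type)}
    url = base_url.rstrip("/") + "/analyze"
    return requests.Request("POST", url, files=files).prepare()


def classify(base_url: str, image_path: str, timeout: float = 30.0) -> str:
    """Upload an image file and return the 'result' field of the JSON reply."""
    path = Path(image_path)
    request = build_analyze_request(base_url, path.read_bytes(), path.name)
    with requests.Session() as session:
        response = session.send(request, timeout=timeout)
    response.raise_for_status()       # raise on 4xx/5xx instead of failing later in .json()
    return response.json()["result"]  # the sample server replies {"result": "<label>"}
```

For example, classify("https://your-app.onrender.com", "bear.jpg") would return the predicted label as a string; both the URL and the file name are placeholders.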

I have been trying to do this and am struggling with a CORS error. I have my own React front end that posts the picture to the Render API and awaits a response with the predictions. I played around with the middleware options on the Render side, and nothing seems to make a difference.

I added:

app.add_middleware(CORSMiddleware, allow_origins=[''], allow_methods=[''], allow_headers=['*'])

but it still returns a 500 error with "Reason: CORS header 'Access-Control-Allow-Origin' missing".

Any ideas…

Try setting allow_origins to ['*'], as described in the Starlette middleware docs (starlette/docs/middleware.md in encode/starlette on GitHub).

Hey Anurag - thanks for the quick response.

Apologies, that was a typo on my end: I have set allow_origins=['*'] in the CORSMiddleware and still get the error message.

Thanks very much for the help!

Would you be able to show me how I might structure the code to POST the image and parse the result? Sorry, I'm new to both Python and HTTP requests.

At the moment, using code I found online, I'm attempting to POST to /analyze, but I'm getting an HTTP 500 response.

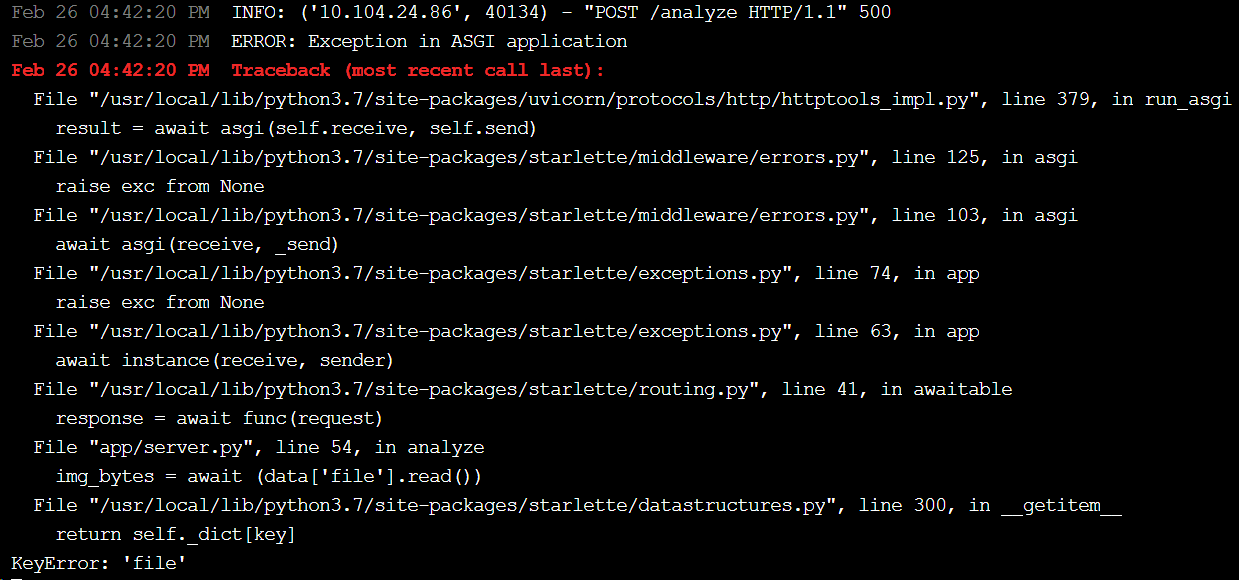

When I check the logs on the server side, I get this error message:

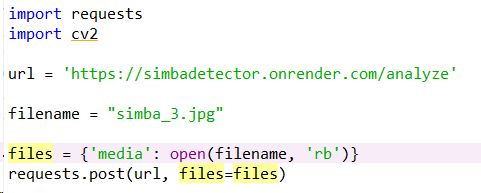

This is the Python code I am using, but I don't know if it's correct:

I still have the same problem as @sgugger. How should I fix it?

Thanks.

What is the actual value of the response header for Access-Control-Allow-Origin? You can look it up using Chrome inspector: https://developers.google.com/web/tools/chrome-devtools/network/#search
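As a browser-free alternative, a sketch that sends the kind of cross-origin POST a front end would make (with an Origin header) and reports both the status code and the Access-Control-Allow-Origin header. The helper names, the origin and the "file" field name are assumptions based on this thread, not part of the sample app.

```python
from typing import Optional, Tuple

import requests


def build_cors_probe(url: str, origin: str, image_bytes: bytes) -> requests.PreparedRequest:
    """Prepare a POST that carries an Origin header, like a browser's cross-origin fetch."""
    files = {"file": ("probe.jpg", image_bytes, "image/jpeg")}
    return requests.Request("POST", url, headers={"Origin": origin}, files=files).prepare()


def probe_cors(url: str, origin: str, image_bytes: bytes) -> Tuple[int, Optional[str]]:
    """Return the status code and the Access-Control-Allow-Origin header (None if absent)."""
    with requests.Session() as session:
        response = session.send(build_cors_probe(url, origin, image_bytes), timeout=30)
    return response.status_code, response.headers.get("Access-Control-Allow-Origin")
```

A result such as (500, None) would suggest looking at the server error in the logs first, rather than at the CORS settings.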

You have to send the image in the POST request under the key "file", as the stack trace says:

KeyError: 'file'

What is the actual error? I don't think @sgugger has any problems on Render.

This is the error I have, which I also saw in a previous post:

Feb 27 03:43:10 PM Traceback (most recent call last):

File "app/server.py", line 43, in <module>

learn = loop.run_until_complete(asyncio.gather(*tasks))[0]

File "/usr/local/lib/python3.7/asyncio/base_events.py", line 584, in run_until_complete

return future.result()

File "app/server.py", line 31, in setup_learner

learn = load_learner(path, export_file_name)

File "/usr/local/lib/python3.7/site-packages/fastai/basic_train.py", line 501, in load_learner

state = torch.load(Path(path)/fname, map_location='cpu') if defaults.device == torch.device('cpu') else torch.load(Path(path)/fname)

File "/usr/local/lib/python3.7/site-packages/torch/serialization.py", line 368, in load

return _load(f, map_location, pickle_module)

File "/usr/local/lib/python3.7/site-packages/torch/serialization.py", line 532, in _load

magic_number = pickle_module.load(f)

_pickle.UnpicklingError: invalid load key, '<'.

Feb 27 03:43:11 PM error building image: error building stage: waiting for process to exit: exit status 1

Feb 27 03:43:11 PM error: exit status 1

These errors are typically caused by a bad export of the .pkl file, or by a download URL that isn't the raw download link. See the discussion about creating raw download links in this thread.
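The "invalid load key, '<'" in the traceback means the first byte of the downloaded file was "<", which most likely makes it an HTML page rather than the exported model. A sketch of a quick check on the downloaded file, using only the standard library; the function name is hypothetical, and the signatures it tests are the legacy pickle-based torch.save format (starts with the pickle protocol byte 0x80) and the zip-based format used by newer PyTorch versions.

```python
def sniff_model_file(path: str) -> str:
    """Guess what a downloaded model file really is from its first bytes (sketch)."""
    with open(path, "rb") as f:
        head = f.read(16)
    if head.lstrip().startswith(b"<"):
        return "html"    # a web page (e.g. a share/preview page), not the raw .pkl
    if head.startswith(b"PK\x03\x04"):
        return "zip"     # zip-based torch.save format (newer PyTorch)
    if head[:1] == b"\x80":
        return "pickle"  # legacy pickle-based torch.save format
    return "unknown"
```

Running it on the file right after the download step (for example on export.pkl) and failing the build when it returns "html" gives a clearer error than the unpickling traceback.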

Hi @anurag,

When I tried to build the sample repo's Docker image with the latest fastai library (currently 1.0.46), server.py fails to run with the following error:

Step 7/9 : RUN python app/server.py

---> Running in a972aa18622c

/usr/local/lib/python3.7/site-packages/torch/serialization.py:435: SourceChangeWarning: source code of class 'torchvision.models.resnet.BasicBlock' has changed. you can retrieve the original source code by accessing the object's source attribute or set torch.nn.Module.dump_patches = True and use the patch tool to revert the changes.

warnings.warn(msg, SourceChangeWarning)

Traceback (most recent call last):

File "app/server.py", line 46, in <module>

learn = loop.run_until_complete(asyncio.gather(*tasks))[0]

File "/usr/local/lib/python3.7/asyncio/base_events.py", line 584, in run_until_complete

return future.result()

File "app/server.py", line 34, in setup_learner

learn = load_learner(path, export_file_name)

File "/usr/local/lib/python3.7/site-packages/fastai/basic_train.py", line 549, in load_learner

state = torch.load(Path(path)/fname, map_location='cpu') if defaults.device == torch.device('cpu') else torch.load(Path(path)/fname)

File "/usr/local/lib/python3.7/site-packages/torch/serialization.py", line 368, in load

return _load(f, map_location, pickle_module)

File "/usr/local/lib/python3.7/site-packages/torch/serialization.py", line 542, in _load

result = unpickler.load()

AttributeError: Can't get attribute 'ImageItemList' on <module 'fastai.vision.data' from '/usr/local/lib/python3.7/site-packages/fastai/vision/data.py'>

The command '/bin/sh -c python app/server.py' returned a non-zero code: 1

I changed requirements.txt to install fastai==1.0.43.post1 instead, and that worked. I'm not sure whether some recent change to ImageItemList caused this problem. Thanks.

Yijin
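Pinning works because load_learner unpickles the exported classes by name, so the fastai version on the server has to match the one used for export. A sketch of a startup check that fails with a readable message instead of the AttributeError above; EXPORTED_WITH and check_fastai_version are hypothetical names. importlib.metadata needs Python 3.8+, so on the Python 3.7 image in this traceback you would pass the installed version in yourself.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional

# Hypothetical value: the fastai version that learn.export() was run with.
EXPORTED_WITH = "1.0.43.post1"


def check_fastai_version(expected: str, installed: Optional[str] = None) -> str:
    """Raise a clear error if the installed fastai differs from the export version."""
    if installed is None:
        try:
            installed = version("fastai")
        except PackageNotFoundError:
            raise RuntimeError("fastai is not installed") from None
    if installed != expected:
        raise RuntimeError(
            f"model was exported with fastai {expected} but {installed} is installed; "
            f"pin fastai=={expected} in requirements.txt or re-export the model"
        )
    return installed
```

Calling check_fastai_version(EXPORTED_WITH) before load_learner in server.py would turn a version mismatch into one explicit line in the build log.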

Oh, I see where I was going wrong now. Thanks for the help, @anurag!

I have the image being posted now, but could you suggest how I might parse the JSON response in the Python script after the POST? The server returns:

return JSONResponse({'result': str(prediction)})

Never mind, @anurag, I actually just solved my problem! Thanks so much for the help!

It seems to be just a change in the exported data between fastai 1.0.44 and 1.0.46 (1.0.45 was a duplicate of 1.0.44, so it was skipped). Confirmed here.

Thanks.

Yijin

It was this commit I believe: https://github.com/fastai/fastai/commit/fe5d4a65f02be706a8bcf3c12e98460656d5dab2