Hi Matt,

I am seeing the error below in the CloudWatch (CW) logs while deploying the model to SageMaker:

16:58:58

Creating DataBunch object

16:58:58

[2019-03-11 22:58:57 +0000] [30] [ERROR] Error handling request /ping

16:58:58

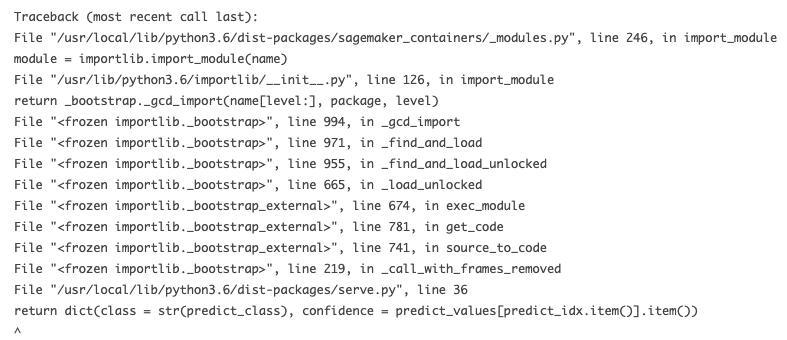

Traceback (most recent call last):

File "/usr/local/lib/python3.6/dist-packages/sagemaker_containers/_functions.py", line 85, in wrapper

return fn(*args, **kwargs)

File "/usr/local/lib/python3.6/dist-packages/food.py", line 15, in model_fn

empty_data = ImageDataBunch.load_empty(path)

File "/usr/local/lib/python3.6/dist-packages/fastai/data_block.py", line 715, in _databunch_load_empty

sd = LabelLists.load_empty(path, fn=fname)

File "/usr/local/lib/python3.6/dist-packages/fastai/data_block.py", line 563, in load_empty

return LabelLists.load_state(path, state)

File "/usr/local/lib/python3.6/dist-packages/fastai/data_block.py", line 554, in load_state

train_ds = LabelList.load_state(path, state)

File "/usr/local/lib/python3.6/dist-packages/fastai/data_block.py", line 664, in load_state

x = state['x_cls']([], path=path, processor=state['x_proc'], ignore_empty=True)

16:58:58

KeyError: 'x_cls'

16:58:58

During handling of the above exception, another exception occurred:

16:58:58

Traceback (most recent call last):

File "/usr/local/lib/python3.6/dist-packages/gunicorn/workers/base_async.py", line 56, in handle

self.handle_request(listener_name, req, client, addr)

File "/usr/local/lib/python3.6/dist-packages/gunicorn/workers/ggevent.py", line 160, in handle_request

addr)

File "/usr/local/lib/python3.6/dist-packages/gunicorn/workers/base_async.py", line 107, in handle_request

respiter = self.wsgi(environ, resp.start_response)

File "/usr/local/lib/python3.6/dist-packages/sagemaker_pytorch_container/serving.py", line 107, in main

user_module_transformer.initialize()

File "/usr/local/lib/python3.6/dist-packages/sagemaker_containers/_transformer.py", line 157, in initialize

self._model = self._model_fn(_env.model_dir)

File "/usr/local/lib/python3.6/dist-packages/sagemaker_containers/_functions.py", line 87, in wrapper

six.reraise(error_class, error_class(e), sys.exc_info()[2])

File "/usr/local/lib/python3.6/dist-packages/six.py", line 692, in reraise

raise value.with_traceback(tb)

File "/usr/local/lib/python3.6/dist-packages/sagemaker_containers/_functions.py", line 85, in wrapper

return fn(*args, **kwargs)

File "/usr/local/lib/python3.6/dist-packages/food.py", line 15, in model_fn

16:58:58

10.32.0.2 - - [11/Mar/2019:22:58:57 +0000] "GET /ping HTTP/1.1" 500 141 "-" "AHC/2.0"

empty_data = ImageDataBunch.load_empty(path)

File "/usr/local/lib/python3.6/dist-packages/fastai/data_block.py", line 715, in _databunch_load_empty

sd = LabelLists.load_empty(path, fn=fname)

File "/usr/local/lib/python3.6/dist-packages/fastai/data_block.py", line 563, in load_empty

return LabelLis

16:58:58

sagemaker_containers._errors.ClientError: 'x_cls'
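
For reference, here is how I read the error chain in the log, as a minimal pure-Python sketch. It is not the actual sagemaker_containers or fastai code: `ClientError`, `error_wrapper`, `load_state`, and the contents of `state` are hypothetical stand-ins I wrote to mirror the frames in the traceback. The assumption is that the saved state simply has no `x_cls` key, so the lookup raises `KeyError`, and the wrapper around `model_fn` re-raises it as `ClientError`, which is why `/ping` returns 500.

```python
import sys


class ClientError(Exception):
    """Hypothetical stand-in for sagemaker_containers._errors.ClientError."""


def error_wrapper(fn, error_class):
    """Re-raise any exception from fn as error_class, keeping the traceback."""
    def wrapper(*args, **kwargs):
        try:
            return fn(*args, **kwargs)
        except Exception as e:
            # Plain Python 3 form of the six.reraise(...) call seen in the log.
            raise error_class(e).with_traceback(sys.exc_info()[2])
    return wrapper


def load_state(state):
    # Mirrors the failing lookup from the log: the key is absent.
    return state["x_cls"]


def model_fn(model_dir):
    # Assumed saved state that lacks the 'x_cls' entry (illustration only).
    state = {"x_proc": []}
    return load_state(state)


wrapped_model_fn = error_wrapper(model_fn, ClientError)

try:
    wrapped_model_fn("model_dir_placeholder")
except ClientError as err:
    message = str(err)
    context_type = type(err.__context__).__name__
    print(f"{type(err).__name__}: {message} (caused by {context_type})")
```

If this reading is right, the final `ClientError: 'x_cls'` line is only the wrapped form of the original `KeyError`, and the real question is why the loaded state has no `x_cls` entry.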