Please confirm you have the latest versions of fastai, fastcore, fastscript, and nbdev prior to reporting a bug (delete one): YES

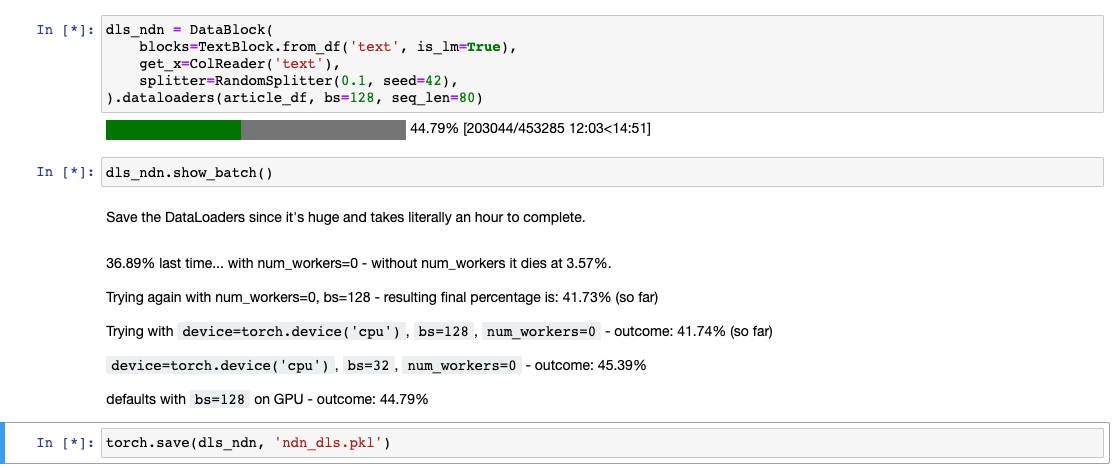

Hey guys, I have a large dataset of 400k text documents that I’m training a language model on while working through Chapter 10 of the book.

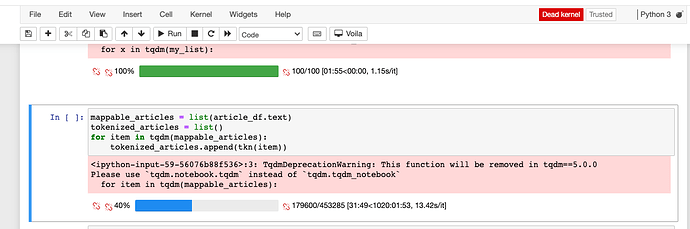

The DataLoader setup process always seems to hang at some point. When I run top at the beginning of loading, it shows 6-8 python processes. When I run top after the freeze, it reports some number of ‘zombie processes’, which I’m assuming have thrown an error but are not terminating.

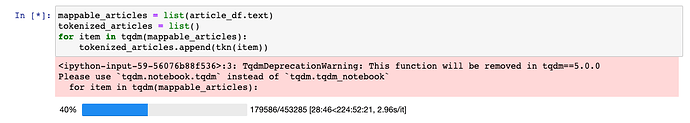

Is there a correct way to debug this sort of thing, something like a try/except but for individual items in the DataLoader? Currently I can’t get a stack trace since it never exits. Maybe the tokenizer is hitting an error?
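To make the per-item idea concrete, this is roughly what I have in mind: run the processing step on each document in the main process (no workers), catch any exception, and record which item failed. It's only a sketch. `find_bad_items` and `toy_tokenize` are hypothetical names I made up for illustration, not fastai functions, and the real tokenizer would need to be swapped in for `toy_tokenize`.

```python
import traceback

def find_bad_items(items, process):
    """Run `process` on each item in the main process and collect failures."""
    failures = []
    for idx, item in enumerate(items):
        try:
            process(item)
        except Exception:
            # Keep the index and full traceback so the bad document can be inspected later.
            failures.append((idx, traceback.format_exc()))
    return failures

# Hypothetical stand-in for the real tokenizer: splits on whitespace,
# and raises AttributeError on anything that is not a string.
def toy_tokenize(text):
    return text.split()

docs = ["a short document", None, "another one"]
failures = find_bad_items(docs, toy_tokenize)
for idx, tb in failures:
    print(f"item {idx} failed:\n{tb}")
```

This would only surface errors raised as Python exceptions. If the workers are being killed from outside (for example by running out of memory), nothing would be caught here, though running in a single process should at least make the failure easier to see.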

It looks like it’s not hanging completely: at around 40% it slows to a crawl, dropping from 200 items/second to around 3. The rate then jumps back and forth for a while, and then the kernel dies.