I’m working through the lesson 3 head pose notebook and trying to apply this lesson to detecting cars on the Lawrence Livermore National Labs (https://gdo152.llnl.gov/cowc/) dataset. I currently have the data residing in a directory which includes jpegs (256x256) with an associated text file that has the list of coordinates for a point on the center of the car. The following code, opens the text file, converts the coordinates to the image space, and then displays the image with the points on the cars:

##Get text file associated with image##

def img2txt_name(f):

return path/f'{str(f)[:-4]}.txt'

##Function to convert and extract coordinates of groundtruth (labels)##

def convert_xy(df):

df.columns=['class','x_coord','y_coord','ht','wt']

df['x_coord_conv'] = (df['x_coord']*256)

df['y_coord_conv'] = (df['y_coord']*256)

df = tensor(df['y_coord_conv'], df['x_coord_conv'])

return df

## return only the coordinate labels for the image ##

def get_car_features(f):

data = np.genfromtxt(img2txt_name(f))

data = pd.DataFrame(data)

if data.shape[1] == 1:

data = data.T

coords_df = convert_xy(data)

return coords_df.T

else:

coords_df = convert_xy(data)

return coords_df.T

ctr = get_car_features(fname)

img.show(y=get_ip(img, ctr), figsize=(10, 10))

This produces the right output:

However I am running into issues with the data block api. Here is the code snippet:

data = (PointsItemList.from_folder(path)

.split_by_rand_pct()

.label_from_func(get_car_features)

.transform(get_transforms(), tfm_y=True, size=(256,256))

.databunch().normalize(imagenet_stats)

)

Just like in the head pose notebook, I am using PointItemList and point to the folder with all the images & text files. I want to split by random percent and then use the function from above to open the text files and get the coordinates (labels in this case). The issue I am having is that the data block is opening both the jpegs and the text files which creates torch size mismatches - when I want it to create the data bunch with the images, and get the labels from the text files separately. What can I do to correct this issue? Should I move all text files to a different directory?

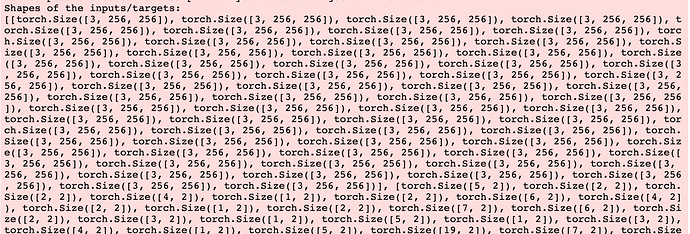

Image showing the output issue below (you can tell the images from the txt files in the torch size):

The current directory looks like this:

image1.jpg

image1.txt (structured as follows) <class, x_coord, y_coord, length, width>

image2.jpg

image2.txt…

Thanks in advance!