If we’re using the Keras’ ImageDataGenerator to augment the training set, should we also augment the validation set?

I was thinking about this today also. My thought is that as the validation data isn’t used for training, there probably isn’t any good reason to.

I suppose you could do data augmentation on the validation set if you think that you might get a fairer or more accurate measure of how well your model is really doing on unseen data if you augment it first. But this seems like a stretch to me. I’d be curious on Jeremy’s thoughts.

Not for validation. We’ll be discussing this tomorrow.

I was kinda running a thought experiment with some data augmentation.

Say we have a picture of a cat, and we augment it by skewing it a bit, then send it thru the network.

The network has just seen a skewed cat.

Say we DIDN’T do data augmentation – the network would just see a normal cat.

If data augmentation just changes our original image, and we don’t continue to use the original image, I don’t see the benefit. We haven’t augmented a datum, just replaced it with a slightly different version of same thing. Shouldn’t data augmentation be used in such a way that the network sees BOTH the regular cat, and the skewed cat? Like, bootstrapping our data to give the network more perspective?

Yes we should give it both - but since it’s randomized, it’ll see images close to the real cat too! (Although in my pre-computed augmented data, I explicitly append the original data, for just that reason)

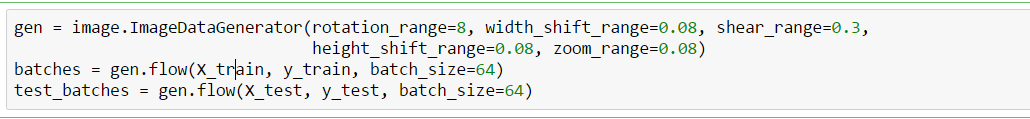

@jeremy: I was going through the code for data augmentation, and it wsn’t clear where we’re explicitly appending the data to the original images. Here’s the code from the MNIST notebook

. Is the gen.flow() appending the data internally as it gets generated?

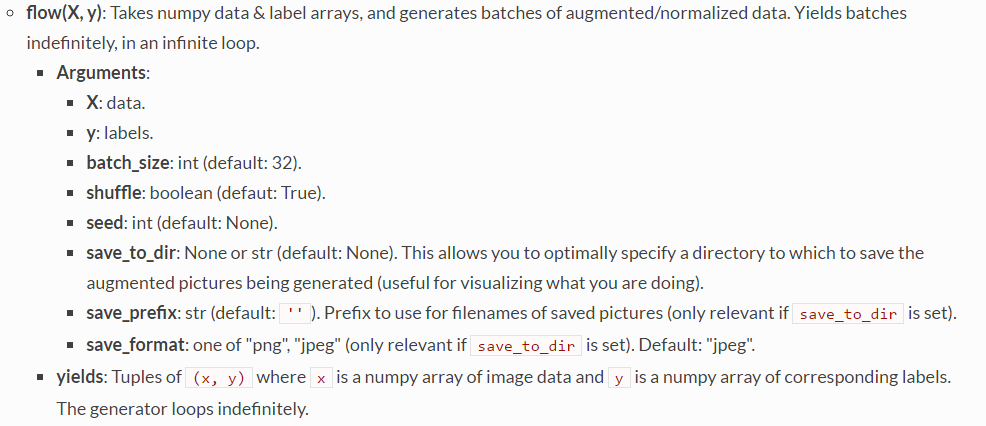

Here’s the documentation for gen.flow(), which doesn’t appear to be very clear about how many images are being appended.

There are 5 operations happening in the code shown above: rotation, width shift, shear, height shift and zoom. So there’s another question that arises: Do we get 1 image with all 5 operation applied or do we get 5 images with an operation applied once?

Here’s the keras documentation for reference:

It’s cooler than you’re imagining…  Rather than appending images, it is augmenting the on the fly. So each time it grabs a new batch from the generator, that batch is being randomly augmented.

Rather than appending images, it is augmenting the on the fly. So each time it grabs a new batch from the generator, that batch is being randomly augmented.

It is definitely cool that the augmentation is done on the fly!

But the question still remains – if I augment 10 images, and skew each one, has my number of images in my network just expanded to 20?

(And by extension, if I apply 5 augmentations to 10 images in Keras, do I have 10 or 20 or 50? The documentation in Keras leaves a lot to be desired.)

You also mentioned that you explicitly append the original data – can you point me to this code? It’s a bit confusing to me now (and I apologize!) – but you mentioned in your most recent answer, 'Rather than appending images… ’ So now I’m a little unsure what exactly is happening. By appending, I’m hoping we are both talking about appending new images to the dataset.

Thanks for your help.

We’re talking across each other still… The augmentation isn’t creating any new images. It is adjusting what’s coming out of the generator (flow(), or flow_from_directory()). The number of images that come in each batch from the generator is defined by the batch_size. The amount or type of augmentation doesn’t change this. Try it for yourself and see - create a generator, and call next() on it a few times, and look at the images you get back.

Is that more clear?

def get_batches(dirname, gen=image.ImageDataGenerator(), shuffle=False, batch_size=4, class_mode='categorical'):

return gen.flow_from_directory(dirname, target_size=(224,224),

class_mode=class_mode, shuffle=shuffle, batch_size=batch_size)

gen = image.ImageDataGenerator(horizontal_flip=True,dim_ordering='th')

batches = get_batches(path+'train',gen,shuffle=False, batch_size=1)

a_batch = batches.next()

plot(a_batch[0][0])

print(a_batch[1])

print(batches.total_batches_seen)

From the above little experiment, I see that flipping occurs randomly which means images that come out of the flow_from_directory can also contain original images i.e. images are not flipped. Since the next call can go on indefinitely, it is hard to figure out when to stop the iteration or to ensure that the model has seen all the images (including augmented). I was wondering if fit_generator or predict_generator automatically takes care of seeing all the images (including augmented)

Generally you would also have random rotations, shifts, etc - and since these are continuous variables, there’s literally no such thing as “all the images”.

By continuous variables do you mean for example say rotation=10, the images can get rotated in infinite number of degrees between 0 and 10 degrees and the imageDataGenerator randomly chooses any one of the infinite combinations?

Exactly!

Great! How does the fit_generator handle this? From nb_epoch?

Sorry I don’t understand the question - can you clarify?

oh … thats ok I think I figured that out. I was also thinking because the likelyhood of seeing the original image during the training process when using gen is quite rare we can probably do this to ensure original images are also trained upon.

model = Sequential([..])

train_batches = get_batches(path, ...)

model.fit_generator(train_batches)

gen = image.ImageDataGenerator(..)

train_batches_gen = get_batches(path, gen...)

model.fit_generator(train_batches_gen,..)

I am guessing the above might work since we are ensuring the original images are trained upon in addition to the augmented images. Thoughts?

You can try it - it’s something I tried in one of the statefarm notebooks, but I didn’t actually test the impact of it. I’m not sure that there’s necessarily any benefit to ensuring that totally unchanged images are included in the batches.

I do think it would be better if keras used normally distributed random numbers when doing the augmentation, rather than uniform - it would be a simple code change to do this in keras/preprocessing/image.py . That would mean most images would be more close to the original image on most dimensions most of the time.

@jeremy Recently came across the term “2X augmentation” of images, wondering what this means exactly as it was used by one of the Winners in this post:

I read that zca_whitening has had some good results. I get the following error when I use it:

output of generator should be a tuple (x, y, sample_weight) or (x, y). Found: None

Looks similar to this issue: "featurewise_center = True" is not working for ImageDataGenerator::flow_from_directory() · Issue #3679 · keras-team/keras · GitHub

Any one successfully used zca_whitening ?

I think you have to call data generator’s .fit() on the entire data set so that it’ll calculate the mean and std deviation and perhaps other statistics needed to do ZCA whitening.