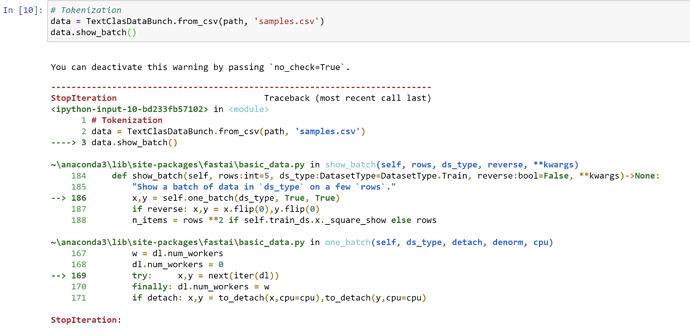

Hey everyone! I’m currently attempting some NLP and created a .csv file with 3 columns: ‘label’, ‘text’, and ‘is_validation’. I pd.read_csv the .csv file and am able to access the indices with the corresponding strings of text, when specifying ‘text’ as column. However, when I’m attempting to tokenize the .csv file (TextClasDataBunch.from_csv) and then enter data.show_batch() I get a ‘Stop Iteration’ error with no further explanation

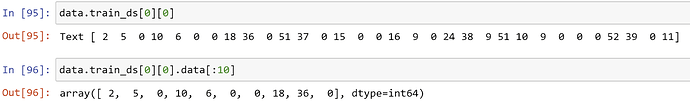

. I tried to interpret the Traceback but am unable to narrow down a solution, as my .csv file looks almost identical to the one used in Lesson 3 (the ‘texts.csv’ from the imdb_sample) and no issues occur there.Furthermore, if the data.show_batch() is omitted and data.train_ds[0][0] is called, there is no text output but only numbers (as opposed to text as in Lesson 3). Calling data.train_ds[0][0].data[:10] returns those same numbers but as a list. Seemingly, the text within the .csv does not get read-in correctly.
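For context on the numbers: text pipelines generally tokenize each string and map every token to an integer id, so seeing integers is not necessarily a sign that the text was misread. Below is a minimal sketch of that idea in plain Python, using a whitespace tokenizer and a tiny made-up corpus. The names `itos`, `stoi`, `numericalize` and `textify` are hypothetical stand-ins chosen for illustration, not fastai's implementation; I believe the equivalent lookup table in fastai v1 is exposed as `data.vocab.itos`, but treat that as an assumption.

```python
from collections import Counter

# Made-up corpus standing in for the 'text' column of the .csv file.
texts = ["this movie was great", "this movie was not great"]

# Naive whitespace tokenization (real tokenizers do much more than this).
tokens = [t.split() for t in texts]

# Build the vocabulary: id 0 is reserved for unknown tokens,
# the remaining ids are assigned by descending frequency.
counts = Counter(tok for doc in tokens for tok in doc)
itos = ["xxunk"] + [tok for tok, _ in counts.most_common()]
stoi = {tok: i for i, tok in enumerate(itos)}


def numericalize(doc):
    """Map a list of tokens to integer ids (unknown tokens map to 0)."""
    return [stoi.get(tok, 0) for tok in doc]


def textify(ids):
    """Map integer ids back to a space-joined string."""
    return " ".join(itos[i] for i in ids)


ids = numericalize(tokens[0])
print(ids)           # a list of integers, one per token
print(textify(ids))  # the original text, recovered from the ids
```

If the ids in `data.train_ds[0][0].data` can be mapped back to readable tokens in the same way, the text was probably read in fine and the problem lies elsewhere.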

A few notes on the data set: it has only 31 labelled samples (‘none’, ‘valid’), of which 10 are validation samples and 21 are training samples. I could imagine that this small sample size has something to do with the error (i.e. the sample is too small to keep iterating over, thus ‘stopping iteration’). However, collecting samples for this type of data is a pain, which is why I turned to the forums before searching for more samples.
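One way to probe the small-sample hypothesis is sketched below in plain Python. It rests on two assumptions that the traceback here does not confirm: that the training loader drops a final incomplete batch, and that the batch size in use is 64. The CSV content is a made-up stand-in with the same column names and the same 21/10 split, and `batches` and `first_batch_size` are hypothetical helpers, not library functions.

```python
import csv
import io

# Made-up stand-in for the real file: 21 training rows, 10 validation rows.
rows = [("none" if i % 2 else "valid", f"sample text {i}", i >= 21)
        for i in range(31)]
buf = io.StringIO()
writer = csv.writer(buf)
writer.writerow(["label", "text", "is_validation"])
writer.writerows(rows)
buf.seek(0)

# Read the file back and split on the 'is_validation' column.
records = list(csv.DictReader(buf))
train = [r for r in records if r["is_validation"] == "False"]
valid = [r for r in records if r["is_validation"] == "True"]


def batches(items, bs, drop_last):
    """Yield consecutive batches; optionally drop a final incomplete batch."""
    for start in range(0, len(items), bs):
        batch = items[start:start + bs]
        if drop_last and len(batch) < bs:
            return
        yield batch


ASSUMED_BS = 64  # assumption: the batch size used when none is specified


def first_batch_size(items, bs, drop_last=True):
    """Return the size of the first batch, or None if there are no batches."""
    try:
        return len(next(batches(items, bs, drop_last)))
    except StopIteration:
        # With fewer items than bs and drop_last=True, no batch is ever yielded.
        return None


print(len(train), len(valid))
print(first_batch_size(train, ASSUMED_BS))  # no full batch fits in 21 samples
print(first_batch_size(train, 8))           # a smaller batch size does fit
```

If those assumptions hold, 21 training samples never fill a single batch of 64, and asking for the first batch raises StopIteration. In that case, trying a smaller batch size (for example passing `bs=8` when building the data bunch, assuming the argument is forwarded) would be a cheap experiment before collecting more data.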

Does anyone have an answer? A tutorial on how to create good .csv files for training would be much appreciated as well! Thanxx