If you do get a chance to look: the code below is my attempt at slightly different versions of `from_csv` (and the `from_df` it calls) that build a validation set containing only labels that also appear in the training set.

    from sklearn.model_selection import train_test_split
    from fastai.vision import *

    def from_df_ws(path:PathOrStr, df:pd.DataFrame, folder:PathOrStr='.', sep=None, valid_pct:float=0.2,
                   fn_col:IntsOrStrs=0, label_col:IntsOrStrs=1, suffix:str='',
                   **kwargs:Any)->'ImageDataBunch':
        "Create from a `DataFrame` `df`."
        # Split the data set
        df_train, df_valid = train_test_split(df, test_size=valid_pct, random_state=420)
        # Find all the rows in valid whose "Id" is not in train (the column name "Id" is hard-coded)
        df_diff = df_valid[~df_valid["Id"].isin(df_train["Id"])]
        # Take those rows out of valid
        df_valid = df_valid[~df_valid["Id"].isin(df_diff["Id"])]
        train_iil = ImageItemList.from_df(df_train, path=path, folder=folder, suffix=suffix, cols=fn_col)
        valid_iil = ImageItemList.from_df(df_valid, path=path, folder=folder, suffix=suffix, cols=fn_col)
        src = (ItemLists(path, train_iil, valid_iil)
               .label_from_df(sep=sep, cols=label_col))
        return ImageDataBunch.create_from_ll(src, **kwargs)

    def from_csv_ws(path:PathOrStr, folder:PathOrStr='.', sep=None, csv_labels:PathOrStr='labels.csv', valid_pct:float=0.2,
                    fn_col:int=0, label_col:int=1, suffix:str='',
                    header:Optional[Union[int,str]]='infer', **kwargs:Any)->'ImageDataBunch':
        "Create from a csv file in `path/csv_labels`."
        path = Path(path)
        df = pd.read_csv(path/csv_labels, header=header)
        return from_df_ws(path, df, folder=folder, sep=sep, valid_pct=valid_pct,
                          fn_col=fn_col, label_col=label_col, suffix=suffix, **kwargs)

    bs = 768
    tfms = get_transforms(max_rotate=20, max_zoom=1.3, max_lighting=0.4, max_warp=0.4, p_affine=1., p_lighting=1.)

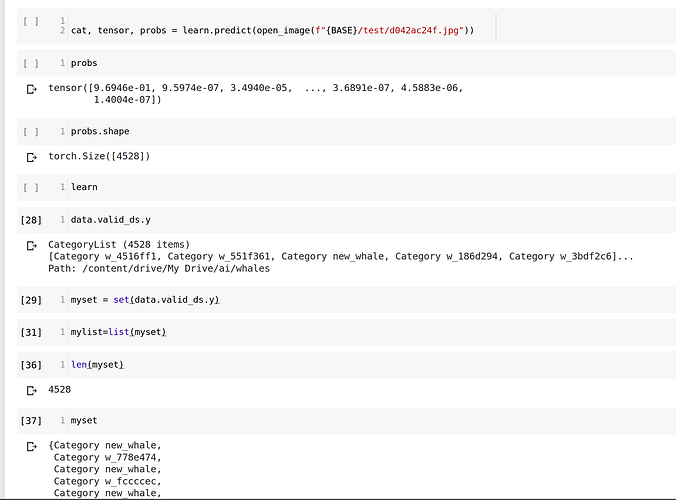

    # BASE and sz are defined elsewhere
    data = from_csv_ws(path=BASE, folder='train', csv_labels="train.csv", ds_tfms=tfms, bs=bs, size=sz)
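
To check the filtering step in `from_df_ws` on its own, without fastai or the image files, here is a minimal sketch on a toy DataFrame. The helper name `drop_unseen_labels`, the `Image` column, and the sample values are made up for illustration; only the `"Id"` column name comes from the code above. It folds the two-step `df_diff` filter into a single `isin` mask, which should select the same rows.

```python
import pandas as pd

def drop_unseen_labels(df_train, df_valid, label_col="Id"):
    """Keep only validation rows whose label also appears in the training split."""
    seen_in_train = df_valid[label_col].isin(df_train[label_col])
    return df_valid[seen_in_train]

# Toy data, invented for this example
df_train = pd.DataFrame({"Image": ["a.jpg", "b.jpg", "c.jpg"],
                         "Id":    ["w_1",   "w_2",   "w_1"]})
df_valid = pd.DataFrame({"Image": ["d.jpg", "e.jpg"],
                         "Id":    ["w_2",   "w_3"]})

filtered = drop_unseen_labels(df_train, df_valid)
print(filtered)  # only the d.jpg row remains; w_3 never occurs in train
```

Note that rows dropped this way are discarded rather than moved into the training set, so the validation set can end up smaller than `valid_pct` of the data.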