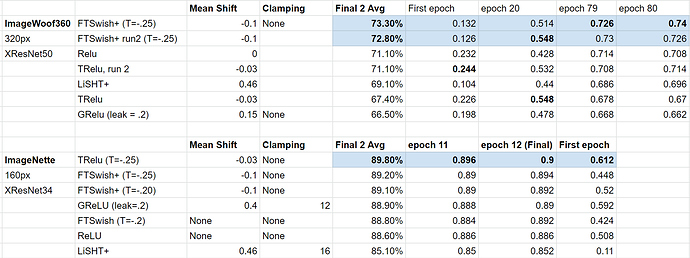

I finally finished a whole series of runs using ImageWoof and XResNet 50. Per Jeremy’s suggestion, this time I ran them for 80 epochs, and also recorded the results at epoch 20.

To strengthen the results, I ran the previous first-place (TRelu) and second-place (FTSwish+) activations twice each.

FTSwish+ was handily the winner on ImageWoof (it won both runs). It was in second place on ImageNette…so my overall recommendation for image recognition seems to be FTSwish+.

As you can see, FTSwish+ was consistently among the top performers. Not shown on this chart is that I ran Relu once before and the results were pretty poor…but somehow that notebook got cleared of its results when I went back to record them, and I’m out of GPU credits at this point to re-run. As I recall, Relu came in at around 66% that time, which is basically on par with GRelu.

In terms of computational efficiency, Relu was fastest, averaging 2:01 per epoch. TRelu was 2:30, and FTSwish+ was around 2:49.

Thus, there is a tradeoff of time for accuracy: relative to Relu, TRelu costs roughly 24% more time per epoch and FTSwish+ roughly 40% more.

Code for FTSwish+ is here if you’d like to try it out:

I’m unclear why the slight bulge on positive values for FTSwish+ would enhance the results, but apparently it does. The whole point of TRelu was to remove that bulge while keeping the negative threshold, yet FTSwish+ still out-performed TRelu.

As an added note, the mean shifting (thanks to Jeremy and our course here) definitely improves the performance vs the original FTSwish.
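
To make the shape difference concrete, here is a minimal scalar sketch in plain Python (not the actual code linked above). It assumes FTSwish+ is `relu(x) * sigmoid(x)` plus a negative threshold, followed by a mean shift, and that TRelu is the same thing without the sigmoid factor. The `threshold` and `mean_shift` defaults are placeholder values for illustration, not numbers taken from these runs.

```python
import math


def sigmoid(x):
    # Numerically stable logistic function for scalar inputs.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def ftswish_plus(x, threshold=-0.25, mean_shift=-0.1):
    # Assumed form: Swish on the positive side, flat threshold on the
    # negative side, then subtract mean_shift (placeholder constants).
    return max(x, 0.0) * sigmoid(x) + threshold - mean_shift


def trelu(x, threshold=-0.25, mean_shift=-0.1):
    # Assumed form: plain Relu with the same threshold and mean shift,
    # i.e. FTSwish+ without the sigmoid factor on positive inputs.
    return max(x, 0.0) + threshold - mean_shift


if __name__ == "__main__":
    # The two functions agree for x <= 0 and differ only for x > 0.
    for x in (-2.0, 0.0, 0.5, 1.0, 2.0, 4.0):
        gap = trelu(x) - ftswish_plus(x)
        print(f"x={x:5.1f}  ftswish+={ftswish_plus(x):7.4f}  "
              f"trelu={trelu(x):7.4f}  gap={gap:7.4f}")
```

Under these assumptions the two activations are identical for negative inputs, and on the positive side they differ by `x * (1 - sigmoid(x))`, which is the curved region being compared against TRelu's straight line.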