For a project where we compare different collaborative filtering algorithms, I built a notebook using the CollabLearner from FastAI v1. The notebook can be found here.

I am wondering if I am understanding correctly how predictions should be made (I looked through the docs, and forum, but didn’t find much tangible info – maybe I missed it).

My main function for scoring is this:

```python
import pandas as pd
import torch

def score(learner, userIds, movieIds, user_col, item_col, prediction_col, top_k=0):
    """Score all users+movies provided and reduce to top_k items per user if top_k > 0."""
    # Map the external ids to the model's internal embedding indices
    u = learner.get_idx(userIds, is_item=False)
    m = learner.get_idx(movieIds, is_item=True)
    # Inference only, so no gradients are needed; convert to numpy for pandas
    with torch.no_grad():
        pred = learner.model.forward(u, m).cpu().numpy()
    scores = pd.DataFrame({user_col: userIds, item_col: movieIds, prediction_col: pred})
    # Best predictions first within each user
    scores = scores.sort_values([user_col, prediction_col], ascending=[True, False])
    if top_k > 0:
        top_scores = scores.groupby(user_col).head(top_k).reset_index(drop=True)
    else:
        top_scores = scores
    return top_scores
```
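To show the top_k reduction step in isolation, here is a minimal sketch on made-up data. The column names and values are hypothetical and only illustrate the sort-then-`groupby().head()` pattern used in `score`.

```python
import pandas as pd

# Toy predictions for two users (values are invented for illustration)
scores = pd.DataFrame({
    "userID": [1, 1, 1, 2, 2],
    "itemID": [10, 11, 12, 10, 12],
    "prediction": [3.5, 4.8, 2.1, 4.0, 4.4],
})

# Sort by user, then by prediction descending, so head(k) keeps the best k per user
ranked = scores.sort_values(["userID", "prediction"], ascending=[True, False])
top_2 = ranked.groupby("userID").head(2).reset_index(drop=True)
print(top_2)
```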

- As can be seen, I am just calling PyTorch’s model.forward() after mapping the external ids to the internal ids. I was expecting get_preds to do that for me, but since it is not overridden by the class, it doesn’t work here. Is there a different method that should be used?

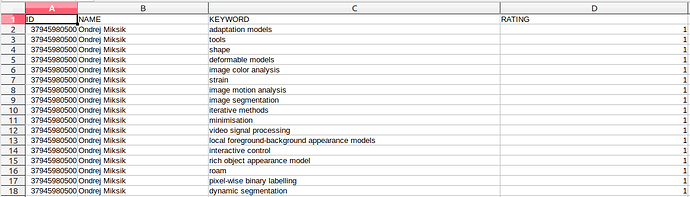

- To calculate all our metrics, I need the train and validation sets, so I pull them out of the learner using the code below, which seems quite complicated (and ends up being quite slow with larger datasets). Is there a more straightforward way? Is the mapping from the original dataset to train and valid accessible somehow?

```python
# learn is an instance of CollabLearner; USER, ITEM and RATING are the column names
valid_df = pd.DataFrame({
    USER: [row.classes[USER][row.cats[0]] for row in learn.data.valid_ds.x],
    ITEM: [row.classes[ITEM][row.cats[1]] for row in learn.data.valid_ds.x],
    RATING: [row.obj for row in learn.data.valid_ds.y],
})

train_df = pd.DataFrame({
    USER: [row.classes[USER][row.cats[0]] for row in learn.data.train_ds.x],
    ITEM: [row.classes[ITEM][row.cats[1]] for row in learn.data.train_ds.x],
    RATING: [row.obj for row in learn.data.train_ds.y],
})
```

- To provide predictions for a set of users, I create a Cartesian product of those users with all the relevant items (all movies in the test set). With a large number of items, that becomes a very large list to score. Is that the way to go, or is there a smarter way?
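For reference, the Cartesian product mentioned above can be built roughly like this. It is a minimal sketch: `user_item_pairs` and the column names are hypothetical helpers for illustration, not taken from the notebook or from fastai.

```python
import numpy as np
import pandas as pd

def user_item_pairs(users, items, user_col="userID", item_col="itemID"):
    """Return one row per (user, item) combination."""
    users = np.asarray(users)
    items = np.asarray(items)
    return pd.DataFrame({
        # Each user is repeated once per item ...
        user_col: np.repeat(users, len(items)),
        # ... and the full item list is tiled once per user
        item_col: np.tile(items, len(users)),
    })

pairs = user_item_pairs([1, 2], [10, 11, 12])
print(pairs)
```

The result has len(users) * len(items) rows, which is why this grows quickly with the number of items.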

Grateful for any input,

Daniel
