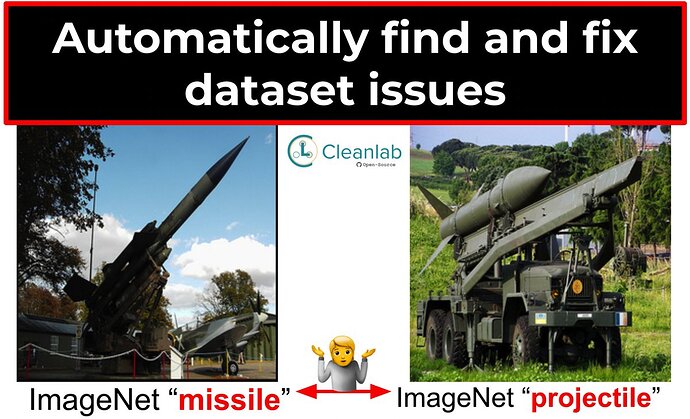

Hi folks, we just open-sourced cleanlab 2.0, a Python package for automatically finding errors in datasets and for doing machine learning and analytics with real-world, messy data and labels. tl;dr: cleanlab provides a framework to streamline data-centric AI.

After the 1.0 launch last year, engineers used cleanlab at Google to clean speech data and train robust models on it, at Amazon to estimate how often the Alexa device doesn’t wake, at Wells Fargo to train reliable financial prediction models, and at Microsoft, Tesla, Facebook, etc.

For those of you curating datasets, we recommend running them through cleanlab to check for potential issues. For supervised learning projects in particular, it’s critical to ensure your test data are actually correctly labeled! Cleanlab can also help you train a more robust version of any model on noisy data; however, we caution that if the test data are noisy as well, you may not see big gains from training with cleanlab (in general, model evaluation should not be done with noisy test data).
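To make the caveat about noisy test data concrete, here is a minimal simulation sketch. It does not use cleanlab at all. Everything in it is an assumption made for illustration: a binary task, a hypothetical "baseline" model that is right 90% of the time, a hypothetical "robust" model that is right 95% of the time, and test labels that are flipped at random 20% of the time. The function name `simulate_accuracy` is made up for this example.

```python
import random


def simulate_accuracy(model_accuracy, label_noise, n=20000, seed=0):
    """Return (accuracy vs. true labels, accuracy vs. noisy labels) on a binary task."""
    rng = random.Random(seed)
    hits_true = 0
    hits_noisy = 0
    for _ in range(n):
        true_label = rng.randint(0, 1)
        # Assumption: the model predicts the true label with probability `model_accuracy`.
        pred = true_label if rng.random() < model_accuracy else 1 - true_label
        # Assumption: the recorded test label is flipped with probability `label_noise`.
        noisy_label = 1 - true_label if rng.random() < label_noise else true_label
        hits_true += pred == true_label
        hits_noisy += pred == noisy_label
    return hits_true / n, hits_noisy / n


# Hypothetical models: a baseline and a more robust one, scored on the same test set.
baseline_true, baseline_noisy = simulate_accuracy(0.90, label_noise=0.20)
robust_true, robust_noisy = simulate_accuracy(0.95, label_noise=0.20)

print(f"gain measured on clean test labels: {robust_true - baseline_true:.3f}")
print(f"gain measured on noisy test labels: {robust_noisy - baseline_noisy:.3f}")
```

Under these assumptions, a model with true accuracy a scores roughly a(1 - p) + (1 - a)p against test labels flipped with probability p, so a real 5-point improvement shows up as only about 3 points when p = 0.2. Real label noise is rarely this uniform, so treat the numbers as a rough intuition rather than a prediction.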

Example Workflow (runnable once `data`, `labels`, and `test_data` are defined):

    import cleanlab.dataset
    import sklearn.ensemble
    from cleanlab.classification import CleanLearning

    # Cleanlab works with any model, fastai/PyTorch/TensorFlow/XGBoost/etc.
    your_favorite_classifier = sklearn.ensemble.RandomForestClassifier()
    cl = CleanLearning(your_favorite_classifier)

    # Cleanlab finds data and label issues in any dataset... in one line of code!
    examples_w_issues = cl.find_label_issues(data, labels)

    # Cleanlab trains a more robust version of your model with noisy data.
    cl.fit(data, labels)

    # Cleanlab estimates the predictions resulting from training on clean data.
    cl.predict(test_data)

    # Cleanlab quantifies class-level issues and overall quality of any dataset.
    cleanlab.dataset.health_summary(labels, confident_joint=cl.confident_joint)

Official announcement blog (more details): https://cleanlab.ai/blog/cleanlab-2/

GitHub: https://github.com/cleanlab/cleanlab

Documentation: https://cleanlab.org/

NeurIPS talk: https://slideslive.com/38971637/finding-millions-of-label-errors-with-cleanlab

Use cleanlab to find issues in your tabular, text, image, or audio datasets!