I recently realized that “classifer free guidance” refers to the fact that it doesn’t need to run a classifier, or train against an adversarial classifier, in order to produce images meeting some criteria (the prompt). (Am I correct, that this is the meaning?)

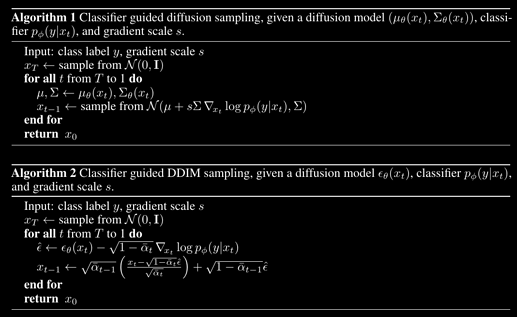

I also noticed that the text to image pipeline often produces bad quality output which a decent classifer could reject, such as the infamous 6-fingered contorted hands and other weirdness. Maybe there is a place for using classifiers to improve the quality of output, or to select only the best output images.

We need to sort out good generated images from bad anyway, so we could keep the bad ones too and train a classifier on them to do that selection process automatically, or to somehow assist in guiding the diffusion process.

I know that the resnet classifiers from early part 1 can classify a hundred or more images per second on my modest 8GB GPU, so it seems to me that using a classifier need not slow things down significantly. We need not run it at every step. If we predict the final image every 10 steps and run a “bizarre vs realistic” classifier on it, this might help to avoid terrible outputs and save time. For example I reckon we could fine-tune a classifier to reject 6-fingered hands, five legged horses, and other weirdness.

If we can detect the region where the weirdness is occuring, either with a bounding box or “heat map”, we could potentially tweak the latents in that region to make it produce something different. I’m not too clever with optimizers and SGD yet, but guess we could somehow bump the latents in the direction needed to reduce the loss on the classifier. Not sure if that could save time compared to trying again with a different seed.

A state of the art 5-legged horse from SD1.5, prompt: “man riding a horse”.