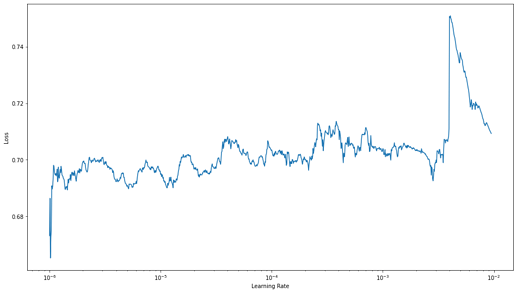

I have a model with an incredibly noisy gradient. How can I find the best learning rate here?

What is your batch size?

My batch size is 8.

That’s probably too low. With a small batch size, the gradient estimate varies a lot from batch to batch, which is the noise you are seeing here. You probably want a larger batch size, such as 32 or 64.
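
To make the batch-size point concrete, here is a rough, framework-free sketch. It assumes a toy one-parameter regression (not your model), and the helper names `batch_gradient` and `gradient_spread` are made up for illustration. It measures how much the mini-batch gradient varies across random batches of size 8, 32, and 64.

```python
import random
import statistics


def batch_gradient(rng, w, batch_size):
    """Mean gradient of 0.5 * (w*x - y)**2 w.r.t. w over one random mini-batch."""
    total = 0.0
    for _ in range(batch_size):
        x = rng.gauss(0.0, 1.0)
        y = 3.0 * x + rng.gauss(0.0, 1.0)  # toy data: true slope 3.0 plus noise
        total += (w * x - y) * x
    return total / batch_size


def gradient_spread(batch_size, trials=2000, seed=0):
    """Standard deviation of the mini-batch gradient across many random batches."""
    rng = random.Random(seed)
    grads = [batch_gradient(rng, 0.0, batch_size) for _ in range(trials)]
    return statistics.stdev(grads)


spread_8 = gradient_spread(8)
spread_32 = gradient_spread(32)
spread_64 = gradient_spread(64)

for size, spread in [(8, spread_8), (32, spread_32), (64, spread_64)]:
    print(f"batch size {size:>2}: gradient std = {spread:.3f}")
```

On this toy problem the spread shrinks roughly with the square root of the batch size, so going from 8 to 32 should about halve the noise. A real network will not follow this exactly, but the trend is the same.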


Use the GradientAccum callback to accumulate gradients. Just remember that it only accumulates gradients: batch normalization layers still compute their statistics on each batch of 8, so if your model uses them, a batch size of 8 could still be too low. You can swap the batch norm layers for another type of normalization, such as instance norm, or try to increase your actual batch size using mixed precision training, in-place operations, or a smaller network.
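
For anyone unsure what accumulating gradients actually does, here is a minimal sketch in plain Python. It is not the callback's implementation, just the idea on an assumed toy one-parameter regression, with hypothetical helpers `mean_gradient` and `accumulated_gradient`. Four micro-batches of 8 are accumulated and compared against one real batch of 32.

```python
import random


def mean_gradient(w, batch):
    """Gradient of the mean of 0.5 * (w*x - y)**2 over the batch, w.r.t. w."""
    return sum((w * x - y) * x for x, y in batch) / len(batch)


def accumulated_gradient(w, batch, micro_batch_size):
    """Sum micro-batch gradients, each scaled by 1 / number of micro-batches.

    Assumes len(batch) is a multiple of micro_batch_size.
    """
    n_micro = len(batch) // micro_batch_size
    grad = 0.0
    for i in range(n_micro):
        start = i * micro_batch_size
        micro = batch[start:start + micro_batch_size]
        grad += mean_gradient(w, micro) / n_micro  # accumulate, no weight update yet
    return grad  # a single optimizer step would use this value


# Toy data: 32 samples with true slope 3.0 plus noise.
rng = random.Random(0)
data = []
for _ in range(32):
    x = rng.gauss(0.0, 1.0)
    data.append((x, 3.0 * x + rng.gauss(0.0, 1.0)))

full = mean_gradient(0.0, data)                               # one batch of 32
accumulated = accumulated_gradient(0.0, data, micro_batch_size=8)  # 4 batches of 8

print(f"full batch:  {full:.6f}")
print(f"accumulated: {accumulated:.6f}")
```

The two gradients match up to floating-point rounding, which is why accumulation helps with gradient noise. Anything that depends on the contents of each forward pass, like batch norm statistics, still only sees 8 samples at a time.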
