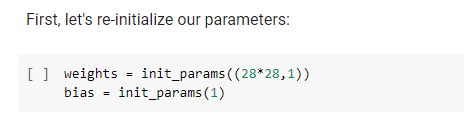

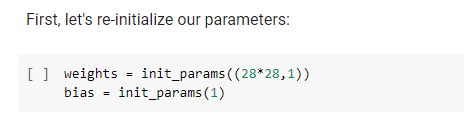

In the “Putting It All Together” part of Chapter 4, I wonder why the weights and bias don’t have requires_grad_() called on them, yet gradients can still be calculated from the loss. Can someone explain this to me?

Hi, they do have it. Check the definition of init_params a few cells above:

def init_params(size, std=1.0): return (torch.randn(size)*std).requires_grad_()
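If you want to check this yourself, here is a minimal sketch. The init_params definition is the one quoted above; the random batch `x`, the targets `y`, and the simple squared-error loss are just stand-ins I made up for illustration, not the chapter's actual data or loss function.

```python
import torch

def init_params(size, std=1.0):
    # requires_grad_() is called here, so every tensor this returns is tracked by autograd
    return (torch.randn(size) * std).requires_grad_()

weights = init_params((28 * 28, 1))
bias = init_params(1)

# Both flags are already set, with no extra call needed at the use site
print(weights.requires_grad, bias.requires_grad)

# Hypothetical stand-in batch: 4 flattened 28x28 "images" and 4 binary targets
x = torch.randn(4, 28 * 28)
y = torch.tensor([1.0, 0.0, 1.0, 0.0]).unsqueeze(1)

preds = x @ weights + bias
loss = ((preds.sigmoid() - y) ** 2).mean()  # illustrative loss only
loss.backward()

# Gradients are populated because the parameters were created with requires_grad_()
print(weights.grad.shape, bias.grad.shape)
```

So the call is not missing, it is just tucked inside the helper that creates the parameters.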