I have trained a ResNet50 model on 80,000+ images at 576px resolution (resized to 256px). Data loading caused some problems, but otherwise training went smoothly.

However, I cannot plot a confusion matrix. The Colab runtime crashes every time, with a message saying that it ran out of RAM.
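For a sense of scale, here is a rough estimate of how much RAM the 80,000 images would take if they were all held at once as float32 tensors of shape 3×256×256. The 20% validation split is a hypothetical figure, not something stated in this post, and the estimate ignores predictions, losses, and temporary copies, so it is a lower bound. It matters if the interpretation step keeps every input image in memory (some fastai 2 releases did this by calling `get_preds(..., with_input=True)` inside `ClassificationInterpretation.from_learner`; that is an assumption worth checking against the installed version's source), whereas training only processes one batch at a time.

```python
def tensor_bytes(n_images: int, channels: int = 3, height: int = 256,
                 width: int = 256, bytes_per_value: int = 4) -> int:
    """Bytes needed to hold n_images float32 image tensors in memory at once."""
    return n_images * channels * height * width * bytes_per_value

GIB = 1024 ** 3

total = 80_000
valid = int(total * 0.2)  # hypothetical 20% validation split (assumption)

for label, n in [("all images", total), ("validation split", valid)]:
    print(f"{label}: {tensor_bytes(n) / GIB:.1f} GiB")
# all images: 58.6 GiB
# validation split: 11.7 GiB
```

Each 256×256 RGB image is 0.75 MiB as float32, so even the validation split alone is on the order of the RAM available on a Colab instance.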

I create the Learner object and load the weights from a `.pth` file.

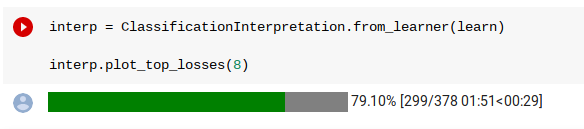

And then I run:

```python
interp = ClassificationInterpretation.from_learner(learn)
interp.plot_top_losses(8)
```

This cell never runs successfully.

It always gets stuck at about 79%. And yes, I am using a “High-RAM” instance.

Until the crash, everything looks normal, just like any other time I have plotted a confusion matrix.

Since Colab had no problems during training but crashes while plotting the confusion matrix, I think this is a fastai problem rather than a Colab one. Any advice on how to work around it?
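One possible workaround, sketched under the assumption that the crash comes from holding every validation image in RAM: a confusion matrix only needs the true and predicted class index for each sample, so it can be accumulated batch by batch while the images are discarded. The function name `confusion_matrix_streaming` is hypothetical; it is plain Python, not a fastai API.

```python
from typing import Iterable, List, Sequence, Tuple

def confusion_matrix_streaming(
    batches: Iterable[Tuple[Sequence[int], Sequence[int]]],
    n_classes: int,
) -> List[List[int]]:
    """Accumulate a confusion matrix one batch at a time.

    Each batch is a pair (targets, predicted_classes) of class indices.
    Only the n_classes x n_classes count table is kept, never the images.
    Rows are actual classes, columns are predicted classes.
    """
    cm = [[0] * n_classes for _ in range(n_classes)]
    for targets, preds in batches:
        for t, p in zip(targets, preds):
            cm[t][p] += 1
    return cm

# Toy example with 3 classes and two batches of 3 samples each.
batches = [([0, 1, 2], [0, 2, 2]), ([1, 1, 0], [1, 1, 0])]
cm = confusion_matrix_streaming(batches, n_classes=3)
print(cm)  # [[2, 0, 0], [0, 2, 1], [0, 0, 1]]
```

With fastai, the per-batch pairs could come from iterating over the validation DataLoader and taking the `argmax` of the model outputs. Alternatively, `learn.get_preds()` does not return the inputs by default (`with_input=False`), so its predictions and targets are compact enough to pass straight to a confusion-matrix routine.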