Occasionally it’s useful to apply a transform only to the inputs (x) and not to the outputs (y). For example, I would like to cut squares out of an image and have a model try to fill in the missing pieces.

My inputs would be the original image minus the cut-out portions.

My outputs would be the original image.
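To make the desired pairing concrete, here is a minimal sketch in plain Python (no fastai involved). The helper names `cut_out_square` and `make_pair` are hypothetical and only for illustration, and the "image" is just a nested list of pixel values:

```python
import random

def cut_out_square(image, top, left, size, fill=0):
    """Return a copy of `image` (a list of pixel rows) with a size x size square set to `fill`."""
    result = [row[:] for row in image]  # copy rows so the original stays intact
    for r in range(top, min(top + size, len(result))):
        for c in range(left, min(left + size, len(result[r]))):
            result[r][c] = fill
    return result

def make_pair(image, size=2, rng=random):
    """Build an (x, y) pair: x has one random square removed, y is the untouched original.

    Assumes `size` is no larger than the image height or width.
    """
    height, width = len(image), len(image[0])
    top = rng.randrange(0, height - size + 1)
    left = rng.randrange(0, width - size + 1)
    x = cut_out_square(image, top, left, size)
    return x, image

# A 4x4 single-channel "image" where every pixel is 1
image = [[1] * 4 for _ in range(4)]
x, y = make_pair(image, size=2, rng=random.Random(0))
```

Here `x` has a 2x2 hole and `y` is unchanged, which is the behaviour I want from the transform pipeline.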

I’ve created my databunch as follows where RandomCutout randomly drops pixels in an image:

    databunch = data.databunch(pascal_path/'train',
                               bs=8,
                               item_tfms=[RandomResizedCrop(460, min_scale=0.75), RandomCutout()],
                               batch_tfms=[*aug_transforms(size=224, max_warp=0, max_rotate=0)])

    # HACK: We're predicting pixel values, so we're just going to predict an output for each RGB channel
    databunch.vocab = ['R', 'G', 'B']

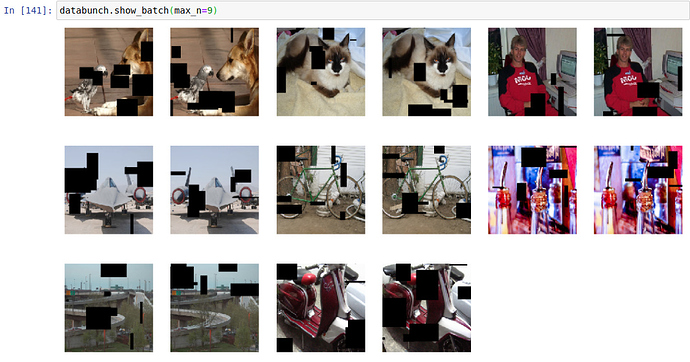

However, RandomCutout ends up being applied to both the x and y images, so I end up with batches that look like:

In fastai v1 we were able to restrict transforms by using the tfm_y=False parameter. Is there a corresponding approach in fastai v2?

See tfm_y here: https://docs.fast.ai/data_block.html#LabelList.transform
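One direction I've been considering is to give the input its own type and have the transform act only on that type. As far as I understand, fastai v2 transforms choose their behaviour from the type annotation on `encodes`, but I haven't confirmed that this is the intended way to do it. The sketch below is plain Python, not fastai code, and `InputImage`, `TargetImage` and `InputOnlyCutout` are hypothetical names used only to show the idea:

```python
import random

class InputImage(list):
    """Hypothetical marker type for the model input (x)."""

class TargetImage(list):
    """Hypothetical marker type for the target (y)."""

class InputOnlyCutout:
    """Zero out one random pixel per call, but only on InputImage items."""

    def __init__(self, seed=None):
        self.rng = random.Random(seed)

    def __call__(self, item):
        # Anything that is not an InputImage passes through untouched
        if not isinstance(item, InputImage):
            return item
        out = InputImage(row[:] for row in item)  # copy before modifying
        r = self.rng.randrange(len(out))
        c = self.rng.randrange(len(out[r]))
        out[r][c] = 0
        return out

pixels = [[1, 1], [1, 1]]
tfm = InputOnlyCutout(seed=0)
x = tfm(InputImage(row[:] for row in pixels))   # one pixel dropped
y = tfm(TargetImage(row[:] for row in pixels))  # returned as-is
```

If v2 has something along these lines built in, that would replace what tfm_y=False did for me in v1.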