In lesson 6, where we are trying to predict the 4th character from the previous 3 characters, we create 3 separate embedding layers with the same dimensions. Now, take the character ‘a’ in the words able, lazy, and dial, where it appears at different positions. Will ‘a’ have a different embedding depending on its position (1st, 2nd, or 3rd) in the input sequence?

Are you referring to Char3Model from https://github.com/fastai/fastai/blob/master/courses/dl1/lesson6-rnn.ipynb? In that case, no, ‘a’ will have the same embedding for all three positions: there’s only a single embedding layer, self.e = nn.Embedding(vocab_size, n_fac), which we use three times.
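To make the difference concrete, here is a toy pure-Python sketch. It is not the notebook code: plain dicts of random vectors stand in for an embedding layer, and `make_table`, `shared`, and `per_position` are made-up names for illustration. With one shared table, ‘a’ maps to the same vector wherever it appears; with one table per position, it would not.

```python
import random

def make_table(vocab, n_fac, rng):
    """Toy stand-in for an embedding layer: one random vector per character."""
    return {ch: [rng.uniform(-1, 1) for _ in range(n_fac)] for ch in vocab}

rng = random.Random(0)
vocab = sorted(set("ablezydi"))  # characters of able, lazy, dial
n_fac = 4

# Case 1: a single table reused for all three input positions.
shared = make_table(vocab, n_fac, rng)

# Case 2: a separate table for each input position.
per_position = [make_table(vocab, n_fac, rng) for _ in range(3)]

def embed_shared(chars):
    return [shared[ch] for ch in chars]

def embed_per_position(chars):
    return [per_position[i][ch] for i, ch in enumerate(chars)]

# First three characters of able, lazy, dial: 'a' is at position 0, 1, 2.
words = ["abl", "laz", "dia"]
shared_a = [embed_shared(w)[w.index("a")] for w in words]
separate_a = [embed_per_position(w)[w.index("a")] for w in words]

print("shared table, same vector for 'a':", shared_a[0] == shared_a[1] == shared_a[2])
print("per-position tables, same vector for 'a':", separate_a[0] == separate_a[1])
```

In the shared case the lookup never sees the position index, so there is nothing that could make the vector for ‘a’ position-dependent.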

And I think in general, the “positionality” you’re talking about is handled by the structure of the RNN itself (via the hidden state that gets carried from one step to the next), not by the embeddings (which are just lookup tables).
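A tiny sketch of that idea, assuming a generic scalar tanh recurrence rather than the exact update used in the notebook. The weights `w_in` and `w_h` and the one-number “embeddings” in `emb` are hypothetical values picked for illustration. Each character has a single embedding, yet feeding the same characters in a different order leaves a different final hidden state.

```python
import math

# One shared scalar "embedding" per character (hypothetical values).
emb = {"a": 0.5, "b": -1.0, "c": 2.0}

# Hypothetical fixed weights for the input and the previous hidden state.
w_in, w_h = 0.8, 0.6

def final_hidden(chars):
    """Run a scalar tanh recurrence over chars and return the last hidden state."""
    h = 0.0
    for ch in chars:
        # The new state depends on the old state, so order matters.
        h = math.tanh(w_h * h + w_in * emb[ch])
    return h

h_abc = final_hidden("abc")
h_cba = final_hidden("cba")
print(h_abc, h_cba)
```

The embeddings are identical in both runs; only the order in which they are folded into `h` changes, and that is where the position information lives.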

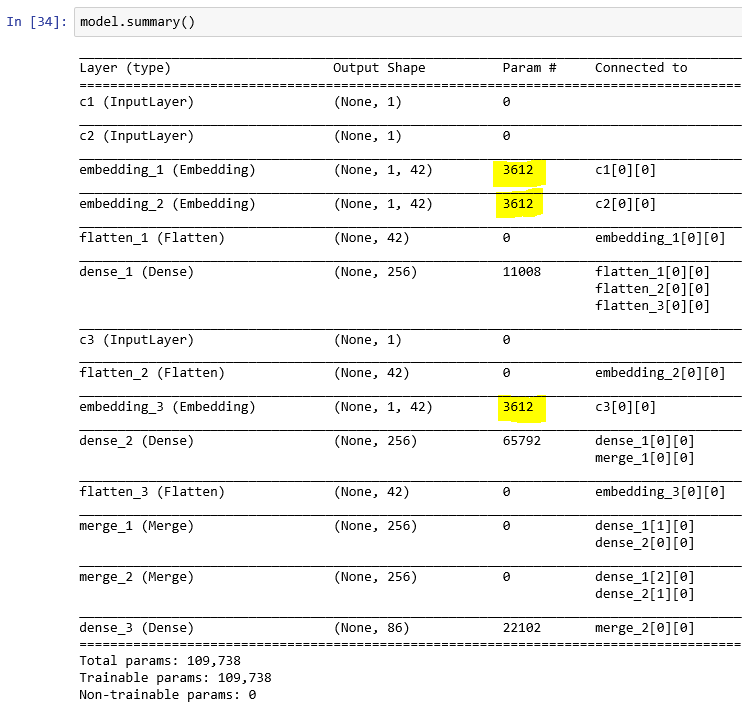

I am referring to the “3 char model” in this one: https://github.com/fastai/courses/blob/master/deeplearning1/nbs/lesson6.ipynb

Ah, interesting. I haven’t looked at that version of the course, but yeah, it does look like there are separate embedding layers for each character position. Huh, not sure why it works that way, especially since a little further down in the “Our first RNN with keras!” section, there’s only one embedding layer? I’m not very familiar with Keras, so maybe I’m misreading something.