This is a topic for people interested in calibrating their networks to get better probability estimates in classification.

TL;DR: “Neural networks tend to output overconfident probabilities. Temperature scaling is a post-processing method that fixes it. Can we do temperature scaling within the fastAI framework?”

Motivation

Deep networks are often overconfident in their predictions. Temperature scaling (TS) works very well for this problem, and it can be applied as a simple post-processing step. This paper describes the problem of miscalibration, as well as the TS method, in detail:

There’s also this video where one of the authors describes the contents of the paper really clearly:

https://vimeo.com/238242536
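For readers new to TS: as I understand the paper, the trained network is left untouched. Its logits z are divided by a single scalar T > 0 before the softmax, i.e. softmax(z / T). A minimal plain-Python sketch of that operation (the function name and the logits are made up for illustration):

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Softmax of logits / temperature, for temperature T > 0.

    T = 1 is the ordinary softmax; T > 1 flattens the distribution
    (lower confidence) and T < 1 sharpens it. Dividing every logit by
    the same positive T never changes which class scores highest.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for a 3-class example.
logits = [4.0, 1.0, 0.5]
for t in (1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Because the predicted class never changes, TS leaves accuracy exactly as it was and only adjusts the confidence attached to each prediction.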

Implementation of TS in fastAI

I’m not sure how to perform TS on a pre-trained model within the fastAI framework. There is PyTorch code to set the temperature of a pre-trained model here, which is a good start:

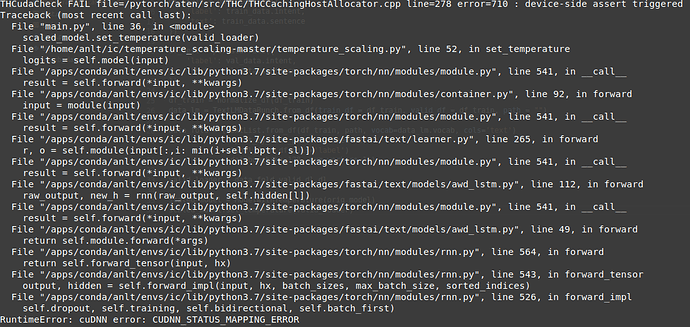

Here’s the code to run calibration on a pre-trained model:

```python
from temperature_scaling import ModelWithTemperature

orig_model = ... # create an uncalibrated model somehow
valid_loader = ... # Create a DataLoader from the SAME VALIDATION SET used to train orig_model

scaled_model = ModelWithTemperature(orig_model)
scaled_model.set_temperature(valid_loader)
```
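As I understand the paper, the calibration step does not retrain the network. It runs the frozen model over the validation set, collects the logits, and picks the single T that minimises the negative log-likelihood (NLL) of the true labels. That is why held-out validation data is needed. The sketch below shows the idea in plain Python on precomputed logits. It is not the repo's code: the function names are hypothetical, and it swaps in a golden-section search over beta = 1/T (the NLL is convex in beta) in place of whatever optimizer the repo uses.

```python
import math

def nll_at_temperature(logits_list, labels, temperature):
    """Mean negative log-likelihood of the true labels after dividing logits by T."""
    total = 0.0
    for logits, y in zip(logits_list, labels):
        scaled = [z / temperature for z in logits]
        m = max(scaled)
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in scaled))
        total += log_sum_exp - scaled[y]  # equals -log softmax(scaled)[y]
    return total / len(labels)

def fit_temperature(logits_list, labels, lo=0.05, hi=20.0, iters=100):
    """Return the T in [lo, hi] that minimises validation NLL.

    The NLL is convex in beta = 1/T, so a golden-section search over
    beta in [1/hi, 1/lo] converges to the minimiser.
    """
    inv_phi = (math.sqrt(5) - 1) / 2
    a, b = 1.0 / hi, 1.0 / lo

    def f(beta):
        return nll_at_temperature(logits_list, labels, 1.0 / beta)

    c, d = b - inv_phi * (b - a), a + inv_phi * (b - a)
    fc, fd = f(c), f(d)
    for _ in range(iters):
        if fc < fd:  # minimum lies in [a, d]
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = f(c)
        else:        # minimum lies in [c, b]
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = f(d)
    return 1.0 / ((a + b) / 2)
```

As a sanity check, if a model always outputs logits [3, 0] but is right only 75% of the time, the fitted T makes the top-class probability 0.75, i.e. T = 3 / ln 3 (about 2.73), which softens the overconfident 0.95 that T = 1 gives.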

However, I’m not sure how to obtain the required valid_loader in the fastAI framework (without going ‘lower-level’ into full-on PyTorch).

So far I have been using a standard high-level training setup in fastAI:

```python
from fastai.vision import *  # fastai v1 (provides ImageDataBunch, cnn_learner)

# Load data
data = ImageDataBunch.from_folder(path)

# Train
learn = cnn_learner(data, models.resnet34, metrics=accuracy)
learn.fit_one_cycle(10)
learn.save('model1')
```

As far as I can tell, the saved model1 is what we need as orig_model for TS. Can anyone think of a way to obtain valid_loader?
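One possible starting point, untested on my side: in fastai v1 the trained PyTorch `nn.Module` is `learn.model` (so rather than the saved file itself, you reload model1 into a Learner), and the DataBunch exposes its validation loader as `learn.data.valid_dl`, which yields `(input, label)` batches with the same transforms used during training. The helper name `calibrate_learner` is hypothetical, and the class is passed in so the helper carries no hard imports. I am also assuming a GPU, since the repo's `set_temperature` appears to move everything to CUDA.

```python
def calibrate_learner(learn, model_with_temperature_cls):
    """Wrap a trained fastai v1 Learner's network and fit its temperature.

    learn: a fastai v1 Learner (e.g. from cnn_learner) with trained weights loaded.
    model_with_temperature_cls: ModelWithTemperature from the temperature_scaling repo.
    """
    # learn.model is the underlying PyTorch nn.Module; put it in inference
    # mode so dropout and batch-norm behave as they do at prediction time.
    learn.model.eval()
    scaled_model = model_with_temperature_cls(learn.model)
    # learn.data is the DataBunch; valid_dl iterates over the validation split
    # as (input, label) batches, which is what set_temperature loops over.
    scaled_model.set_temperature(learn.data.valid_dl)
    return scaled_model

# Example usage (assumes fastai v1, the temperature_scaling repo and a GPU):
# from fastai.vision import *
# from temperature_scaling import ModelWithTemperature
# learn = cnn_learner(data, models.resnet34, metrics=accuracy)
# learn.load('model1')
# scaled_model = calibrate_learner(learn, ModelWithTemperature)
```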

Thank you for taking the time to read this!