Hi there,

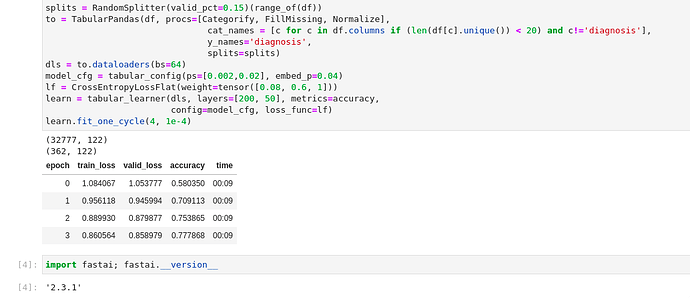

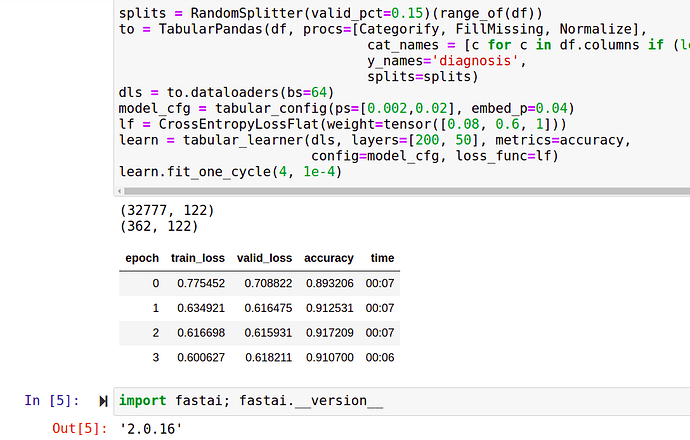

I’m training a tabular learner on a fairly simple dataset (I can’t share it because it’s medical data). On fastai v2.0.16 (and an older version I tried) it trains really well, getting >90% in an epoch or two. The exact same code on a system with fastai v2.3 does significantly worse, maxing out at 77%. The gap is consistent (not just a lucky split) and I can’t figure it out. Have there been changes to the way dropout is handled? I used to pass `ps` and `emb_drop` as arguments to `tabular_learner`; then on 2.0.16 I needed `config=tabular_config(ps=[0.002,0.02], embed_p=0.04)`. In the latest docs I can’t find any information about dropout at all.

The layer ordering was changed based on some experiments folks did. IIRC there should be a `_first` param in the config you can set; set it to the opposite of its default and that should help. (I can give a better answer when I’m not on mobile, but it’s a place for you to start looking.)


Thanks (belatedly) for the tip. I found the `lin_first` param that defaults to `True` in `tabular_config`. Setting it to `False` brought things back in line with my experiments on older versions. I’m very glad you had that bit of info about the ordering change. Thanks again.
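To see what the flag actually changes, here is a minimal, dependency-free sketch of the ordering inside one hidden block. It assumes the block follows the logic of fastai v2's `LinBnDrop` as I understand it: BatchNorm and Dropout sit on one side of the `Linear` layer, and `lin_first` picks which side. The helper `lin_bn_drop_order` is hypothetical and only returns layer names; it does not build real modules.

```python
def lin_bn_drop_order(bn=True, p=0.0, act=True, lin_first=False):
    """Return the layer order of one hidden block as a list of names.

    Hypothetical helper. Assumption: mirrors the ordering logic of
    fastai v2's LinBnDrop, where lin_first chooses whether BatchNorm/Dropout
    come after the Linear layer (True) or before it (False).
    """
    norm_drop = []
    if bn:
        norm_drop.append("BatchNorm1d")
    if p != 0:
        norm_drop.append(f"Dropout(p={p})")
    lin = ["Linear"]
    if act:
        lin.append("ReLU")
    # lin_first=True: Linear (+ activation) first, then BatchNorm/Dropout.
    # lin_first=False: BatchNorm/Dropout first, then Linear (+ activation).
    return lin + norm_drop if lin_first else norm_drop + lin


for flag in (True, False):
    print(f"lin_first={flag}:", " -> ".join(lin_bn_drop_order(p=0.02, lin_first=flag)))
```

With `lin_first=False`, dropout is applied to each block's inputs rather than its outputs, which is the older ordering. In fastai that corresponds to passing something like `config=tabular_config(ps=[0.002,0.02], embed_p=0.04, lin_first=False)` to `tabular_learner`.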
