I am developing a device based on the NVIDIA Jetson Nano that runs inference on two camera streams. The device needs to classify each captured frame as “Positive” or “Negative”. I am getting great results on test data collected in my lab, using FastAI on the Nano. My problem is that when I deploy a device in a new lab for testing, accuracy tends to drop quite a bit. The new settings are always vastly different (industrial) environments, so the model sometimes has a hard time adapting.

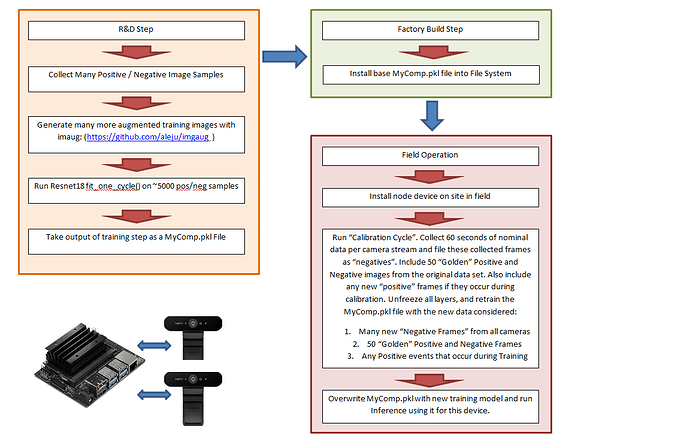

I tried to address this by letting the device apply transfer learning on top of the original model it shipped with, using new data captured in the field after installation. It seems to work better, but I wonder if I am biasing my model too much. I am also not sure how much data I should capture in the new setting to retrain the model with (I arbitrarily chose 60 seconds of 9 Hz captures).
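To make the "am I biasing the model too much" question measurable, one option is to compare accuracy on a held-out lab set and a held-out field set before and after the on-device fine-tune. The following is only a rough sketch in plain Python, not my actual implementation: the helper names (`frames_captured`, `split_by_time`, `forgetting_report`) and the `max_lab_drop` threshold of 0.02 are hypothetical, and it assumes the 9 Hz rate applies per stream. The field capture is split by time rather than at random, on the assumption that consecutive 9 Hz frames are nearly identical and a random split would leak near-duplicates into the validation set.

```python
from typing import Dict, Sequence, Tuple

CAPTURE_SECONDS = 60  # capture duration from the post
CAPTURE_HZ = 9        # capture rate from the post (assumed per stream)


def frames_captured(seconds: float, hz: float) -> int:
    """Number of frames produced by one capture session on one stream."""
    return int(seconds * hz)


def split_by_time(frames: Sequence, holdout_fraction: float = 0.2) -> Tuple[list, list]:
    """Hold out the LAST contiguous chunk of a time-ordered capture.

    A contiguous split avoids putting adjacent, near-identical frames
    on both sides of the train/validation boundary.
    """
    holdout = round(len(frames) * holdout_fraction)
    cut = len(frames) - holdout
    return list(frames[:cut]), list(frames[cut:])


def accuracy(predictions: Sequence, labels: Sequence) -> float:
    """Fraction of predictions that match the labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def forgetting_report(
    lab_acc_before: float,
    lab_acc_after: float,
    field_acc_before: float,
    field_acc_after: float,
    max_lab_drop: float = 0.02,  # hypothetical tolerance, tune per application
) -> Dict[str, object]:
    """Accept the fine-tuned model only if it helps in the field
    without losing more than `max_lab_drop` accuracy on lab data."""
    lab_drop = lab_acc_before - lab_acc_after
    field_gain = field_acc_after - field_acc_before
    return {
        "lab_drop": lab_drop,
        "field_gain": field_gain,
        "accept": lab_drop <= max_lab_drop and field_gain > 0,
    }


if __name__ == "__main__":
    n = frames_captured(CAPTURE_SECONDS, CAPTURE_HZ)
    train, valid = split_by_time(list(range(n)))
    print(n, len(train), len(valid))
    print(forgetting_report(0.95, 0.94, 0.70, 0.88))
```

The accuracy figures in the last line are placeholders, not measured results. If the report rejects a fine-tuned model, the device could fall back to the shipped model, and that is also a signal that the field capture may be too small or too narrow.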

Can anyone provide feedback on how I’ve implemented this?

Does anyone have general advice on how to help the model perform better in an unknown environment?

Thank you very much for the feedback.

Here is what I have implemented: