If you look under Community AMIs (navbar on the left) and search for ami-acac0ad5, it should come up.

Hi @jeremy, just checking whether the plan to provide an AMI is still on? Otherwise, I will try this or an Amazon Ubuntu AMI. I'm using Crestle now to play around with the first lesson's homework, but I'm looking to shift to Amazon.

Arjun

I updated the script: its previous version was installing tensorflow instead of tensorflow-gpu, which resulted in Keras not using the GPU.
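A quick way to see which TensorFlow package actually got installed is to list the installed distributions. This is a minimal sketch using only the standard library's `importlib.metadata` (Python 3.8+); it is not part of the install script itself. Note that for the TensorFlow 1.x releases of this era `tensorflow-gpu` was the separate GPU build, whereas from TensorFlow 2.1 onward the plain `tensorflow` package includes GPU support.

```python
from importlib import metadata


def tensorflow_distributions():
    """Return the lower-cased names of installed distributions starting with 'tensorflow'."""
    names = set()
    for dist in metadata.distributions():
        # .get() returns None instead of raising if the Name field is missing
        name = (dist.metadata.get("Name") or "").lower()
        if name.startswith("tensorflow"):
            names.add(name)
    return names


if __name__ == "__main__":
    found = tensorflow_distributions()
    if "tensorflow-gpu" in found:
        print("tensorflow-gpu is installed")
    elif found:
        print("tensorflow-gpu is not installed; found:", sorted(found))
    else:
        print("No TensorFlow distribution found")
```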

PyTorch is fine, though, as far as I can tell:

```
In [2]: torch.cuda.current_device()
Out[2]: 0

In [3]: torch.cuda.device(0)
Out[3]: <torch.cuda.device at 0x7f2132913c50>

In [4]: torch.cuda.device_count()
Out[4]: 1

In [5]: torch.cuda.get_device_name(0)
Out[5]: 'Tesla K80'
```
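The same checks can be bundled into a small script that reports every visible GPU. This is a hedged sketch that only uses the `torch.cuda` calls from the session above plus `torch.cuda.is_available()`; it returns `None` when PyTorch is not importable so it can run in any environment.

```python
def list_cuda_devices():
    """Return [(index, name), ...] for visible CUDA devices, [] if none, None if PyTorch is missing."""
    try:
        import torch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return []
    return [(i, torch.cuda.get_device_name(i)) for i in range(torch.cuda.device_count())]


if __name__ == "__main__":
    devices = list_cuda_devices()
    if devices is None:
        print("PyTorch is not installed")
    elif not devices:
        print("PyTorch cannot see a CUDA device")
    else:
        for index, name in devices:
            print(f"cuda:{index} -> {name}")
```

On a p2.xlarge this would be expected to list a single Tesla K80.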

Yup.

I used your scripts. I had previously used the scripts from Part 1 v1 of this course as well.

Thanks @radek, pretty great stuff.

I used the script to set up a p3.2xlarge instance in us-west-2 and managed to run through all of notebook 1. I created a public AMI in us-west-2, ami-d55398ad, for anyone who wishes to use it.

Will Crestle work well for the entire course, or should we switch to AWS?

AWS keeps billing me for stuff I can’t find, so I’m considering terminating my account.

Crestle will be fine for the whole course.

It turns out that either PyTorch doesn't yet support CUDA 9 / cuDNN 7, or I was unable to find a way to set everything up. The notebooks ran, but training was much slower than on CUDA 8.

I updated the installation script so that it installs CUDA 8 and installs PyTorch using the instructions on pytorch.org. The script also installs everything needed to run the notebooks. I tested it with the lesson1 notebook, and both the imports and training seem okay.
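To confirm which CUDA version the installed PyTorch binary was built against (for example, that the script really pulled a CUDA 8 build), `torch.version.cuda` can be inspected. A minimal sketch: it reports the toolkit version the binary was compiled with, not the driver installed on the machine, and is `None` for CPU-only builds.

```python
def torch_build_info():
    """Return PyTorch's version and the CUDA version it was built with, or None if PyTorch is missing."""
    try:
        import torch
    except ImportError:
        return None
    return {
        "torch": torch.__version__,
        # CUDA toolkit version string of the build; None for CPU-only builds
        "cuda": torch.version.cuda,
    }


if __name__ == "__main__":
    print(torch_build_info())
```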

One good use case for this script is installation on a local box (this is how I plan to use it).

I also published a new AMI for use on p2.xlarge (with CUDA 9 gone, it will no longer work on p3.2xlarge); however, I would suggest using the official AMI for this course provided by @jeremy.

I checked on the PyTorch Slack and got feedback that everything should work fine with CUDA 9, cuDNN, and the V100. What problems are you seeing?

Everything compiles and works, but there are a lot of deprecation warnings breaking the fastai UI (not a big deal at this point; they can be disabled or we could fix them). The real problem is that, for reasons unknown to me, training the initial model in lesson1 takes around twice as long as with CUDA 8 and the accompanying PyTorch binaries from conda.

Maybe it is not CUDA 9 but the cuDNN 7.0.3 I was using.

On the PyTorch forum there is some information about the work in progress on running PyTorch on the V100 with CUDA 9, although there is definitely no need to use that version for the course.

I am thinking that maybe the issue is that we are not using cuDNN (I didn't check this), since I think the slowness might be in the convolutional part.
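One way to test that hypothesis is to ask PyTorch whether cuDNN is available and enabled. This is a sketch using attributes of `torch.backends.cudnn`; whether cuDNN actually explains the slowdown remains an open question here. Separately, setting `torch.backends.cudnn.benchmark = True` lets cuDNN autotune convolution algorithms, which tends to help when input sizes stay fixed between batches, but that is an independent tweak, not a confirmed fix.

```python
def cudnn_status():
    """Report cuDNN availability and settings as seen by PyTorch, or None if PyTorch is missing."""
    try:
        import torch
    except ImportError:
        return None
    cudnn = torch.backends.cudnn
    return {
        "available": cudnn.is_available(),
        "enabled": cudnn.enabled,      # False means convolutions bypass cuDNN
        "version": cudnn.version(),    # None if cuDNN cannot be loaded
        "benchmark": cudnn.benchmark,  # whether convolution autotuning is on
    }


if __name__ == "__main__":
    print(cudnn_status())
```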

Anyhow, the point you make is exactly what I was thinking. I could continue to sink time into getting this to work, but it's better spent on the actual course work.

So neat that the PyTorch devs provide us with all the necessary binaries for running this via conda with CUDA 8.

@radek Actually, I was running the lessons on CUDA 9/cuDNN 7 on a p3.2xlarge. Apart from the deprecation warnings, I did not notice any particular slowness, but I haven't had a chance to compare it with CUDA 8 yet. Where (in which code) did you notice the biggest slowdown? I am curious to try the same and compare timings.

I noticed a difference when someone posted a screenshot to the forums with the timings of precomputing the activations for the first model. I think on CUDA 9/cuDNN 7 the training took twice as long. I then switched to the CUDA 8/PyTorch binaries combo and got the same results as the person in the screenshot.

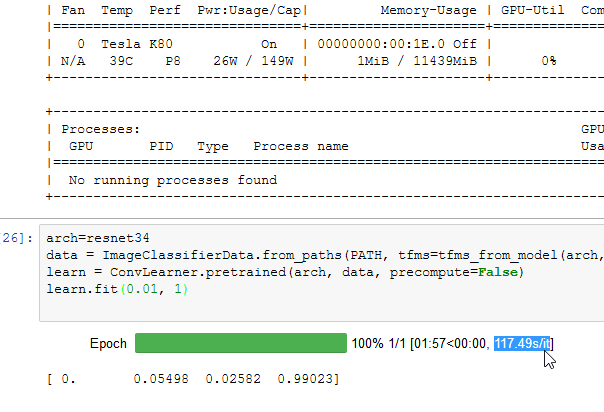

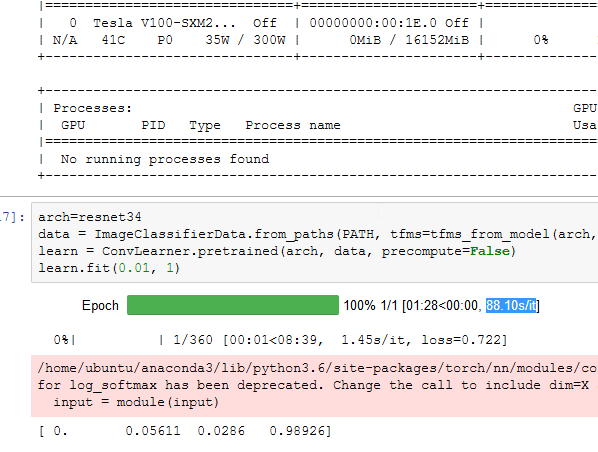

I think it was this screenshot that was helpful
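To compare timings between the CUDA 8 and CUDA 9 setups, it helps to time the same step the same way on both machines. The helper below is a minimal, library-agnostic sketch: it takes the median of several runs after a warm-up. Because CUDA kernels launch asynchronously, a GPU workload should pass a synchronization hook such as `torch.cuda.synchronize`; otherwise the timer may stop before the GPU has finished.

```python
import statistics
import time
from typing import Callable, Optional


def time_call(fn: Callable[[], object], repeats: int = 5, warmup: int = 1,
              sync: Optional[Callable[[], None]] = None) -> float:
    """Return the median wall-clock seconds of fn() over `repeats` timed runs."""
    if repeats < 1:
        raise ValueError("repeats must be >= 1")
    # Warm-up runs absorb one-off costs (allocation, cuDNN autotuning, caching)
    for _ in range(warmup):
        fn()
        if sync is not None:
            sync()
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        if sync is not None:
            sync()  # wait for queued GPU work before stopping the clock
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)
```

For example, `time_call(lambda: model(batch), sync=torch.cuda.synchronize)`, where `model` and `batch` are hypothetical placeholders for whatever step is being compared (such as the activation precompute in lesson 1).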

Yeah, I got slightly better results on the V100 over a longer run, but it still isn't worth the money.
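Whether a faster instance is worth the money comes down to cost per run: hourly price times run time. Equivalently, the faster instance only pays off if its speedup (slow time / fast time) exceeds its price ratio (fast price / slow price). The sketch below encodes that arithmetic; the prices and timings in the usage example are hypothetical placeholders, not actual AWS rates or measurements from the screenshots.

```python
def cost_per_run(hourly_price_usd: float, run_seconds: float) -> float:
    """Cost in USD of one run billed at an hourly rate."""
    return hourly_price_usd * run_seconds / 3600.0


def faster_instance_pays_off(slow_price: float, slow_seconds: float,
                             fast_price: float, fast_seconds: float) -> bool:
    """True if the faster (pricier) instance finishes the same run for less money."""
    return cost_per_run(fast_price, fast_seconds) < cost_per_run(slow_price, slow_seconds)


if __name__ == "__main__":
    # Hypothetical numbers: an instance 3x pricier per hour but only 2x faster.
    print(cost_per_run(1.0, 3600))                         # 1.0
    print(faster_instance_pays_off(1.0, 3600, 3.0, 1800))  # False
```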

this is p2.xlarge:

this is p3.2xlarge:

Hi, NVIDIA has built specialized software for leveraging the computing power of AWS EC2 P3 instances.

This is from the message they sent me, as I have an NVIDIA Developer account:

You can now access performance-engineered deep learning frameworks with NVIDIA GPU Cloud (NGC) and the newly announced Amazon EC2 P3 instances with NVIDIA Tesla V100 GPUs. With Tesla V100s, you can train over 10X faster than Kepler GPUs.

For more information, follow the link below and create a free account to use the tools:

Hi @radek,

could you please write a post on how to use your AMI with spot instances as a step-by-step process, like Jeremy did on the lesson 2 wiki (not fully detailed, but at least the steps): from choosing the AMI, through setting the configuration (if applicable), to launching and the final setup for Jupyter, and so on…

Thank you so much.

Hi @iskode - please find setup instructions in the original post of this thread under the last section (Install script with AWS Spot instances with persistent storage).

I just updated the howto and this is what the end result looks like now:

I think initially it would be a good idea to follow the howto step by step, but once you get the hang of how AWS works, it is very straightforward to replace the AMI you build in the howto with the official one from @jeremy. If you need help with that, please let me know. You can find the list of the official AMIs, broken down by region, in the Lesson 2 wiki.
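As a rough illustration of what swapping in a different AMI means when launching a spot instance from code, the sketch below only builds the keyword arguments for boto3's `EC2.Client.request_spot_instances`. It is not the approach used in the howto (which sets up persistent storage), and the key pair name, security group ID, max price, and AMI ID are hypothetical placeholders. Since official AMIs are region-specific, the AMI ID must match the region of the client that submits the request.

```python
def spot_request_kwargs(ami_id: str, instance_type: str = "p2.xlarge",
                        key_name: str = "my-key",                 # hypothetical key pair
                        security_group_id: str = "sg-00000000",   # hypothetical group
                        max_price: str = "0.50") -> dict:         # hypothetical max USD/hour
    """Build keyword arguments for boto3's ec2.request_spot_instances()."""
    if not ami_id.startswith("ami-"):
        raise ValueError(f"not an AMI id: {ami_id!r}")
    return {
        "SpotPrice": max_price,   # boto3 expects the price as a string
        "InstanceCount": 1,
        "Type": "one-time",
        "LaunchSpecification": {
            "ImageId": ami_id,    # the only field that changes when switching AMIs
            "InstanceType": instance_type,
            "KeyName": key_name,
            "SecurityGroupIds": [security_group_id],
        },
    }


if __name__ == "__main__":
    # Placeholder: substitute the official AMI ID for your region.
    kwargs = spot_request_kwargs("ami-xxxxxxxx")
    print(kwargs)
    # Submitting the request needs boto3 and AWS credentials:
    # import boto3
    # boto3.client("ec2", region_name="us-west-2").request_spot_instances(**kwargs)
```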

Is there a way to know how much of the free credit I have used in Amazon EC2?