Hi, I’m currently working on a project in which I’m trying to detect (segment) building footprints in aerial images and I already have a model that is working pretty well. For training the model, I have a dataset consisting of a number of images and their targets.

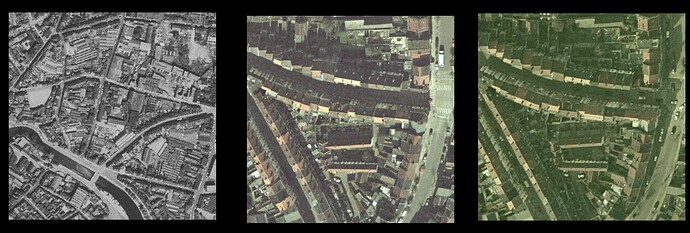

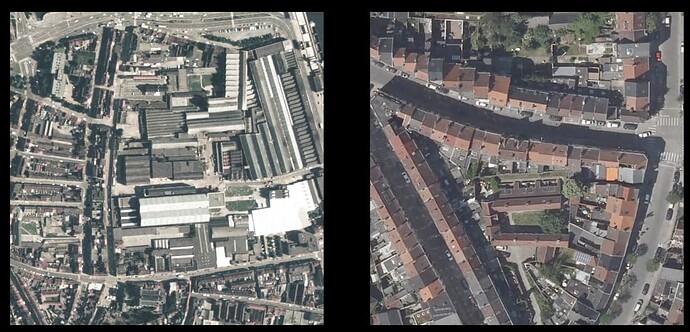

However, and this is where it gets a bit more interesting: I now want to apply this model to older aerial images of the same region for which I do not have the corresponding masks. These older images also have a different color distribution than the images in my training set & have a different resolution (meters/px). (see examples below)

I was hoping that by adding various augmentations to my training dataset, the model might be generic enough to work on these new datasets as well, but that does not seem to be the case. Transfer learning is an option, but without predefined masks I first have to create those manually, which would be quite a bit of work.

Are there any other possible solutions that I can take a look at?

Hello,

Augmentation should be beneficial; what policies are included in your data pipeline? Some I would recommend for your task are colour jittering, randomly grayscaling, slight Gaussian blurring, sporadically posterizing, adjusting the sharpness, and equalizing. Geometric transforms can be fruitful as well since you mention the meters/pixel distribution of the test set is different, particularly, random resized crop and affine transformations. You could experiment with different combinations and probabilities to see if these suggestions can be of help.

A more advanced potential solution would be to manually ensure your training dataset closely matches the tonal and colour distribution of the test set. That is, you could plot the image or colour histogram of your test set, and normalize the training data (similar to how ImageNet normalization is done, i.e., subtract and divide by some coefficients) so that its histogram would resemble that of the test set. Doing these computations in another colour space in addition to RGB, such as HSV, would also be wise. This would be an avenue worth exploring if you have the time.

Additionally, how about annotating a few samples of the test set? Like you say, it is an arduous job, but as few as 5-10 labelled examples might be sufficient. Few-shot segmentation is an active area of research, and there are numerous techniques you could attempt if you have a handful of ground truths.

Finally, assuming your unlabelled test set is large, self-supervised learning might be valuable. Specifically, you could train the backbone of your neural net using a self-supervised learning recipe like SimSiam or SwAV and freeze it during training. The advantage of this is that the encoder of your model would be robust to variations in input colour owing to its training procedure.

Please do not hesitate to reach out if you had other questions.

Apart from the great answer given above by BobMcDear,

How about running the model in inference mode? Assume that the first model you trained is now deployed in production and you want to use it to segment images it hasn’t seen. And then run it against the second dataset you want. I assume once you use augmentations (like the ones suggested above) it should be able to generalize to the second dataset.

It all depends on your end goal though, If you want to retrain the model with the new dataset I would go with the answer given above, if you just want masks for the 2nd dataset, you can run your model against them and generate the images and see if your model has generalized enough to be used for the same tasks in production.

@JelleVE whilst you did not say it explicitly your goal is building change detection? If so you can take a change detection approach using the pairs of registered images. As mentioned in the above comments, the use of augmentation will be crucial, but also just as important will be careful pre-processing of image pairs for registration and resolution if using a change detection approach. Since you already have the modern images annotated, creating additional annotations on the historical images should be relatively quick. For further references please see GitHub - robmarkcole/satellite-image-deep-learning: Resources for deep learning with satellite & aerial imagery

@jimmiemunyi that was the first approach that I had tried, but unfortunately with disappoint results. It might be that the augmentations that I was using were not very suitable (rotation, translation, flipping, regular dropout, channel dropout, blurring, grayscaling, color jitter, …) or not very well initialized argument-wise, so I’m not giving up on this yet.

@BobMcDear in addition to the augmentations, I tried transfer learning with a couple of 100 newly annotated samples (from the target dataset), but these results seemed subpar as well. I’ll be looking into your other suggestions as well. I’m not sure whether I really got the last suggestion, but it got me thinking: since I have aerial imagery of the same region for different points in time, do you think it would be interesting to create a Siamese network that is tasked with checking whether two images (from different datasets) are “identical” (not pixel-wise, but semantically) or not? This I would then use as the encoder for the unet. (Sorry if this does not make much sense, I’m somewhat out of my depth here.)

@robmarkcole yes, that pretty much is the goal indeed ![]() Thank you for the idea! I’ll be sure to check those references.

Thank you for the idea! I’ll be sure to check those references.

Creating additional annotations is indeed relatively quick. I have managed to annotate a fair number of images for one of the historical datasets, and for now, this gives the best results by far. I will (reluctantly) continue to do this for the other historical datasets as well, but I’ll be sure to try your suggestions and report back my findings!

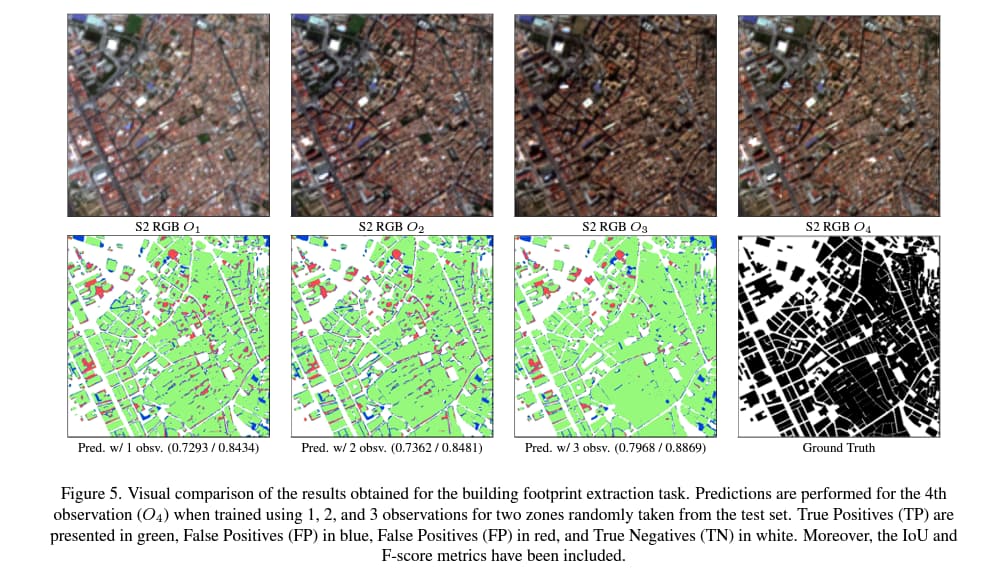

Photometric data augmentation techniques such as random brightness, contrast, saturation, and hue have been traditionally applied aiming at improving the generalization against color spectrum variations, but they can have a negative effect on the model due to their synthetic nature. However, if you have aerial imagery of the same region for different points in time you can realistically augment the dataset, keeping the same ground truth but randomly taking a different time step. This approach as we have demonstrated in “Multi-temporal Data Augmentation for High Frequency Satellite Imagery: a Case Study in Sentinel-1 and Sentinel-2 Building and Road Segmentation” (Link) makes the model robust against color spectrum variations.

Hello,

How are you performing training on the newly-annotated samples? For example, do you initially train the neural net on your larger dataset and then run it for some more epochs exclusively on your 100 samples? Also, is the entire network being updated or merely its decoder? It might be lucrative to test various configurations like mixing your 100 samples with the other dataset, freezing the encoder and training solely the decoder, etc.

Most networks can be divided into a couple of segments - a task-agnostic encoder that extracts visual features like rings or edges, and a decoder that receives information from the encoder to make predictions. In image classification, for instance, there is a linear layer (the decoder) that takes in the output of the network’s “body” (the encoder) for classifying the input. For segmentation, assuming your model is a UNet, the encoder is typically any CNN with a certain set of weights (e.g., ResNet-50 pre-trained on ImageNet ), and the decoder is a lightweight mirrored version of the encoder with a similar topology (I realize you are already aware of this; apologies for the superfluous description - I included it for potential future readers.).

My recommendation is to train your encoder with a self-supervised strategy on every sample in your dataset (old and new), regardless of whether it is accompanied by annotations, and freeze it during training (i.e., do not modify its parameters). That is, rather than training the entirety of your UNet (encoder plus decoder) or using a frozen encoder with, e.g., ImageNet-trained parameters, train your encoder in a self-supervised manner on the aerial images, but do not adjust its weights for the remainder of your application. Is that more clear?

That is a very clever idea. This would be identical to the suggestion above with one exception. Regularly, in non-contrastive self-supervised approaches, the pretext task is to ensure the model recognizes two different augmented views of the same data point as being semantically equivalent. With your proposed method, in lieu of augmenting photos for generating two views that must be predicted as corresponding to one another, an alternative is used, viz., the two views are the same sample from different points in time. In a sense, this can be seen as a more powerful form of augmentation compared to popular geometric and appearance transforms - it is temporal augmentation.

Is this helpful?

A very timely paper - https://www.mdpi.com/2072-4292/14/17/4254/htm

Highlighted ideas are generating pseudo labels, and annotating additional classes e.g. roads which can be extracted from openstreetmap