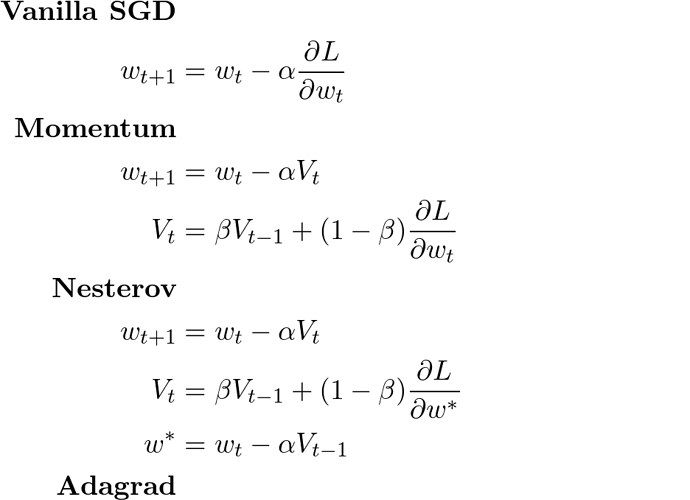

FYI, I’ve just found what appears to be a pretty good survey of gradient descent optimizers. It’s written in fairly plain language and does a decent job of discussing their differences and suitability for various applications. I have not finished reading it, but I was thrilled to find it and thought I’d share it ASAP.

I also liked that page! Note that it is linked from the wiki’s SGD page already: http://wiki.fast.ai/index.php/Gradient_Descent#References

Excellent, glad it’s on the radar. I had the presence of mind to search the forum for mention of it, but didn’t think to search the wiki. Oh well, best-laid plans… Cheers.

Cheers.