I’ve greatly enjoyed making projects using CNNs, RNNs and GANs. However, there’s a simpler-sounding problem I’m not sure how to approach using deep learning, machine learning or data science.

Let’s say we have data from the Magic Cake factory: 10,000 rows covering their cake ingredients and how each batch of cake turned out over the last year. They’re using flour, coconut, maple syrup and their own secret magic mix, which reduces the number of calories in the cake so everyone can indulge to their hearts’ content.

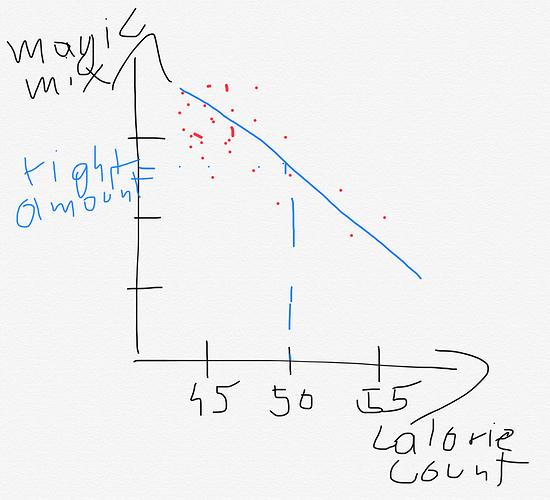

The magic mix is expensive, so ideally they want to use only as much as is needed to bring the calories down to the goal of about 50 calories per cake (we all need some calories, after all). The data we have shows that sometimes they add too much magic mix, resulting in 45 calories per cake, and sometimes they add too little and end up with 55-60 calories per cake. Other times, they get it just right and end up with 49-51 calories per cake.

Now, with this data, how would we build a model that predicts the best amount of magic mix to use? The obvious approach to me was to use a linear regression, and this is fine up to a point. We are, after all, fitting a line based on what the humans at the cake factory have done, so the model will reproduce their biases. It will not necessarily get us to the point where we can enter the ingredients and cake size and have it tell us the best amount of magic mix to use. Logistic regression seems a good way forward for seeing the importance of the other variables (perhaps by creating classes based on whether the calorie count was accurate (1) or not (0)), but it may not come up with the best suggestions for amounts of magic mix.
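To make the linear regression idea concrete, here is a minimal sketch of one way it could be set up: fit calories as a linear function of the ingredients and the magic mix, then invert the fitted line to get the amount of magic mix that predicts 50 calories. Everything here is an assumption for illustration: the data is synthetic, the coefficients are made up, and `fit_calorie_model` and `magic_mix_for_target` are hypothetical helper names, not anything from the factory's real process.

```python
import numpy as np

def fit_calorie_model(ingredients, magic_mix, calories):
    """Least-squares fit of calories ~ intercept + ingredients + magic_mix."""
    X = np.column_stack([np.ones(len(calories)), ingredients, magic_mix])
    coef, *_ = np.linalg.lstsq(X, calories, rcond=None)
    return coef  # [intercept, w_flour, w_coconut, w_syrup, w_magic_mix]

def magic_mix_for_target(coef, ingredients, target=50.0):
    """Invert the fitted line: magic mix amount that predicts `target` calories."""
    intercept, w_ing, w_mix = coef[0], coef[1:-1], coef[-1]
    return (target - intercept - np.asarray(ingredients) @ w_ing) / w_mix

# Synthetic stand-in for the 10,000 factory rows (all numbers are made up).
rng = np.random.default_rng(0)
n = 10_000
ingredients = rng.uniform([200, 20, 10], [300, 60, 40], size=(n, 3))  # flour, coconut, syrup
magic_mix = rng.uniform(5, 15, size=n)
calories = (
    20
    + 0.1 * ingredients[:, 0]
    + 0.2 * ingredients[:, 1]
    + 0.3 * ingredients[:, 2]
    - 1.5 * magic_mix
    + rng.normal(0, 0.5, size=n)  # measurement noise
)

coef = fit_calorie_model(ingredients, magic_mix, calories)

# Suggested magic mix for a new, hypothetical batch.
new_batch = np.array([250.0, 40.0, 25.0])
suggested = magic_mix_for_target(coef, new_batch)
print(f"suggested magic mix: {suggested:.2f}")
```

This only works as well as the linear assumption holds, and because the historical amounts were chosen by people rather than at random, the fitted effect of the magic mix may still carry their biases.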

I would love any suggestions.