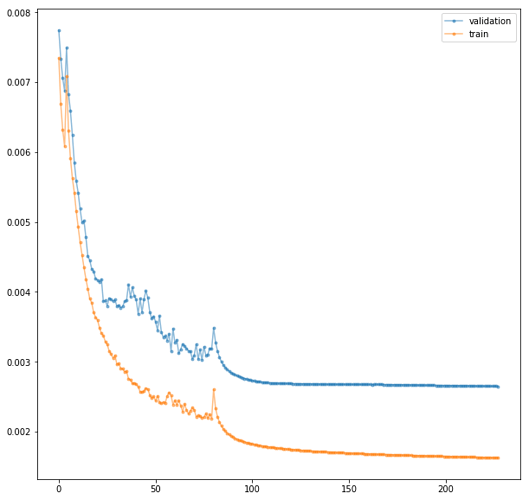

Here is a graph of the loss plotted against the number of epochs. I decreased the learning rate at epochs 45 and 80, by a factor of ten each time. After the second decrease there is a rapid improvement, and then the loss flattens completely. I find it odd that it flattens so abruptly. Should I have waited longer before decreasing the learning rate? What should I be looking for before I decrease it?
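For concreteness, the stepwise schedule described above can be sketched roughly as follows. The base learning rate of 0.1 is only a placeholder, since the post does not state it, and `step_lr` is a hypothetical helper, not a library function. It also assumes each drop takes effect starting at the named epoch.

```python
def step_lr(epoch, base_lr=0.1, milestones=(45, 80), gamma=0.1):
    """Learning rate at a given epoch under stepwise decay.

    base_lr is an assumed placeholder value; milestones and gamma follow
    the description in the post (divide by ten at epochs 45 and 80).
    """
    # Count how many milestones have been reached so far.
    drops = sum(1 for m in milestones if epoch >= m)
    # Apply one factor of gamma per milestone reached.
    return base_lr * gamma ** drops


if __name__ == "__main__":
    for epoch in (0, 44, 45, 79, 80, 100):
        print(epoch, step_lr(epoch))
```

After the second drop the learning rate is 100 times smaller than at the start, so each update moves the weights far less than before, which is one plausible reason the curve looks flat from that point on.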

Hey @Tank,

I recommend taking a look at https://sgugger.github.io/how-do-you-find-a-good-learning-rate.html and https://sgugger.github.io/the-1cycle-policy.html.

Sylvain has done a great job of explaining how to use the learning rate finder to find a good learning rate, and how to apply it to the 1-cycle policy, which significantly outperforms the stepwise learning rate annealing approach you’re using.
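To give a rough idea of the shape of a 1-cycle schedule, here is a minimal, simplified sketch in plain Python. It is not Sylvain's or fastai's implementation: `one_cycle_lr` is a hypothetical helper, and the values for `max_lr`, `div`, `final_div`, and `pct_ramp` are assumptions chosen for illustration. In practice `max_lr` would come from the learning rate finder. The sketch ramps the learning rate up linearly, ramps it back down, and then anneals it to a much smaller value over the last few steps.

```python
def one_cycle_lr(step, total_steps, max_lr=0.01, div=10.0,
                 final_div=100.0, pct_ramp=0.45):
    """Simplified 1-cycle learning rate for a given training step.

    All default values are illustrative assumptions, not recommendations.
    """
    low_lr = max_lr / div          # learning rate at the start of the cycle
    final_lr = low_lr / final_div  # learning rate at the very end of training
    ramp_steps = max(1, int(total_steps * pct_ramp))

    if step < ramp_steps:
        # Phase 1: linear increase from low_lr to max_lr.
        frac = step / ramp_steps
        return low_lr + frac * (max_lr - low_lr)
    if step < 2 * ramp_steps:
        # Phase 2: linear decrease from max_lr back to low_lr.
        frac = (step - ramp_steps) / ramp_steps
        return max_lr - frac * (max_lr - low_lr)
    # Phase 3: anneal from low_lr down to final_lr over the remaining steps.
    tail_steps = total_steps - 2 * ramp_steps
    frac = (step - 2 * ramp_steps) / max(tail_steps - 1, 1)
    return low_lr - frac * (low_lr - final_lr)


if __name__ == "__main__":
    total = 100
    for step in (0, 20, 45, 70, 90, 99):
        print(step, one_cycle_lr(step, total))
```

The posts linked above also vary momentum in the opposite direction to the learning rate, which this sketch leaves out. If you are on PyTorch, `torch.optim.lr_scheduler.OneCycleLR` provides a ready-made scheduler of this kind, and fastai exposes it through `fit_one_cycle`.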
