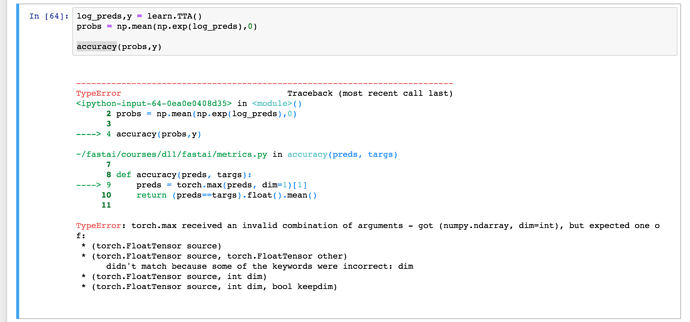

I am getting the above error after 85% completion.

I am using a dataset other than dogscat, one with six classes. I was able to train and save the weights. The other analysis functions run fine; only the accuracy function fails. Any pointers would be helpful.

Hi, @aloksaan

First, I’m curious about why np.mean is applied to np.exp(log_preds).

This problem occurs because PyTorch operations cannot handle NumPy arrays directly; torch.from_numpy exists to convert them.

I think the problem is that probs is computed as np.mean(np.exp(log_preds), 0) in cell In [64] (do we really need np.mean?).

To make the cell work, use torch functions instead of numpy ones like this:

    log_preds, y = learn.TTA()
    probs = torch.mean(torch.exp(log_preds), 0)
    accuracy(probs, y)

I think the accuracy function will work with log_preds and y because exp does not change the order of values in the array.
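To illustrate the point about exp not changing the order, here is a small NumPy-only sketch. The array below is synthetic (8 samples, 6 classes) and only stands in for one slice of real log-probabilities; it is not output from learn.TTA().

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic log-probabilities for 8 samples and 6 classes (illustrative only).
logits = rng.normal(size=(8, 6))
log_preds = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))

# exp is strictly increasing, so the largest log-probability in each row
# is also the largest probability in that row.
probs = np.exp(log_preds)
same_argmax = np.array_equal(
    np.argmax(log_preds, axis=1),
    np.argmax(probs, axis=1),
)
print(same_argmax)
```

This only covers a single 2-D array of predictions. If the predictions carry an extra leading axis (which the mean over axis 0 suggests), that axis still has to be reduced before taking the argmax over classes.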

Thanks @crcrpar,

I have not modified the original code; I was only running the original notebook with a new dataset. By the way, I tried torch.mean, and now it throws:

    TypeError Traceback (most recent call last)
    in ()
          1 log_preds,y = learn.TTA()
          2 #probs = np.mean(np.exp(log_preds),0)
    ----> 3 probs = torch.mean(torch.exp(log_preds), 0)
          4 accuracy(probs,y)

    TypeError: torch.exp received an invalid combination of arguments - got (numpy.ndarray), but expected (torch.FloatTensor source)

Sorry to bother you.

Then preds needs to be converted to a torch tensor before it is passed to the accuracy function, because log_preds is a numpy.ndarray, which torch.exp cannot handle.

How about this?

    log_preds, y = learn.TTA()
    preds = np.mean(np.exp(log_preds), 0)
    preds = torch.from_numpy(preds)
    accuracy(preds, y)
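A self-contained sketch of that conversion, in case it helps. Everything here is an assumption for illustration: the arrays are synthetic stand-ins for what learn.TTA() returns (assumed shape: augmentations x samples x classes), and I am assuming y is also a NumPy array, so it is converted as well. The accuracy function is a torch-based metric written out locally so the snippet runs on its own.

```python
import numpy as np
import torch


def accuracy(preds, targs):
    # Torch-based metric: index of the max score per row, compared to targets.
    preds = torch.max(preds, dim=1)[1]
    return (preds == targs).float().mean()


rng = np.random.default_rng(0)

# Synthetic stand-in for TTA output: 5 augmentations, 10 samples, 6 classes.
logits = rng.normal(size=(5, 10, 6)).astype(np.float32)
log_preds = logits - np.log(np.exp(logits).sum(axis=2, keepdims=True))
y = rng.integers(0, 6, size=10)  # int64 class labels

# Average the probabilities over the augmentation axis in NumPy,
# then convert both arrays to tensors before calling the torch metric.
probs = np.mean(np.exp(log_preds), axis=0)
probs_t = torch.from_numpy(probs)
y_t = torch.from_numpy(y)

acc = accuracy(probs_t, y_t)
print(acc.item())
```

torch.from_numpy shares memory with the source array rather than copying it, so modifying probs afterwards would also change probs_t.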

Thank you. I really appreciate your quick response. It turns out we are supposed to use accuracy_np, not accuracy. The underlying metric.py was updated, but the notebook never was. Here is the relevant code:

    def accuracy_np(preds, targs):
        preds = np.argmax(preds, 1)
        return (preds==targs).mean()

    def accuracy(preds, targs):
        preds = torch.max(preds, dim=1)[1]
        return (preds==targs).float().mean()
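For anyone else hitting this, here is a minimal sketch of the NumPy-only path with accuracy_np. The numbers are made up (two augmentations, four samples, three classes) and only stand in for what learn.TTA() would return; accuracy_np is copied from the snippet above so the example runs by itself.

```python
import numpy as np


def accuracy_np(preds, targs):
    preds = np.argmax(preds, 1)
    return (preds == targs).mean()


# Made-up class probabilities from two augmentations of the same 4 samples.
aug0 = np.array([[0.7, 0.2, 0.1],
                 [0.2, 0.5, 0.3],
                 [0.1, 0.3, 0.6],
                 [0.5, 0.3, 0.2]])
aug1 = np.array([[0.6, 0.3, 0.1],
                 [0.3, 0.4, 0.3],
                 [0.2, 0.2, 0.6],
                 [0.2, 0.6, 0.2]])

# Stack into (augmentations, samples, classes) and take logs to mimic log_preds.
log_preds = np.log(np.stack([aug0, aug1]))
y = np.array([0, 1, 2, 0])

# Same recipe as the notebook cell, but everything stays in NumPy.
probs = np.mean(np.exp(log_preds), 0)
acc = accuracy_np(probs, y)
print(acc)  # 0.75: the first three samples are right, the last one is not
```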

Glad to hear that and appreciate your sharing the snippet!