Hah, I’m a bit confused. I’d love to just pop the xs and ys into the loss func, but I think my sizes are off.

To be precise, next(iter(dls)) returns a TfmdDL object and not a batch. Even after I grab a batch, I can’t plug it into the CombinationLoss() straight away.

Here’s the code I’m running:

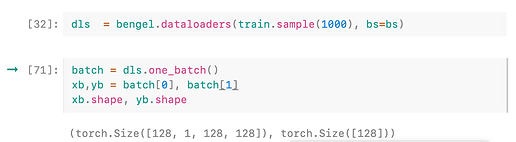

dls = bengel.dataloaders(train.sample(1000), bs=bs)

batch = dls.one_batch()

xb = batch[0]

yb = batch[1]

# xb.shape ==> torch.Size([128, 1, 128, 128])

# yb.shape ==> torch.Size([128]

I expected yb to be a list of 3 elements with size=128 each, or a stack of 3 tensors of size 128. Looking at the contents of yb, it appears to me that these are only the ground truths for grapheme and not vowel or constant.

If I try running this through CombinationLoss() as such:

loss_func = CombinationLoss()

loss_func(xb,yb)

I run into a ValueError: Expected input batch_size (1) to match target batch_size (128).

To take a step back, am I understanding CombinationLoss()'s forward correct? I’ve jotted my thoughs in the comments

def forward(self, xs, *ys, reduction='mean'):

# xb.shape ==> (batch_size,1,128,128)

# what shape/type should `ys` be?

# list of 3 tensors of size (batch_size, truths)?

for i, w, x, y in zip(range(len(xs)), self.w, xs, ys):

# this loop will run three times, calculate the loss for

# grapheme,vowel,consonant and sum them up

if i == 0: loss = w*self.func(x, y, reduction=reduction)

else: loss += w*self.func(x, y, reduction=reduction)

return loss

(pass said vocab to

(pass said vocab to

(but you may already know this one)

(but you may already know this one)