@foobar8675 out of curiosity, try using the dev versions of fastcore and fastai2 and see if you still get the same thing for me please

gladly - but is there a webpage you can point me to to get the dev versions in a notebook?

The FAQ has this.

https://forums.fast.ai/t/fastai-v2-faq-and-links-read-this-before-posting-please/53517/4

Well, I tried to at least. There’s a bit of madness on campus so I wasn’t able to get as far as I wanted. I’ll keep everyone updated if I decide to do one in the middle of the week (as everything is closed down), but for the time being presume the regular schedule of next week.

So will the next lecture be on the coming Saturday, Pacific time?

Yes unless I state otherwise

I was not able to reproduce it. Are you doing this on the server or locally?

oh ic. you are right. my last example had predict expecting a tuple of length 3, where this expected a tuple of length 2. thank you @muellerzr!

Pass in decode=True and your last one will be the class indices (and you’ll get your 3)

@muellerzr So I know I asked this before but am still stumped. do you know of any tabular examples that only have continuous inputs (no categorical ones) with a y_block of CategoryBlock?

i’ve been trying to tweak 01 - Adults.ipynb but haven’t been able to get it working.

Can you describe the situation? I don’t think you should be using a CategoryBlock there

Sure, and I’m just trying to get this working on 01_Adults so I can apply it to the kaggle ion switching competition, which has 2 continuous fields and labels which are categories, 0-7.

I took 01_Adults, and am pretending the cat_names do not exist, only the cont_names. I have block_y set to CategoryBlock as it is currently, and am assuming it to be a binary classification. I’ve tried with procs set to just Normalize, and to Normalize plus FillMissing, and get errors both ways.

I might be just misunderstanding, is the CategoryBlock not the right approach?

No, it is. It’s binary (we could simply replace them with ‘yes’ and ‘no’ if we wanted to), and this is the exact situation where you’d want to explicitly state CategoryBlock(). If you’re using FillMissing you should still be using Categorify, as FillMissing will generate categorical columns
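To make the last point concrete, here is a minimal plain-Python sketch of why filling missing values produces categorical columns even when every input is continuous. It is an illustration of the idea only, not fastai’s implementation: the `fill_missing` helper and the `signal`/`time` column names are hypothetical, and the `<col>_na` naming is an assumption made for the example.

```python
from statistics import median

def fill_missing(rows, cont_names):
    """Fill None in each continuous column with that column's median.

    Returns (new_rows, added_cat_names). For every column that had a missing
    value, a boolean '<col>_na' indicator column is added. Those indicator
    columns are categorical, so a categorical proc is still needed.
    """
    new_rows = [dict(r) for r in rows]  # copy so the input is left untouched
    added = []
    for col in cont_names:
        present = [r[col] for r in rows if r[col] is not None]
        if len(present) == len(rows):
            continue  # nothing missing in this column, no indicator needed
        fill = median(present)
        for r in new_rows:
            r[f"{col}_na"] = r[col] is None  # record where the gap was
            if r[col] is None:
                r[col] = fill
        added.append(f"{col}_na")
    return new_rows, added

# Hypothetical data: two continuous columns, one missing value.
rows = [
    {"signal": 0.5, "time": 1.0},
    {"signal": None, "time": 2.0},
    {"signal": 1.5, "time": 3.0},
]
filled, new_cats = fill_missing(rows, ["signal", "time"])
print(new_cats)
```

So a dataset that starts with no categorical names can end up with some after the fill step, which is why the categorical proc still belongs in the list.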

Hi everyone,

I’m using this dataset:

Novel Corona Virus 2019 Dataset

Day level information on covid-19 affected cases

and I’m having trouble understanding the data.

It has 3 separate time series for ‘recovered’, ‘infected’ and ‘dead’, plus overall summary data.

How do I combine these time series data to predict the fields in the summary data for the next month or so?

Please help me out.

Thanks,

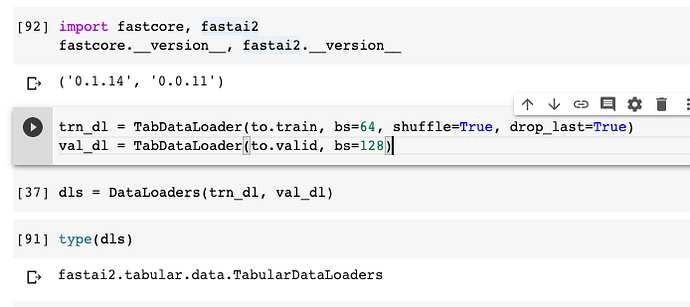

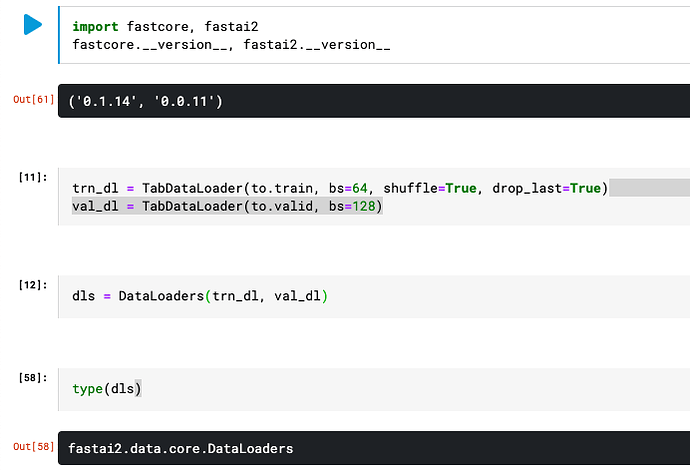

i see part of my problem. the behavior is different between kaggle and colab. i noticed this

colab:

kaggle:

i noticed this at first when digging into the inference code

dl = learn.dls.test_dl(df.iloc[:100])

the dls is a different type on colab and kaggle. sigh

That’s quite odd! I’d check how the other dependencies line up (torch, torchvision, pandas, etc.)
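One way to do that comparison is to run the same cell on both platforms and compare the output. This is a rough sketch using only the standard library (Python 3.8+); the package list comes from this thread and the helper names are made up for illustration.

```python
from importlib import metadata

# Package names taken from the thread; adjust to whatever your notebook imports.
PACKAGES = ["fastai2", "fastcore", "torch", "torchvision", "pandas"]

def installed_versions(packages):
    """Return {package: version string, or None if it is not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions

def diff_versions(a, b):
    """Return {package: (version_a, version_b)} for packages whose versions differ."""
    return {
        name: (a.get(name), b.get(name))
        for name in sorted(set(a) | set(b))
        if a.get(name) != b.get(name)
    }

if __name__ == "__main__":
    for name, version in installed_versions(PACKAGES).items():
        print(f"{name}: {version or 'not installed'}")
```

Copy the dict printed on Colab into the Kaggle notebook (or the other way round) and pass both to `diff_versions` to see only the packages that disagree.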

All, the plan is that on Thursday I will do lesson 2 (yes, with lesson 3 then on Saturday) at the usual time. My apologies for being so off-kilter with the schedule; coronavirus etc. has been difficult to work with for the past week

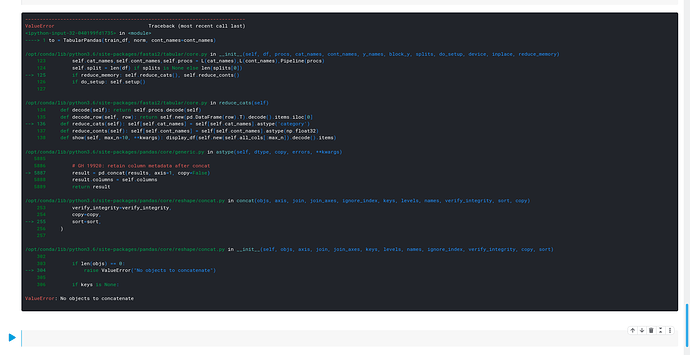

@muellerzr I was trying to apply the Adults.ipynb example to the Kaggle Categorical Feature Encoding Challenge II. On normalising the continuous variables, I get the following error.

I am not sure if the columns I have specified match the criteria for a continuous variable, for things like day and month. Am I wrong in specifying them as categories?

It’s interesting to see that we are going to explore the Rossmann dataset in these lessons.

I had a question for you: I was planning to publish a few notebooks on Kaggle with you, building on top of some of the notebooks like your Adult tabular notebook and image classification lesson 1. How should I cite you in the notebooks?

That’s a bug, I’ll get it fixed today. In the meantime do reduce_memory=False