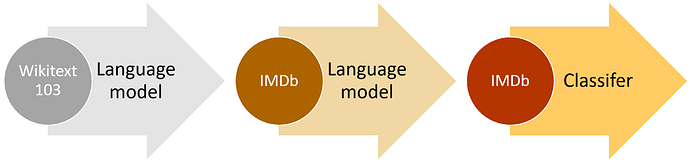

I’ve been looking at this tutorial and at other applications of BERT, such as document summarisation and the latest Jigsaw challenge for toxic comment classification. One thing I notice in all of these applications is that the BERT language model is not fine-tuned on the target corpus. If we follow the process outlined in the figure above, people are essentially jumping from Step 1 (getting the pre-trained language model) to Step 3 (fine-tuning the classification model) and skipping Step 2 (fine-tuning the language model on the target corpus).

Judging by the ablation studies in the original ULMFiT paper (Tables 6 and 7), fine-tuning the language model on the target corpus before fine-tuning the classification model seems to reduce the error rate by around 1%-2%. Yet looking through the code in the pytorch-pretrained-bert package, I can't find any process for fine-tuning the language model. The expectation seems to be that you use the pre-trained model as is.
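For BERT, one plausible version of Step 2 is to continue training with BERT's masked-language-model objective on the target corpus before attaching the classifier. The core of that objective is how the training inputs are corrupted. The BERT paper selects 15% of token positions for prediction, then replaces 80% of those with `[MASK]`, 10% with a random token, and leaves 10% unchanged. Below is a minimal, library-free sketch of that masking step. The function name `mask_tokens`, the toy vocabulary, and the simplification of selecting each token independently with probability 0.15 are my own assumptions, not code from pytorch-pretrained-bert.

```python
import random
from typing import List, Optional, Tuple

MASK_TOKEN = "[MASK]"
# Assumption: only [CLS] and [SEP] are treated as special (never masked).
SPECIAL_TOKENS = {"[CLS]", "[SEP]"}


def mask_tokens(
    tokens: List[str],
    vocab: List[str],
    mask_prob: float = 0.15,
    rng: Optional[random.Random] = None,
) -> Tuple[List[str], List[Optional[str]]]:
    """Hypothetical helper: apply BERT-style masking to one tokenised sentence.

    Returns the corrupted input tokens and, for each position, the original
    token the model should predict (None where no prediction is made).
    """
    rng = rng or random.Random()
    inputs = list(tokens)
    labels: List[Optional[str]] = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        # Simplification: each non-special token is selected independently.
        if tok in SPECIAL_TOKENS or rng.random() >= mask_prob:
            continue  # position not selected for prediction
        labels[i] = tok  # the model is trained to recover the original token
        roll = rng.random()
        if roll < 0.8:
            inputs[i] = MASK_TOKEN  # 80%: replace with [MASK]
        elif roll < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return inputs, labels


tokens = ["[CLS]", "the", "comment", "was", "toxic", "[SEP]"]
vocab = ["the", "comment", "was", "toxic", "polite"]
inputs, labels = mask_tokens(tokens, vocab, rng=random.Random(0))
```

In a full pipeline, the corrupted `inputs` would be converted to token ids and fed to a BERT masked-LM head, with the loss computed only at positions where `labels` is not `None`.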