This is an interesting tutorial that I thought should be showcased over here. It integrates the huggingface library with the fastai library to fine-tune the BERT model, with an application on an old Kaggle competition.

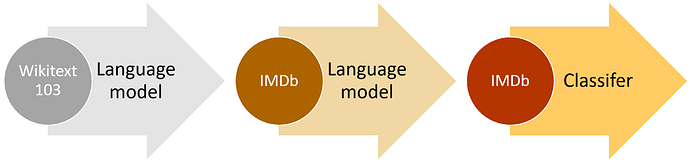

I’ve been looking at this tutorial and other applications of BERT in document summarisation and the latest Jigsaw challenge for toxic comment classification. One important point that I see in all of these applications is that the BERT language model is not being fine-tuned on the corpus under test. So if we follow the process outlined by the figure below, people are essentially jumping from Step 1 (getting the pre-trained language model) to Step 3 (fine-tuning the classification model).

If I look at the ablation studies in the original ULMFiT paper (Tables 6 and 7), it seems that there is 1%-2% to be gained in error performance by fine-tuning the language model on the corpus before fine-tuning the classification model. In looking through the code available in the pytorch-pretrained-bert package, it does not seem to include any process for fine-tuning the language model. There seems to be an expectation that you will take the model as is.

The BERT paper mentions using the model for transfer learning and training only the last layer, as done in the blog above. This is the most popular method; details are in the post below.


BertForSequenceClassification: BERT Transformer with a sequence classification head on top (the BERT Transformer is pre-trained; the sequence classification head is only initialized and has to be trained).
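To make that description concrete, here is a minimal sketch of the "train only the head" setup. It assumes PyTorch and the `transformers` package (the successor to pytorch-pretrained-bert) are installed. The tiny, randomly initialised config and the random batch are assumptions made purely so the snippet runs without a download; in practice you would load real weights with something like `BertForSequenceClassification.from_pretrained("bert-base-uncased", num_labels=2)`.

```python
import torch
from transformers import BertConfig, BertForSequenceClassification

# Assumption: a tiny random config stands in for real pre-trained weights,
# so nothing has to be downloaded to run this sketch.
config = BertConfig(
    vocab_size=100,
    hidden_size=32,
    num_hidden_layers=2,
    num_attention_heads=2,
    intermediate_size=64,
    num_labels=2,
)
model = BertForSequenceClassification(config)

# Freeze the BERT encoder so that only the classification head is updated.
for param in model.bert.parameters():
    param.requires_grad = False

trainable = [name for name, p in model.named_parameters() if p.requires_grad]
print(trainable)

optimizer = torch.optim.AdamW(
    [p for p in model.parameters() if p.requires_grad], lr=1e-3
)

# One training step on a random batch (hypothetical data, for illustration only).
input_ids = torch.randint(0, config.vocab_size, (4, 16))
labels = torch.tensor([0, 1, 0, 1])
outputs = model(input_ids=input_ids, labels=labels)
outputs.loss.backward()
optimizer.step()
print(outputs.logits.shape)
```

Dropping the freezing loop gives the other common setup, where the whole network is updated during classification fine-tuning.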

You can also experiment with training the LM on your corpus, although I think it needs a lot of GPU and training time. Details are in the link below:

https://github.com/huggingface/pytorch-pretrained-BERT/tree/master/examples/lm_finetuning

Thanks for your response Sanjeeth. Yes, I am aware of the recommendation to use BERT as is. You could do the same thing with ULMFiT, using the WikiText-103 model directly on your classification problem. My point was that the studies done in the ULMFiT paper by Jeremy and Sebastian Ruder show fairly significant gains from fine-tuning the language model on the corpus under test. I haven’t seen similar things done with BERT, so I am wondering if there are further performance gains to be made. It does take a lot of resources to do the fine-tuning, but is it worth it?

I wasn’t aware of the fine-tuning scripts in the HuggingFace repository, I will check that out.

Let us know if fine-tuning helps.

@nsecord, you are absolutely right in pointing out the lack of fine-tuning on the corpus under consideration. I’m not sure about the rest, but fast.ai allows you to fine-tune your language model on the target corpus before defining the classifier learner. You’ll find a very neat example under the text category of their tutorial section here.

Hope this helps. Cheers!

Hello @nsecord,

In this paper, more particularly in section 5.4, they confirm that further training the model with the unsupervised masked language model and next sentence prediction tasks on the corpus under test (5.4.1 Within-Task Further Pre-Training) gives better performance. It corresponds to the BERT-ITPT-FiT models in the tables.
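For anyone wondering what the masked language model part of that further pre-training looks like on your own corpus, here is a rough, pure-Python sketch of the corruption rule from the BERT paper: about 15% of tokens are selected, and of those 80% become `[MASK]`, 10% become a random token and 10% are left unchanged. The function name `mask_tokens`, the toy sentence and the toy vocabulary are my own assumptions for illustration. It also simplifies things by selecting each token independently and ignoring special tokens and WordPiece splitting.

```python
import random

MASK_TOKEN = "[MASK]"


def mask_tokens(tokens, vocab, rng, mask_prob=0.15):
    """Corrupt tokens for masked-LM training (hypothetical helper).

    Returns (masked_tokens, labels). labels[i] holds the original token at
    positions the model must predict, and None everywhere else.
    """
    masked = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        # Simplification: each position is selected independently.
        if rng.random() >= mask_prob:
            continue
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            masked[i] = MASK_TOKEN          # 80%: replace with [MASK]
        elif roll < 0.9:
            masked[i] = rng.choice(vocab)   # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return masked, labels


rng = random.Random(0)
sentence = "the movie was surprisingly good and the ending was great".split()
vocab = sorted(set(sentence))  # toy vocabulary, for illustration only
masked, labels = mask_tokens(sentence, vocab, rng)
print(masked)
print(labels)
```

The loss is then computed only at positions where the label is not `None`, which is what lets the model adapt to the target corpus without any class labels.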

The original blog post seems to have disappeared from the web.

The last snapshot from the wayback machine is: A Tutorial to Fine-Tuning BERT with Fast AI | Machine Learning Explained

The referred notebook is: Practical_NLP_in_PyTorch/bert_with_fastai.ipynb at master · keitakurita/Practical_NLP_in_PyTorch · GitHub