I'm reviewing SGD and I cannot understand…

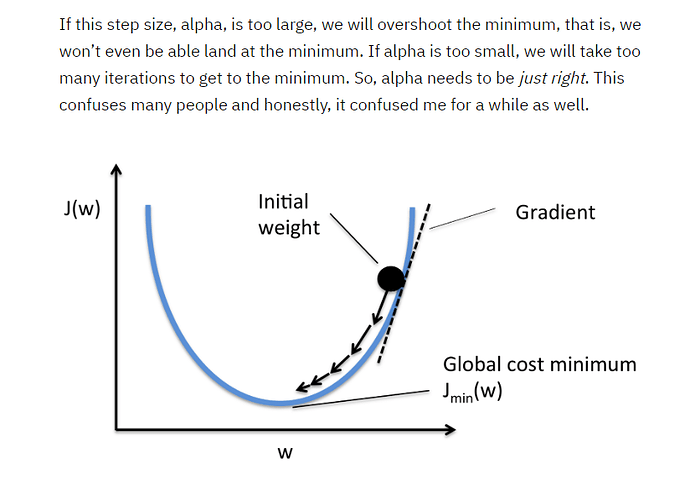

This subtracts (learning rate * gradient) from the coefficients…

He says: "If I move the whole thing upwards, the loss goes down. So I want to do the opposite of the thing that makes it go up. We want our loss to be small. That’s why we subtract."

Even so, what did he mean by moving everything upwards and having less loss?

    def update():
        y_hat = x@a
        loss = mse(y_hat, y)
        if t % 10 == 0: print(loss)
        loss.backward()
        with torch.no_grad():
            a.sub_(lr * a.grad)
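
To make the question concrete, here is how I currently read the quote: the gradient says which way the loss moves when a coefficient is pushed up, so subtracting it pushes the coefficient the way that makes the loss smaller. The sketch below is not the lesson's code. It is plain Python with a single made-up coefficient `a`, toy data, a hand-written derivative standing in for `a.grad`, and a hypothetical `lr` of 0.1, all of which are my own assumptions.

```python
# Toy data generated by y = 3 * x, so the best coefficient is a = 3.
# (Assumed data for illustration only.)
xs = [1.0, 2.0, 3.0]
ys = [3.0, 6.0, 9.0]


def mse(a):
    """Mean squared error of the prediction a * x against y."""
    return sum((a * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def grad(a):
    """Derivative of mse with respect to a: mean of 2 * x * (a * x - y)."""
    return sum(2 * x * (a * x - y) for x, y in zip(xs, ys)) / len(xs)


lr = 0.1  # hypothetical learning rate, chosen small enough for this toy problem


def step(a):
    """One update, mirroring a.sub_(lr * a.grad) for a single number."""
    return a - lr * grad(a)


# Case 1: a starts too low. The gradient is negative, meaning "moving a
# upwards makes the loss go down", and subtracting a negative moves a up.
a_low = 1.0
print("gradient:", grad(a_low), "loss before:", mse(a_low), "loss after:", mse(step(a_low)))

# Case 2: a starts too high. The gradient is positive, meaning "moving a
# upwards makes the loss go up", and subtracting it moves a down.
a_high = 5.0
print("gradient:", grad(a_high), "loss before:", mse(a_high), "loss after:", mse(step(a_high)))
```

In both cases the same subtraction lowers the loss, because the sign of the gradient already encodes the direction. If that reading is right, "moving everything upwards" would correspond to the first case, where the gradient is negative.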