Hello,

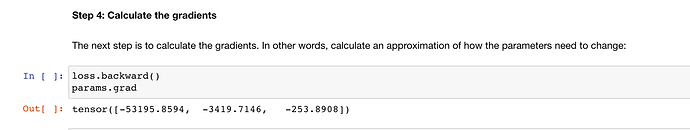

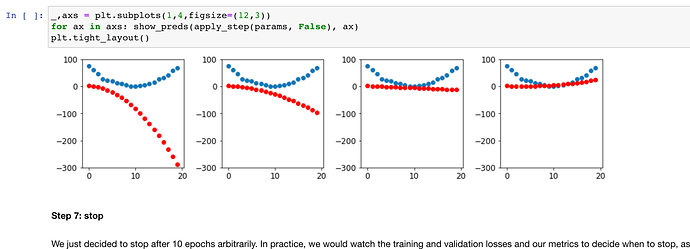

I’m going through the fastai book. I’ve set up a VM on Azure by following the official instructions ( https://course.fast.ai/start_azure_dsvm ) and the install script. I’ve found some strange behaviour in the 04_mnist_basics notebook. In the saved notebook, the end-to-end SGD example looks like this:

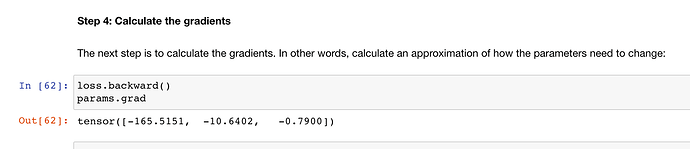

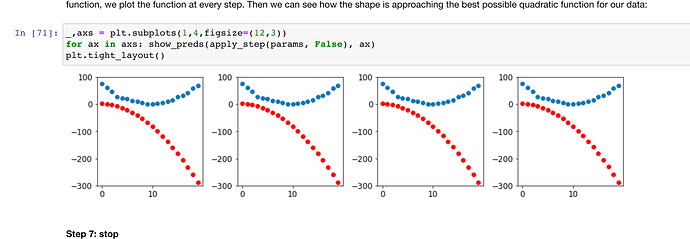

But if I just restart the kernel and re-run the notebook:

It seems that the model learns much more slowly, which is somewhat expected, because the gradients I see are much smaller than the ones in the original notebook.

Of course, the initial parameters are random, so this could happen by chance. But the results are very repeatable on my system, so I was convinced that some part of the fastai setup sets a seed.
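To make the seed question concrete, here is a minimal sketch of the check I have in mind. It assumes the parameters are drawn with `torch.randn(3).requires_grad_()` as in the notebook's SGD example; `sample_params` and the seed value 42 are just names and numbers I made up for illustration, not anything from fastai.

```python
import torch

def sample_params(seed=None):
    # Hypothetical helper: optionally fix PyTorch's global RNG,
    # then draw three random parameters the way the SGD example does.
    if seed is not None:
        torch.manual_seed(seed)
    return torch.randn(3).requires_grad_()

# Two draws with the same seed should give identical initial parameters.
seeded_a = sample_params(seed=42)
seeded_b = sample_params(seed=42)

# Two draws without reseeding continue the RNG stream, so they should differ.
unseeded_a = sample_params()
unseeded_b = sample_params()

print("same seed, same params:", torch.equal(seeded_a, seeded_b))
print("no reseed, same params:", torch.equal(unseeded_a, unseeded_b))
```

If nothing sets a seed, I would expect the initial parameters (and so the gradients) to change on every kernel restart, which is not what I observe.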

Any clue?