here’s my config: https://pcpartpicker.com/list/DZJLtJ … In addition I am using a K40 GPU

I just finished putting together my DL box a couple of days ago. I follow a simple process

- wget and run this file - this installs vim, copies all my dot files, etc, I use it on any new box I am setting up

- wget and run this file - this is a modified version of the part1 v1 script that includes everything for part1 v2 and also installs keras. It could be useful to anyone setting up their own box (I try to maintain it, and it has been tested by a couple of people in the course)

With regard to the hardware, I didn’t expect I would enjoy having a box of my own so much. I have automated AWS provisioning quite a bit, but there is still something extremely nice about being able to just open up your laptop and reconnect to your box without any further ado. I think this convenience is really valuable, as is being able to keep notebooks open and resume working on them throughout the day.

Having said that, I went for a 1080ti but I am not sure this was the best choice for me. It is definitely quite pricey and there is still a long way to go until I can use its full potential. I am thinking a 1070 might be the sweet spot given how much compute it offers and how affordable it is. At least in Poland, I could have picked one up for 50% of the price of a 1080ti.

I mention this because I don’t think you need a top end card to benefit a lot from having a DL box. Thus far my experience is that once you remove the AWS overhead, it is much easier to experiment, and I think experimenting is what much of my learning will be about, at least for the next couple of weeks. Had I had any GPU to speak of, I probably wouldn’t have even considered getting a new one.

Maybe this will change later on, but at least for now this is how I see it. If you have a GPU with any reasonable amount of RAM, I think you will be in for a real treat working on a setup of your own vs only relying on AWS.

EDIT: The consensus among more experienced folks seems to be to go for the biggest and greatest GPU you can reasonably afford, so please take my comments with a grain of salt.

I agree with that, but I wouldn’t buy something more expensive than a 1080ti - I don’t think it’s worth it. If you’re lucky enough to have more money than that, buy a 2nd one!

Would you say the RAM is the biggest reason to get the 1080Ti vs the 1070? I mean how big of a difference is the actual speed for those two GPUs?

Yes absolutely the RAM!

Ok, that is what I thought. Thanks for clarifying.

The 1080 Ti should be about 40% faster than the 1070 for CNN workloads based on the benchmarks I’ve looked into.

The new Pascal 1070 Ti was designed to slot in between the 1070 and 1080 (both also Pascal) in terms of performance, so we should expect it to be somewhere around 65% of the speed of a 1080 Ti.

| | 1070 | 1070 Ti | 1080 | 1080 Ti |
|---|---|---|---|---|
| CUDA Cores | 1920 | 2432 | 2560 | 3584 |
| FP32 (TFLOPS) | 6.5 | 8.1 | 9.0 | 11.5 |
| Memory Speed (Gbps) | 8 | 8 | 11 | 11 |
| Memory Bandwidth (GB/s) | 256 | 256 | 352 | 484 |
| Rel CNN Perf | 0.6 | | 0.7 | 1.0 |
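For anyone who wants to sanity-check the table, here is a rough Python sketch of the arithmetic. The TFLOPS and memory-speed figures are copied from the table; the bus widths (256-bit, and 352-bit for the 1080 Ti) are my own addition from the published specs, and the helper names are just for illustration. Raw FP32 ratios are only a crude proxy and won't necessarily match measured CNN performance.

```python
# Figures copied from the table in the thread. The bus widths are an added
# assumption (published memory bus widths), not something stated in the thread.
CARDS = {
    "1070":    {"fp32_tflops": 6.5,  "mem_gbps": 8,  "bus_bits": 256},
    "1070 Ti": {"fp32_tflops": 8.1,  "mem_gbps": 8,  "bus_bits": 256},
    "1080":    {"fp32_tflops": 9.0,  "mem_gbps": 11, "bus_bits": 256},
    "1080 Ti": {"fp32_tflops": 11.5, "mem_gbps": 11, "bus_bits": 352},
}


def bandwidth_gb_per_s(mem_gbps, bus_bits):
    """Per-pin data rate (Gbit/s) times bus width (bits), divided by 8 bits per byte."""
    return mem_gbps * bus_bits / 8


def relative_fp32(card, reference="1080 Ti"):
    """Raw FP32 throughput of `card` as a fraction of the reference card."""
    return CARDS[card]["fp32_tflops"] / CARDS[reference]["fp32_tflops"]


for name, spec in CARDS.items():
    bw = bandwidth_gb_per_s(spec["mem_gbps"], spec["bus_bits"])
    print(f"{name:8s} {bw:5.0f} GB/s   {relative_fp32(name):.2f}x FP32 vs 1080 Ti")
```

The bandwidth row of the table falls straight out of memory speed times bus width, which is why the 1080 Ti pulls ahead of the 1080 there despite the same memory speed.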

I did not understand your post until I ran into this need myself. Guys, ngrok is amazing if you don’t have a static IP - it lets you expose a web server running on your local machine to the internet. All you need to do is set up jupyter notebook security, run the notebook on your DL box, and open the same port with ngrok. You can then get the public URL for your DL box through the web site. So, to make sure you don’t lose access to your home server:

- setup PC auto turn on (in case something happened)

- auto launch jupyter notebook

- auto launch ngrok
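The last two steps could be sketched in Python roughly like this. It is only a sketch under assumptions: port 8888 is a guess at the usual Jupyter default, the function names are made up for illustration, and it assumes `jupyter` and `ngrok` are on the PATH and that the ngrok agent exposes its local API at the default `127.0.0.1:4040` address. Notebook security (password or token) still has to be configured separately, as mentioned above.

```python
import json
import subprocess
import urllib.request

PORT = 8888  # assumed Jupyter port; change to match your setup
NGROK_API = "http://127.0.0.1:4040/api/tunnels"  # ngrok agent's default local API address


def jupyter_command(port=PORT):
    """Command line for a headless notebook server on the given port."""
    return ["jupyter", "notebook", "--no-browser", f"--port={port}"]


def ngrok_command(port=PORT):
    """Command line that asks ngrok to tunnel HTTP traffic to the same port."""
    return ["ngrok", "http", str(port)]


def public_urls(api_payload):
    """Pull the public URLs out of the JSON text returned by the local ngrok API."""
    tunnels = json.loads(api_payload).get("tunnels", [])
    return [tunnel["public_url"] for tunnel in tunnels]


def launch(port=PORT):
    """Start both processes and return their handles. Nothing runs until this is called."""
    jupyter = subprocess.Popen(jupyter_command(port))
    ngrok = subprocess.Popen(ngrok_command(port))
    return jupyter, ngrok


def fetch_public_urls():
    """Ask the running ngrok agent which public URLs it currently exposes."""
    with urllib.request.urlopen(NGROK_API) as response:
        return public_urls(response.read().decode("utf-8"))
```

Calling `launch()` from whatever runs at boot on your box (a cron `@reboot` entry or a systemd unit, for example) would cover the auto-launch part, and `fetch_public_urls()` gives you the address without opening the web site.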

Nice. I’ve been meaning to set up reverse ssh tunneling. This seems much simpler.

There’s also the open source frp which some folks on HN recommended. Older hn discussion here

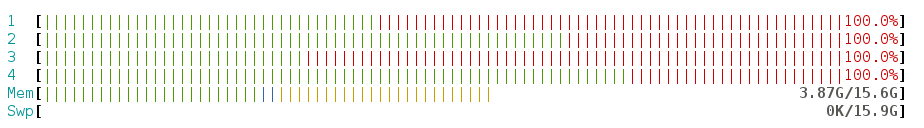

Fun fact! With an Intel Core i5-7500, 3.4GHz, 6MB CPU running the lesson 1 notebook (with data augmentation) it maxes out the CPU cores:

Meanwhile the GPU, a 1080ti, sits nearly idle. It never reached over 60 C, and nvidia-smi dmon showed utilization not exceeding 50%.
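If you want to run the same check from a script or notebook rather than watching nvidia-smi dmon, something like the following might work. It is a minimal sketch: it uses nvidia-smi's CSV query mode, the function names are my own, and the 50% threshold is just the figure from the observation above, not a rule.

```python
import subprocess

# nvidia-smi's CSV query mode: one line per GPU, e.g. "47, 58"
QUERY = [
    "nvidia-smi",
    "--query-gpu=utilization.gpu,temperature.gpu",
    "--format=csv,noheader,nounits",
]


def parse_gpu_stats(csv_text):
    """Turn the CSV output into a list of dicts, one per GPU."""
    stats = []
    for line in csv_text.strip().splitlines():
        util, temp = (int(field.strip()) for field in line.split(","))
        stats.append({"util_pct": util, "temp_c": temp})
    return stats


def read_gpu_stats():
    """Run nvidia-smi once and parse the result (needs an NVIDIA driver installed)."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_stats(result.stdout)


def looks_cpu_bound(stats, threshold_pct=50):
    """Heuristic: every GPU is at or below the threshold while training is running."""
    return all(gpu["util_pct"] <= threshold_pct for gpu in stats)
```

Sampling `read_gpu_stats()` a few times during an epoch and passing the result to `looks_cpu_bound` gives a quick hint about whether data augmentation on the CPU is the bottleneck.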

I think this is really useful to know when thinking of setting up your own box. Especially when it comes to random forests (which I completely didn’t foresee learning about, but I am super happy about the ml1 lectures being shared!), getting a stronger CPU and more RAM might not be a bad idea if you can afford it.

If I were buying the parts again I would probably look at Ryzen, would likely completely skip bigger HDD (got a 3TB one) and would have considered getting more RAM.

Oh well - the only way to learn is through experience

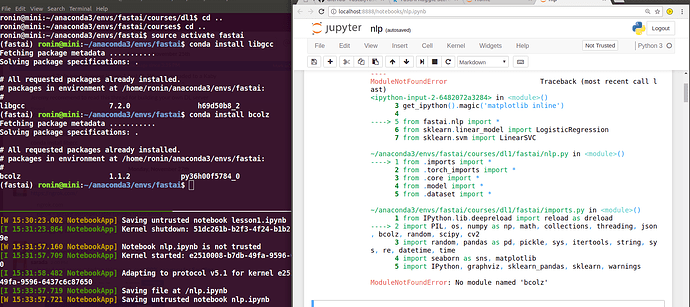

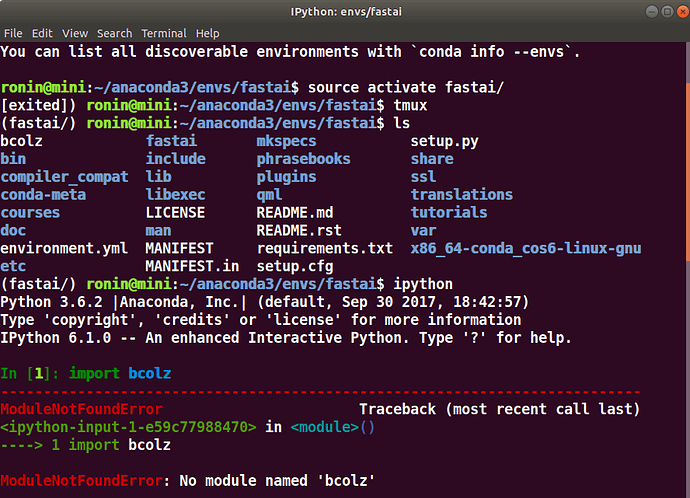

I tried to re-do everything again and followed all the installation steps. After I ran a notebook, I got the error message “No module named bcolz”. Then I ran the command conda install bcolz, but it says bcolz is already installed.

I closed everything and re-launched a terminal. The “No module named bcolz” error message persists. Please help!

If you run the python shell from the terminal, can you import bcolz? If so, maybe try killing the jupyter notebook process and starting it again, making sure you do source activate fastai first. That sets up your environment to point to the right python.
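One way to check this from both places is to run the same few lines in the terminal python shell and in a notebook cell and compare the output. This is just a sketch using the standard library, and the function names are mine. If the two `python` paths differ, the notebook is running from a different environment than the one where bcolz was installed.

```python
import importlib.util
import sys


def module_available(name):
    """True if the current interpreter can find a top-level module called `name`."""
    return importlib.util.find_spec(name) is not None


def environment_report(module="bcolz"):
    """Which python is running, and can it see the module?"""
    return {
        "python": sys.executable,  # full path of the interpreter in use
        "prefix": sys.prefix,      # root of the active environment
        "module": module,
        "importable": module_available(module),
    }


print(environment_report())
```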

sorry I’m out of ideas

Maybe add the --force flag to conda install bcolz?

I did the same gpu load check as you yesterday (just bought a 1080 ti after reviewing all the notes on this thread again). I’m still really happy with my 6 core Ryzen, but I’d pay up for a Threadripper on my next build (supports way more ram, NVMe ssds, gpus).

IMHO you built it in a good way. Having a solid GPU and not having to pay the hourly cloud rates for it, especially given how long it takes to train models as we progress, is awesome. It’s comparatively cheap to spin up a bunch of CPUs with reasonable RAM to throw at shorter running ml models (and the AWS credits will go a long way).

try

conda env list

That will show the environments on your system.

Looks kind of weird that your environment is named fastai/
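If you would rather check the environment names from Python, here is a small sketch that reads the JSON form of the same command. It assumes `conda` is on the PATH and that `conda env list --json` returns an `envs` list of paths; the function names are made up for illustration. Note that the base install shows up under the name of its install directory here.

```python
import json
import os
import subprocess


def env_names(conda_json):
    """Environment names derived from the paths in conda's JSON output."""
    paths = json.loads(conda_json).get("envs", [])
    # normpath drops any trailing slash, so ".../envs/fastai/" still yields "fastai"
    return [os.path.basename(os.path.normpath(path)) for path in paths]


def has_env(conda_json, name):
    """True if an environment with exactly this name is listed."""
    return name in env_names(conda_json)


def read_conda_envs():
    """Run conda once and return its JSON output as text (needs conda installed)."""
    result = subprocess.run(
        ["conda", "env", "list", "--json"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

With conda installed, `has_env(read_conda_envs(), "fastai")` tells you whether the environment that source activate fastai expects is actually there.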

Thanks a lot @rob @radek and Jeremy. Finally, it works.

Alternatively, pip install fastai is available - thread

Glad to hear it. What was the solution?