@jeremy Could you tell us the specs of your deep learning box?

These links might help, please note that some are like 12 months old so don’t forget to check for up-to-date versions of CPU/GPU/RAM.

Thanks for the help. I was just wondering what he’s running at the moment.

In relation to building our own workstations, could the course staff comment on what a usable / minimal GPU for the course is (is a GTX 1060 6GB enough)?

Going forward for research, if the cost isn’t a restriction (but we don’t want to spend more than needed), is there a big benefit for going to a 1080ti over a 1080 for Convolutional Autoencoders, RL, ES and GAN (or can you point to how we research the demands of various model / approach types)?

I have 4 * GTX 1080ti GPUs, which belong to the university. A GTX 1060 6GB will work fine for the course, although the 8GB version (or better still, the 1070) will be quite a bit more convenient.

The big benefit of going 1080ti over 1080 is the extra RAM (11GB versus 8GB, nearly 40% more), which is pretty handy. But a 1080 is still a great GPU. Buy whatever you can afford.
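To get a feel for what the extra GPU RAM buys you, here is a rough sketch in Python. It assumes memory use is a fixed overhead plus a constant cost per training sample, which is a simplification. The card sizes are the nominal ones; the 1.5 GiB overhead and 60 MiB per sample are hypothetical placeholders, not measurements of any real model, so plug in your own numbers.

```python
# Rough sketch: how the largest batch that fits grows with GPU memory.
# The model figures used below are hypothetical, not measurements.

GIB = 1024 ** 3
MIB = 1024 ** 2


def max_batch_size(gpu_mem_gib, fixed_overhead_gib, per_sample_mib):
    """Largest batch that fits, assuming
    memory = fixed_overhead + batch_size * per_sample (a simplification)."""
    free_bytes = (gpu_mem_gib - fixed_overhead_gib) * GIB
    per_sample_bytes = per_sample_mib * MIB
    return max(0, int(free_bytes // per_sample_bytes))


# Nominal memory sizes of the cards discussed in this thread.
cards = {"GTX 1060": 6, "GTX 1070": 8, "GTX 1080": 8, "GTX 1080 Ti": 11}

for name, mem_gib in cards.items():
    # Hypothetical model: 1.5 GiB of weights, optimizer state and CUDA
    # context, plus 60 MiB of activations per training sample.
    print(name, max_batch_size(mem_gib, 1.5, 60))
```

Under these assumptions the usable batch size grows faster than the raw memory figure, because the fixed overhead is paid only once.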

Thanks for that. I was wondering about other parts of the CPU as well… I have sparse knowledge of them… so I’m not sure which ones are the best…

I was wondering about the CPU. I’ve read, and the same is mentioned in the last link provided by @EricPB, that we should look carefully at the CPU we get, since the number of PCI-e lanes supported by the processor will determine how well you can utilise your GPUs.

Any ideas on this? I am planning to go for the Xeon E5-1620v4, but it and a motherboard that supports it increase the price of the whole setup.

Thanks @jeremy.

@codeck - I’ve built a number of workstations for other optimization / simulation / machine learning work. I’ve had Xeons in the past (no complaints), but am very happy with my most recent AMD box - it gave great performance for the dollar (which let me go for faster RAM and a faster SSD). Afaik, 4-8 core Ryzens max out at 64GB RAM and support 2 GPUs (@varmonas - the pesky PCI lanes).

If you want a box that will support more GPUs, then you’d be looking at a Threadripper or Xeon. Regardless of processor (Intel or AMD), it is typically worth spending a little more on a motherboard with a better chipset, particularly if you want to support multiple GPUs at full speed, M.2 SSD, have room to expand RAM, etc.

I’m sure there are others who will be able to contribute much better detail.
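As a rough way to reason about the lane question, here is a small Python sketch. It assumes the CPU's lanes are split evenly between GPUs and rounded down to a standard link width. Real motherboards often split unevenly (for example x16/x16/x8), and the lane counts in the example calls are illustrative, so check the spec sheet of the exact CPU and board.

```python
# Sketch: widest PCIe link every GPU can get if the CPU's lanes are
# split evenly. Lane counts in the examples are illustrative only.

LINK_WIDTHS = (16, 8, 4, 1)  # link widths considered, widest first


def lanes_per_gpu(cpu_lanes, num_gpus, reserved=0):
    """Return the widest link width (16, 8, 4 or 1) that every GPU can
    get, or 0 if there are not enough lanes.

    `reserved` is the number of lanes set aside for other devices,
    e.g. 4 for an NVMe SSD wired to the CPU.
    """
    if num_gpus <= 0:
        raise ValueError("num_gpus must be positive")
    share = (cpu_lanes - reserved) // num_gpus
    for width in LINK_WIDTHS:
        if width <= share:
            return width
    return 0


# Illustrative: a 16-lane consumer CPU versus a 40-lane workstation CPU.
for lanes in (16, 40):
    for gpus in (1, 2, 4):
        print(f"{lanes} lanes, {gpus} GPU(s): x{lanes_per_gpu(lanes, gpus)}")
```

Whether x8 instead of x16 actually slows training depends on how much data moves between host and GPU, so treat the result as a planning aid only.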

You can build a solid GTX 1070 rig for about $1200+tax, maybe less if you shop around:

https://pcpartpicker.com/list/9ZHJhq

Two 1080tis and upgraded components go for around $3,000:

https://pcpartpicker.com/list/wwHJhq

If I were just starting I would go with a 1070 and then add a Volta Titan X which should be out next year.

I’m hoping for a backpackable box. Perhaps a mini-ITX built around https://www.zotac.com/us/page/zotac-geforce-gtx-1080-ti-arcticstorm-mini GPU. The fallback is a luggable notebook.

Here’s another “Build a DL box from scratch” by a former online student of Fast.ai Part 1 & 2 (check his posts about learning DS on his own, and landing a job, btw).

1,000 CAN $ = 800 US$ approx.

No need to go crazy with multi-GPU setups, 8-core/16-thread CPUs, or 64GB RAM: there are some very good competitors, winning medals, on Kaggle who use a 4-core CPU, 16GB RAM and a GTX 1060 6GB.

Today, the AMD Ryzen 5 series is a great spot to start your build, with 4 cores/8 threads.

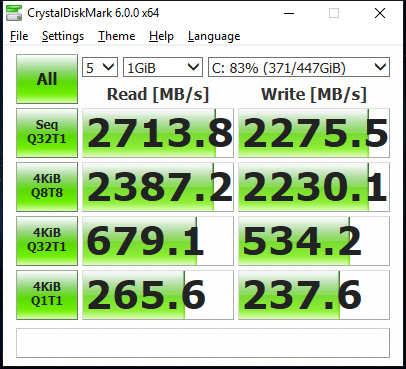

I wouldn’t bother getting the expensive Xeon series. It makes everything more expensive for no good reason. Instead get the fastest consumer CPU you can reasonably afford. Also, plenty of RAM is helpful, and even more important is a fast SSD drive (or better still, NVMe).

You could check out my specs https://pcpartpicker.com/list/Dwcpcc

and also the software stacks https://medium.com/towards-data-science/my-first-step-for-deep-learning-adventure-with-udacity-and-coursera-ee135042ac1e

Currently using a Core i3 and a 1070 8GB.

I’ve quit trying to find a personal DL laptop and am now focusing on a DL desktop because performance and pricing are much better.

I’m now focusing on an Intel 8700K system: https://pcpartpicker.com/user/bsalita/saved/W3Tm8d

I’m backordered on an Intel Optane SSD 900P (480GB, AIC PCIe x4, 20nm, 3D XPoint) which labels NVMe as so 2016. Tom’s Hardware review of Intel Optane 900P 3D XPoint: http://www.tomshardware.com/reviews/intel-optane-ssd-900p-3d-xpoint,5292-3.html

I’m thinking about buying an Akitio Node for $250, putting an Nvidia GTX 1080 Ti into it, and then connecting it to my MacBook via Thunderbolt.

It won’t be as fast as a direct connection, but it will allow me to move it between computers and let me stay in MacOS where all my dev tools live.

In all honesty, I think that for many (most? all?) applications, especially at the learning stage we are currently at, speed is secondary. If you can afford the solution you describe and believe it will give you the environment you feel most comfortable with, and thus translate into a productivity boost for learning / experimentation, then by all means you should go for it!

The only thing I would investigate before the purchase though is whether this works with CUDA 8 on MacOS, or better yet, whether anyone has had any success running PyTorch on it. I assume the answer is yes, so if you can confirm that I think you are good to go.

So interesting that the pursuit of our technical development can provide us with reasons for buying such nice toys.

I already have a home Linux machine with an old GeForce in working state. It is just rather slow - training for lesson 1 takes 10+ mins and I have to be careful about memory. The graphics card was already due for a replacement. I also happen to have a much faster iMac that I’d rather hook it up to; I also use it for various Adobe products that will happily utilize the eGPU as well.

People have managed to get CUDA & PyTorch running on MacOS. I’m not terribly worried about that even if I have to patch things myself. I played around with CUDA on an old 2013 MacBook Pro with a built-in Nvidia GT 650M ages ago.

I’d be interested to hear how you go, if you do get the external enclosure. It sounds like it should be a good option, assuming the data transfer is fast enough.
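For a back-of-the-envelope check on the data transfer, here is a Python sketch comparing theoretical PCIe 3.0 bandwidth at x4 and x16. It assumes the enclosure link behaves like PCIe 3.0 x4 (what Thunderbolt 3 can carry at most) and that an internal card gets x16. Real throughput over Thunderbolt will be lower, and an older Thunderbolt port lower still. The batch shape is a hypothetical example.

```python
# Sketch: theoretical host-to-GPU transfer time over PCIe 3.0 links.
# Assumes x4 for an eGPU enclosure and x16 for an internal slot;
# real-world throughput is lower than these figures.


def pcie3_bandwidth_gb_s(lanes):
    """Theoretical one-direction PCIe 3.0 bandwidth in GB/s:
    8 GT/s per lane with 128b/130b encoding, 8 bits per byte."""
    return lanes * 8e9 * (128 / 130) / 8 / 1e9


def transfer_ms(num_bytes, lanes):
    """Milliseconds needed to move num_bytes across the link."""
    return num_bytes / (pcie3_bandwidth_gb_s(lanes) * 1e9) * 1e3


# Hypothetical batch: 64 RGB images of 224x224 pixels stored as float32.
batch_bytes = 64 * 3 * 224 * 224 * 4

for lanes in (4, 16):
    print(f"x{lanes}: {pcie3_bandwidth_gb_s(lanes):.2f} GB/s, "
          f"{transfer_ms(batch_bytes, lanes):.1f} ms per batch")
```

If the GPU spends far longer computing on each batch than the link spends moving it, the narrower link should matter little; data-heavy pipelines with light models are where it would show.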

It turned out this course is much more intensive than I expected. Tons of examples by Jeremy. People are participating in tens of different competitions. I planned to use a corporate AWS account (I have roughly a $100 budget approved for educational purposes) and wrote scripts to start/stop a p2 instance (data is not lost if you stop the instance instead of terminating it), but they are just useless as you need a DL box 24/7. If you are serious about DL, you need to have your own box.
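To put rough numbers on cloud versus own box, here is a minimal Python sketch of the break-even point. The $0.90/hour rate is an assumed on-demand price for a p2 instance, so check current pricing. The $1200 box cost is the GTX 1070 build quoted earlier in this thread, and electricity defaults to zero for simplicity.

```python
# Sketch: after how many GPU-hours does buying a box beat renting?
# The hourly cloud rate used below is an assumption, not a quote.


def break_even_hours(box_cost_usd, cloud_rate_usd_per_hour,
                     power_cost_usd_per_hour=0.0):
    """Hours of use after which the box has paid for itself."""
    saving_per_hour = cloud_rate_usd_per_hour - power_cost_usd_per_hour
    if saving_per_hour <= 0:
        raise ValueError("cloud must cost more per hour than running the box")
    return box_cost_usd / saving_per_hour


def hours_on_budget(budget_usd, cloud_rate_usd_per_hour):
    """How many cloud hours a fixed budget buys."""
    return budget_usd / cloud_rate_usd_per_hour


hours = break_even_hours(1200, 0.90)
print(f"break-even after {hours:.0f} hours ({hours / 24:.0f} days of 24/7 use)")
print(f"a $100 budget buys about {hours_on_budget(100, 0.90):.0f} hours")
```

The sketch ignores storage charges, resale value and the time spent on setup, all of which shift the answer somewhat.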

I found the following tool

http://www.ngrok.com

You download the tool and then run it with

./ngrok http 8888

You will see a screen with a URL to copy, and that’s it.

This will allow you to set up a tunnel to your personal DL box at home.

and avoid this

Seems very simple and it works with Windows, Mac and Ubuntu.

If you are inside a corporate network this may not work because of the anonymizer.

Every single time that you restart Jupyter you will have to use the new token.
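Since both the tunnel URL and the token can change, a small helper to rebuild the link may save some copy and paste. This is a sketch under assumptions: that the running ngrok client exposes its local inspection API at `http://127.0.0.1:4040/api/tunnels` and returns JSON with a `tunnels` list whose entries carry a `public_url` field. Verify both against your ngrok version. The helper names are made up for illustration, and the token still has to be copied from Jupyter's startup output.

```python
# Sketch: build the remote notebook link from ngrok's local API response.
# The endpoint and the JSON field names are assumptions to verify.
import json
from urllib.parse import urlencode
from urllib.request import urlopen

NGROK_API = "http://127.0.0.1:4040/api/tunnels"  # assumed default address


def fetch_tunnels(api_url=NGROK_API):
    """Ask the locally running ngrok client for its active tunnels."""
    with urlopen(api_url, timeout=5) as resp:
        return json.load(resp)


def public_url(payload, prefer_scheme="https"):
    """Pick a tunnel URL from the payload, preferring https if present."""
    urls = [t["public_url"] for t in payload.get("tunnels", [])]
    for url in urls:
        if url.startswith(prefer_scheme + "://"):
            return url
    return urls[0] if urls else None


def notebook_link(base_url, token):
    """Append the Jupyter token as a query parameter."""
    return f"{base_url.rstrip('/')}/?{urlencode({'token': token})}"
```

With ngrok and Jupyter both running on the box, `notebook_link(public_url(fetch_tunnels()), token)` would give the address to open from your laptop, where `token` is the value Jupyter printed when it started.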

Your Personal DL box will work like AWS

Not really, but at least you will have access from your laptop on the road.

Good Luck!!