When I was looking at the notebook, the jump to returning sequences caused me a bit of confusion. Since teaching and explaining is a sure way to learn, I thought I would post an explanation here, going step by step through what the model is doing.

So we’ve got two places where information is going to be entering from. The bottom of Jeremy’s graph needs to be initialized as zeros. This array of zeros has 75,110 rows of 42 columns. The number of rows corresponds to the number of full sets of 8 predictions we will be making, so it has the same number of rows as any of the other 8 inputs. If this doesn’t make sense, hopefully it will soon.

But let’s just go on a character-by-character journey. First, let’s take one single row of the zeros and pass it through a dense_in layer. You will remember that this uses glorot initialization and activates with relu, outputting a vector of 256 hidden nodes. Because we fed in zeros, these are all still going to be zero, go figure. So we’ve essentially changed a vector of 42 zeros into a vector of 256 zeros. Nice? Nice.
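The zeros step can be sketched in plain NumPy. This is a minimal sketch rather than the notebook's Keras code: `glorot_uniform` is a hand-written helper, and the bias is assumed to start at zero, which is what keeps the output at exactly zero.

```python
import numpy as np

rng = np.random.default_rng(0)
n_rows, n_fac, n_hidden = 75110, 42, 256

def glorot_uniform(fan_in, fan_out, rng):
    """Glorot uniform init: U(-limit, limit) with limit = sqrt(6 / (fan_in + fan_out))."""
    limit = np.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-limit, limit, size=(fan_in, fan_out))

zeros = np.zeros((n_rows, n_fac))            # one row of 42 zeros per training example
W_in = glorot_uniform(n_fac, n_hidden, rng)  # stand-in for the dense_in weights
b_in = np.zeros(n_hidden)                    # assumption: bias is initialized to zero

one_row = zeros[0]                                # shape (42,)
hidden0 = np.maximum(0.0, one_row @ W_in + b_in)  # relu(x W + b)

print(hidden0.shape, bool(hidden0.any()))    # (256,) False
```

Whatever the random weights are, zeros times anything is zeros, so the result is 256 zeros as long as the bias is zero.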

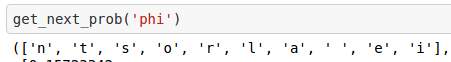

So now we’ve got some sort of hidden state of zeros. Entirely separate from this action, we pass our first character into an Input layer which then goes into an embedding layer where this character is now represented by 42 weights. These weights are initialized uniformly.

In this case, because we are using a mini-batch size of one, and Embedding layers return a 3D tensor of size (batch_size, sequence_length, output_dim), the output will be of size (1, 1, 42). This embedding output is then flattened, which has no real consequence other than to drop the needless dimension and allow us to pass it to a dense_in layer. Again, this takes the 42 uniformly initialized latent factors and matrix multiplies them by glorot initialized weights, producing a 256 node vector.
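To make the shapes concrete, here is a rough NumPy sketch of the lookup, flatten, and dense_in steps. The vocabulary size, the character index, and the uniform range of the embedding are placeholder values chosen for illustration, not values taken from the notebook.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab_size, n_fac, n_hidden = 86, 42, 256    # vocab_size is a hypothetical value

# Embedding table: one row of 42 latent factors per character (range is an assumption).
emb = rng.uniform(-0.05, 0.05, size=(vocab_size, n_fac))

char_idx = np.array([[7]])                   # (batch_size=1, sequence_length=1), index is made up
embedded = emb[char_idx]                     # lookup -> (1, 1, 42)
flat = embedded.reshape(embedded.shape[0], -1)   # flatten -> (1, 42)

# Glorot uniform weights standing in for dense_in.
limit = np.sqrt(6.0 / (n_fac + n_hidden))
W_in = rng.uniform(-limit, limit, size=(n_fac, n_hidden))
c_dense = np.maximum(0.0, flat @ W_in)       # relu -> (1, 256)

print(embedded.shape, flat.shape, c_dense.shape)  # (1, 1, 42) (1, 42) (1, 256)
```

The flatten only removes the length-one sequence axis; the 42 numbers themselves are untouched.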

Now, remember that zeros hidden state that we initialized? Just for shits and giggles, we’re going to pass that through another dense layer, this time a dense_hidden, which will use an identity matrix for its initial weights and output the same vector of 256 zeros. Why? Because it’s in the code. And Hinton says so.

This new vector of 256 zeros (which is the same as the old vector of 256 zeros) will then be merged with the aforementioned character vector of 256 values (recall: created by multiplying the 42 uniformly initialized embedding weights with the glorot initialized weights from the dense_in layer before going through relu) by adding the two vectors together. I think it is helpful to think of this as a completed hidden layer for the first character. This is the layer that continually gains “state” and carries forward to all subsequent characters.
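A small NumPy sketch of the identity step and the sum merge, under the assumption that dense_hidden has no bias contribution at initialization. The character vector here is a random stand-in, not a real dense_in output.

```python
import numpy as np

rng = np.random.default_rng(0)
n_hidden = 256

W_hidden = np.eye(n_hidden)                  # identity-initialized dense_hidden weights
hidden = np.zeros(n_hidden)                  # the zeros state that came out of dense_in
hidden = np.maximum(0.0, hidden @ W_hidden)  # relu(h I) = h, so still all zeros

# Stand-in for the first character's dense_in output (non-negative, like a relu output).
c_dense = rng.uniform(0.0, 1.0, size=n_hidden)

merged = c_dense + hidden                    # the "sum" merge
print(np.array_equal(merged, c_dense))       # True
```

So for the very first character, the merged hidden state is simply the character's own 256 values; the zeros contribute nothing yet.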

So, this hidden state is going to be preserved for the next character, but before we get to that, we need to estimate the first output, which is guessing the second character from the context of the first. To achieve this, we pass it through a dense_out layer, with glorot initialized weights, and use a softmax function to estimate the next character. This softmax dense_out layer ONLY BELONGS TO THE SECOND CHARACTER GUESS. All associated weights will only affect this first guess, the prediction of the second character.
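The output step can be sketched like this in NumPy. The hidden vector is a random stand-in, `vocab_size` is a hypothetical value, and `softmax` is a hand-written helper, so treat it as an illustration of the arithmetic rather than the notebook's code.

```python
import numpy as np

rng = np.random.default_rng(0)
n_hidden, vocab_size = 256, 86               # vocab_size is a hypothetical value

def softmax(z):
    """Numerically stable softmax over a 1D vector."""
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

hidden = rng.uniform(0.0, 1.0, size=n_hidden)    # stand-in for the merged hidden state

# Glorot uniform weights standing in for dense_out.
limit = np.sqrt(6.0 / (n_hidden + vocab_size))
W_out = rng.uniform(-limit, limit, size=(n_hidden, vocab_size))

probs = softmax(hidden @ W_out)              # one probability per character in the vocabulary
predicted = int(np.argmax(probs))            # index of the most likely next character

print(probs.shape)                           # (86,)
```

The probabilities sum to one, and the guess for the next character is whichever index gets the largest share.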

It can then back propagate to try to reduce its error. So now these initialized weights will have been changed a bit in, hopefully, the right direction. The backprop that will have occurred for the first character’s layers will not have had any effect on the subsequent characters’ layers, except for some of the context that is building up in the recurrent hidden layer.

Alright, now character two is going to go through its own embedding layer, again with its own initialization, which is then passed to its own glorot initialized, relu activated dense_in layer just like char one was.

The PREVIOUSLY MERGED hidden layer (the first character merged with zeros) will go through its own dense_hidden layer with relu activation and identity initialization. It then merges again, this time with the output of the second character’s embedding having gone through its dense_in.

A second output layer, unique to predicting the 3rd character given the first two, with glorot initialized weights, will use softmax again to predict the 3rd character. Backprop ensues.

The third character comes in, goes through dense_in, the previously merged hidden goes through another hidden dense layer with identity initialization. Why? Who knows. These two merge again. Another unique output layer predicts the 4th character, and backpropagates.
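One way to see what the repeated identity-then-merge steps do is to run them in a loop. In this sketch the per-character dense_in outputs are random non-negative stand-ins, and dense_hidden is held at its identity initialization with no bias, so it only describes the state before any training has moved the weights.

```python
import numpy as np

rng = np.random.default_rng(0)
n_hidden, cs = 256, 8                        # 8 characters in, 8 predictions out

W_hidden = np.eye(n_hidden)                  # dense_hidden at its identity initialization
char_vecs = rng.uniform(0.0, 1.0, size=(cs, n_hidden))  # stand-ins for dense_in outputs

hidden = np.zeros(n_hidden)                  # state starts from the zeros input
states = []
for c_dense in char_vecs:
    hidden = np.maximum(0.0, hidden @ W_hidden)  # dense_hidden: relu(h I) = h while h >= 0
    hidden = hidden + c_dense                    # merge by sum with the new character
    states.append(hidden)                        # this is what each output layer would see

states = np.stack(states)                    # shape (8, 256)
print(np.allclose(states, np.cumsum(char_vecs, axis=0)))  # True
```

At initialization, then, the hidden state is just a running sum of everything seen so far. That may be part of the answer to the "why" above: the identity start lets earlier characters pass through untouched until training learns something better.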

So on, so forth.

Now, when the characters go through again, they will go through the exact same process, but with lots of slightly tuned parameters from each earlier pass, and the model continues learning in this way. Bear in mind that the hidden state will be reinitialized with zeros. So the state that has built up from the characters being input will be lost, but the weights in the embeddings and other layers will have moved.

And that, I sure as hell hope, is how the “returning sequences” section works.

For the sake of anyone else wondering this same thing: Jeremy was switching between 2 different notebooks, char-rnn and char-dnn. All of the code in char-dnn can be found in the Lesson 6 Jupyter Notebook.
